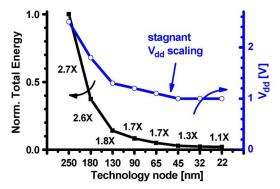

Using Moore’s law as the metric of progress has become misleading: starting around the 65-nm node, improvements in packing densities no longer translate to proportional increases in performance or energy efficiency. Researchers predict that near-threshold computing could restore the relationship between transistor density and energy efficiency. Credit: Dreslinski, et al. ©2010 IEEE.

(PhysOrg.com) -- While electronic devices have greatly improved in many regards, such as storage capacity, graphics, and overall performance, they still have a weight hanging around their neck: they’re huge energy hogs. When it comes to energy efficiency, today’s computers, cell phones, and other gadgets are little better off than those from a decade or more ago. The problem of power goes beyond being green and saving money. For electrical engineers, power has become the primary design constraint for future electronic devices. Without lower power consumption, improvements made in other areas of electronic devices could be useless, simply because there isn’t enough power to support them.

In a recent study, Ronald Dreslinski and colleagues at the University of Michigan investigated a solution to the power problem: a method called near-threshold computing (NTC). In the NTC method, electronic devices operate at lower voltages than normal, which reduces energy consumption. The researchers predict that, by optimizing future computer systems for low-voltage operation, NTC could reduce their energy requirements by 10 to 100 times or more. Unfortunately, low-voltage operation also involves trade-offs: specifically, performance loss, performance variation, and memory and logic failures.

Continuing Moore’s law

As the researchers explain, reducing power consumption is essential for allowing the continuation of Moore’s law, which states that the number of transistors on a chip doubles about every two years. Continuing this exponential growth is becoming more and more difficult, and power consumption is the largest barrier to meaningful increases in chip density. While engineers can design chips to hold additional transistors, power consumption has begun to prohibit these devices from actually being used.

In their words, engineers are currently facing “a curious design dilemma: more gates can now fit on a die, but a growing fraction cannot actually be used due to strict power limits. … It is not an exaggeration to state that developing energy-efficient solutions is critical to the survival of the semiconductor industry.”

In the past, technologies that required large amounts of power were eventually replaced by more energy-efficient technologies; for example, vacuum tubes were replaced by transistors. Today, transistors are arranged using CMOS (complementary metal-oxide-semiconductor) circuit design techniques. Since beyond-CMOS technologies are still far from being commercially viable, and large investments have been made in CMOS-based infrastructure, the Michigan researchers predict that CMOS will likely be around for a while. For this reason, solutions to the power problem must come from within CMOS itself.

“NTC is an enabling technology that allows for continued scaling of CMOS-based devices, while significantly improving energy efficiency,” Dreslinski told PhysOrg.com. “The major impact of the work is that, for a fixed battery lifetime, significantly more transistors can be used, allowing for greater functionality. Particularly, [NTC allows] the full use of all transistors offered by technology scaling, eliminating ‘Dark Silicon’ that occurs as we scale to future technology nodes beyond 22 nm where ‘more transistors can be placed on chip, but will be unable to be turned on concurrently.’”

Operating at threshold voltage

Near-threshold computing could be the key to decreasing power requirements without overturning the entire CMOS framework, the researchers say. Although low-voltage computing is already popular as an energy-efficient technique for ultralow-energy niche markets such as wristwatches and hearing aids, its large circuit delays lead to large energy leakages that have made it impractical for most computing segments. So far, these ultralow-energy devices have operated at extremely low “subthreshold” voltages, from around 200 millivolts down to the theoretical lower limit of 36 millivolts. Conventional voltage operation is about 1.0 volts. Meanwhile, near-threshold operation occurs around 400-500 millivolts, or near a device’s threshold voltage.
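To give a feel for why lowering the supply voltage saves energy, here is a minimal sketch based on the textbook first-order model in which CMOS switching energy scales with the square of the supply voltage. That model is an assumption introduced here for illustration, not the analysis used in the Michigan paper; it ignores leakage energy and circuit delay, so it should not be expected to reproduce the study's projected savings. The function name and the operating-point labels are hypothetical; the voltages are the ones quoted above.

```python
def dynamic_energy_ratio(v_nominal: float, v_scaled: float) -> float:
    """Return how many times less switching energy is used at v_scaled.

    Assumes the first-order model E_dynamic ~ C * V^2, with the same
    switched capacitance C at both voltages. Leakage is ignored.
    """
    if v_nominal <= 0 or v_scaled <= 0:
        raise ValueError("voltages must be positive")
    return (v_nominal / v_scaled) ** 2


# Supply voltages quoted in the article, in volts.
NOMINAL_V = 1.0
OPERATING_POINTS = {
    "near-threshold (upper)": 0.5,
    "near-threshold (lower)": 0.4,
    "subthreshold": 0.2,
}

for label, volts in OPERATING_POINTS.items():
    ratio = dynamic_energy_ratio(NOMINAL_V, volts)
    print(f"{label}: {volts:.1f} V -> about {ratio:.2f}x less switching energy")
```

In this simplified picture the subthreshold point looks best, but the square-law term leaves out exactly the cost described above: at such low voltages circuits become so slow that leakage grows to dominate the energy budget.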

Operating at near-threshold rather than subthreshold voltages could provide a compromise, enabling devices to require less energy while minimizing the energy leakage. This improved trade-off could potentially open up low-voltage design to mainstream semiconductor products. However, near-threshold computing still faces the other three challenges mentioned earlier: a 10-fold performance loss, a five-fold increase in performance variation, and an increase in functional failure rate of five orders of magnitude. These challenges have not been widely addressed so far, but the Michigan researchers spend the bulk of their analysis reviewing current research aimed at overcoming these barriers.

Part of the attraction of near-threshold computing is that it could have nearly universal applications in high-demand segments, such as data centers and personal computing. As the Web continues to grow, more data centers and servers are needed to host websites, and their power consumption is currently doubling about every five years.
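The doubling periods quoted in this article (five years for data-center power, two years for transistor counts under Moore's law) can be turned into yearly growth rates with a little arithmetic. The sketch below assumes steady exponential growth, which is a simplification made here; the function names are hypothetical.

```python
def annual_growth_rate(doubling_years: float) -> float:
    """Fractional growth per year implied by a fixed doubling period."""
    if doubling_years <= 0:
        raise ValueError("doubling period must be positive")
    return 2 ** (1 / doubling_years) - 1


def growth_factor(years: float, doubling_years: float) -> float:
    """Total multiplication after `years` of steady exponential growth."""
    if doubling_years <= 0:
        raise ValueError("doubling period must be positive")
    return 2 ** (years / doubling_years)


# Doubling periods quoted in the article, in years.
TRENDS = {
    "data-center power": 5.0,
    "transistors per chip": 2.0,
}

for name, period in TRENDS.items():
    rate = annual_growth_rate(period)
    decade = growth_factor(10, period)
    print(f"{name}: about {rate:.1%} per year, {decade:.0f}x over a decade")
```

Under that assumption, a five-year doubling period works out to roughly 15 percent growth per year, or a fourfold increase over a decade.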

Personal computing devices, many of which are portable, could also benefit from increased battery lifetime due to reduced power needs. Dreslinski notes that previous studies have shown that the impact of NTC on devices will vary based on a particular consumer’s usage.

“A user who only uses their device for making phone calls won't see much impact because most of the power is consumed outside the CPU,” he said. “However, users who utilize music/video players and other compute-intensive tasks on their phone could see significant battery life improvements and reduced heat generated by the device. Quantifying these numbers is difficult based on the varying workloads of users coupled with parallel advances in battery technologies. My unofficial estimate would be a 1.5x to 2x improvement in battery lifetime, although some users could see significantly more or less.”
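Dreslinski's point that the benefit depends on how much of a device's power the CPU consumes can be illustrated with a simple Amdahl-style calculation. This is a rough sketch and not the method behind his estimate: the CPU power shares below are hypothetical values chosen for illustration, the function name is invented here, and the model assumes the workload and everything outside the CPU stay the same.

```python
def battery_life_gain(cpu_power_fraction: float, cpu_energy_reduction: float) -> float:
    """Battery-life multiplier when only the CPU's share of power shrinks.

    cpu_power_fraction: share of total device power drawn by the CPU (0 to 1).
    cpu_energy_reduction: factor by which CPU energy falls (10 means 10x less).
    """
    if not 0.0 <= cpu_power_fraction <= 1.0:
        raise ValueError("cpu_power_fraction must be between 0 and 1")
    if cpu_energy_reduction <= 0:
        raise ValueError("cpu_energy_reduction must be positive")
    # Power that remains: the untouched non-CPU share plus the reduced CPU share.
    remaining = (1.0 - cpu_power_fraction) + cpu_power_fraction / cpu_energy_reduction
    return 1.0 / remaining


# Hypothetical usage profiles; the CPU shares are illustrative assumptions.
PROFILES = {
    "mostly phone calls": 0.10,
    "music and video": 0.50,
}

for profile, cpu_share in PROFILES.items():
    gain = battery_life_gain(cpu_share, cpu_energy_reduction=10.0)
    print(f"{profile}: about {gain:.2f}x battery life")
```

Because the non-CPU share is untouched, even a very large reduction in CPU energy yields only a modest gain when the CPU draws a small fraction of total power.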

Near-threshold computing could also be useful in sensor-based systems, which have applications in biomedical implants, among other areas. While these sensors may have a size of about 1 mm³, they often require batteries that are many orders of magnitude larger than the electronics themselves. By reducing the power requirements by up to 100 times in sensors, near-threshold computing could open the doors to many possible future designs.

More information: Ronald G. Dreslinski, Michael Wieckowski, David Blaauw, Dennis Sylvester, Trevor Mudge. “Near-Threshold Computing: Reclaiming Moore’s Law Through Energy Efficient Integrated Circuits.” Proceedings of the IEEE. Vol. 98, No. 2, February 2010. DOI: 10.1109/JPROC.2009.2034764

Copyright 2010 PhysOrg.com.
