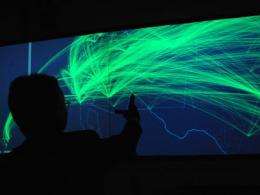

Ishii works with the g-speak "wall" interactive control system in his Tangible Media Group lab. Photo / Donna Coveney

(PhysOrg.com) -- At a time when ever more aspects of our lives are moving toward the virtual, online world -- stores, newspapers, games and even social interactions -- Hiroshi Ishii seems to be swimming against that current: His aim is to bring the world of computers into more real and tangible form, seamlessly integrated with our daily lives.

Instead of just using flat screens, keyboards and mice, he wants people to be able to interact with their computers and other devices by moving around and by handling real physical objects. In short, by doing what comes naturally.

Ishii came to MIT's Media Lab 14 years ago, at the urging of the lab's founder and then-director Nicholas Negroponte, after having worked for the Japanese telephone company NTT for 16 years. "He was bursting with energy, even when you talked to him you felt he was going to burst with excitement," Negroponte recalls. "MIT was the place for him to release it, and he did." At NTT, Negroponte says, Ishii was "more or less in a closet. I urged him to basically step out of the closet, onto the world stage. He did so with excellence."

He immediately set about looking for ways to replace conventional Graphical User Interfaces (GUIs), exemplified by the Windows and Mac operating systems, with what he calls Tangible User Interfaces (TUIs).

That vision has sprung to life in Ishii's Tangible Media Group at the Media Lab. One early example was a tabletop system called the Urban Planning Workbench, where an architect or city planner can move framework models of planned buildings, and the tabletop instantly responds by displaying how the shadows and reflections from those buildings would appear at different times of day and how they would interact with the adjacent buildings. The same display can then be switched to show how air would flow around the buildings -- a kind of virtual wind tunnel.
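The shadow display rests on simple geometry, and a rough sketch can make that concrete. The Python below is an illustration only, not the workbench's actual code: the function name and the flat-ground assumption are hypothetical, and it covers only the length of a shadow for a given sun elevation, not its direction or any reflections.

```python
import math


def shadow_length(building_height_m: float, sun_elevation_deg: float) -> float:
    """Length of the shadow a vertical building casts on flat ground.

    Hypothetical helper for illustration; assumes level ground and a
    sun elevation measured in degrees above the horizon.
    """
    if sun_elevation_deg <= 0:
        # Sun at or below the horizon: no finite shadow.
        return math.inf
    if sun_elevation_deg >= 90:
        # Sun directly overhead: no shadow on the ground.
        return 0.0
    return building_height_m / math.tan(math.radians(sun_elevation_deg))
```

With the sun 45 degrees above the horizon, a 30-meter building casts a shadow of about 30 meters; as the sun drops toward the horizon, the shadow grows without limit.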

In this way, it becomes easy to see the impact of changes in the orientation or shape of buildings on the resulting play of light and wind patterns, without having to build detailed models for each possibility. Over the years, Ishii and his students have created a number of variations on this basic system, including sand and clay tables that allow for a three-dimensional version of such interactive displays, revealing how changes in the topography can also affect the behavior of light and wind.

Other students in his lab have developed similar concepts into systems in which manipulating physical objects on a surface can be used to work with such widely varied things as musical compositions, chemical formulas, business processes or electrical circuits. For example, wooden blocks may represent different electronic components, and when the blocks are arranged in a way that completes a circuit, they behave accordingly -- with projected images causing a simulated motor to begin turning or a bulb to light up.
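The circuit example comes down to checking whether the blocks on the table form a closed loop. The sketch below is one way that check could work, under stated assumptions: the `touching` map, the block names and the restriction to a single series loop are all hypothetical, and nothing here reflects how the lab's software is actually written.

```python
def powered_blocks(touching: dict[str, set[str]], source: str = "battery") -> set[str]:
    """Return the blocks on a closed series loop through the source.

    `touching` maps each block to the blocks it is in contact with
    (an assumed representation). Returns an empty set if the loop is open.
    """
    neighbors = touching.get(source, set())
    if len(neighbors) != 2:
        return set()  # the source must connect on both sides
    prev, current = source, sorted(neighbors)[0]
    loop = {source}
    while current != source:
        if current in loop:
            return set()  # came back to a block other than the source
        loop.add(current)
        onward = touching.get(current, set()) - {prev}
        if len(onward) != 1:
            return set()  # dead end or branch: not a simple series loop
        prev, current = current, next(iter(onward))
    return loop


closed = {
    "battery": {"wire", "bulb"},
    "wire": {"battery", "motor"},
    "motor": {"wire", "bulb"},
    "bulb": {"motor", "battery"},
}
lit = powered_blocks(closed)  # every block is on the loop, so the bulb lights
```

In this simplified model, pulling any one block away from its neighbor opens the loop, and the function returns an empty set: the projected motor stops and the bulb goes dark.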

'Driven by art'

For Ishii, the underlying concepts that guide all these projects are more significant than the specific implementations. "For us, the most important thing is the vision, the philosophy, the principle," Ishii says. "I've never been driven by science or engineering. I'm driven by art. Technology gets obsolete in a year, and applications get obsolete in 10 years. But vision driven by art survives beyond our lifespan."

Hiroshi Ishii with one of his early projects, bottles that play music when their stoppers are removed. Photo / Donna Coveney

Part of that vision is Ishii's sense that aesthetic qualities matter. For him, it's not just about making something that works; in his careful attention to materials and design, he makes it clear that he believes the experience of interacting with devices should be pleasing, not just functional.

Already, Ishii's vision has had a broad influence. His first doctoral student, John Underkoffler '88, SM '91, PhD '99, calls Ishii "a luminous dynamo" who has been "father, mother and obstetrician to a whole new field" of computer interfaces. The key point, he says, was Ishii's "recognition that it's not about the electronic technology, but about the physicality, the human-centered aspects" of working with that technology.

In his years at the Media Lab, Ishii's basic vision has played out in a variety of experiments by him and his students. Perhaps the most widely known is a system for controlling a computer display with three-dimensional gestures using both hands -- a version of which was featured in the 2002 movie Minority Report. To prepare for that movie's computer-interaction scenes, actor Tom Cruise and the movie's special effects team worked closely with Underkoffler.

At the time, the technology was just a vision of what could be. Underkoffler coached Cruise in how to gesture in front of a blank screen, to which the computer graphics were added later. But in the years since then, the vision has gradually moved closer to becoming reality. Underkoffler, working with a startup company called Oblong Industries in California, has developed the gesture-controlled computer interface into a product called g-speak. Students in Ishii's group are actively working with that interface in the Media Lab, exploring its potential and adding to its capabilities.

"Everybody thought this movie was science fiction," Ishii says, "but it's not fiction, it's fact. The future is now. It came from the Media Lab, it went to the movie, and now it's reality."

From fantasy to reality

Just by moving one's hands, an image can be moved from one wall to another, or onto the tabletop. The system responds not just to hands moving from side to side or up and down, but forward and back as well, and it can also respond to multiple users simultaneously.

How would you use it? Say you've just taken hundreds of digital pictures and you want to select the best ones. You stand in front of the display looking at a pile of photos, and just by moving your hands you push them around, sort them into piles, and put the ones you choose into a box in the corner. Others can join the process, sorting one pile while you work with another. When dealing with large amounts of data and collaborating with others, this can be faster and easier than a traditional mouse-based point-and-click process.

That's not the only system from the Tangible Media Group that has spawned its own startup company. A simpler technology that also could change the ways people interact with information led to a company called Ambient Devices, which produces displays that continuously show anything from weather forecasts to the performance of the stock market or the use of energy in a home. One of these devices is a frosted-glass globe that glows in color, with the hue changing according to the information being displayed (such as red when the stock market is going down, green when it's going up).
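The globe's behavior amounts to a mapping from a number to a color. A minimal sketch of that idea follows; the function name, the threshold parameter and the amber color for a flat market are assumptions made for illustration, not details of Ambient Devices' product.

```python
def orb_color(percent_change: float, threshold: float = 0.0) -> str:
    """Map a market move to a glow color: red when down, green when up.

    Hypothetical helper; the neutral "amber" color is an assumption,
    since the article names only red and green.
    """
    if percent_change > threshold:
        return "green"
    if percent_change < -threshold:
        return "red"
    return "amber"
```

A nonzero threshold would keep the globe from flickering between colors on tiny moves, which suits a display meant to sit quietly in the background.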

Ishii explains that these devices are designed to be part of the background of a room, unlike computer displays that are intended to be highly interactive. He compares them to an old-fashioned clock on the wall -- always "on," little noticed, but capable of conveying useful information almost instantaneously at a glance.

Ben Resner SM '01, who started Ambient Devices and calls Ishii the "intellectual founder" of the company, says he is "a true teacher." Resner adds that "the thing about Hiroshi that really impresses me, that I didn't notice until later" after leaving the Lab, was his "ability to find that nugget of newness in every student's work."

And that's the key thing. Ultimately, the influence Ishii hopes to have on the world is not measured just by the devices he and his students create -- the interactive tables and display walls and glowing orbs. It's what happens inside the minds of his students and those influenced by his work. "Inspiring people, firing up the imagination," that's what matters, he says. "We try to defy gravity, to make things more engaging, more exciting."

Provided by Massachusetts Institute of Technology