Credit: Eindhoven University of Technology

An international team of scientists from Eindhoven University of Technology, the University of Texas at Austin, and the University of Derby has developed a revolutionary method that quadratically accelerates artificial intelligence (AI) training algorithms. This gives full AI capability to inexpensive computers and would make it possible within one to two years for supercomputers to use artificial neural networks that quadratically exceed the capabilities of today's networks. The scientists presented their method on June 19 in the journal Nature Communications.

Artificial neural networks (ANNs) are at the very heart of the AI revolution that is shaping every aspect of society and technology. But the ANNs that we have been able to handle so far are nowhere near solving very complex problems. The very latest supercomputers would struggle with a 16-million-neuron network (just about the size of a frog brain), while it would take a powerful desktop computer more than a dozen days to train a mere 100,000-neuron network.

Personalized medicine

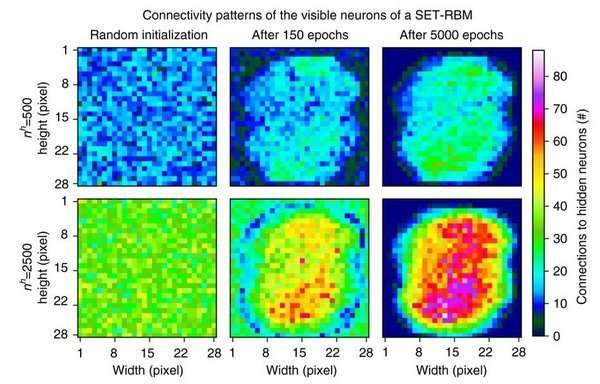

The proposed method, dubbed Sparse Evolutionary Training (SET), takes inspiration from biological networks, and in particular from neural networks, which owe their efficiency to three simple features: they have relatively few connections (sparsity), few hubs (scale-freeness) and short paths (small-worldness). The work reported in Nature Communications demonstrates the benefits of moving away from fully connected ANNs (as used in common AI) by introducing a new training procedure that starts from a random, sparse network and iteratively evolves it into a scale-free system. At each step, the weaker connections are eliminated and new links are added at random, similar to a biological process known as synaptic shrinking.
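The prune-and-regrow step described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names, the dictionary representation of a layer, the `prune_fraction` parameter, and the choice to measure a connection's weakness by the absolute value of its weight are all hypothetical, and the gradient-based training that would run between evolution steps is left out.

```python
import random


def init_sparse_layer(n_in, n_out, n_links, rng):
    """Create a random sparse layer as {(input, output): weight}.

    Only n_links of the n_in * n_out possible connections exist.
    """
    all_pairs = [(i, j) for i in range(n_in) for j in range(n_out)]
    chosen = rng.sample(all_pairs, n_links)
    return {pair: rng.gauss(0.0, 0.1) for pair in chosen}


def evolve_step(weights, n_in, n_out, prune_fraction, rng):
    """Drop the weakest links, then add the same number of random new ones.

    Assumption: "weakest" means smallest absolute weight.
    """
    n_prune = int(prune_fraction * len(weights))

    # Rank existing connections from weakest to strongest and keep the rest.
    by_strength = sorted(weights, key=lambda pair: abs(weights[pair]))
    survivors = {pair: weights[pair] for pair in by_strength[n_prune:]}

    # Any currently unconnected position may receive a new link
    # (this includes positions that were just pruned).
    free = [
        (i, j)
        for i in range(n_in)
        for j in range(n_out)
        if (i, j) not in survivors
    ]
    for pair in rng.sample(free, n_prune):
        survivors[pair] = rng.gauss(0.0, 0.1)
    return survivors


# Usage: a layer with 8 inputs and 6 outputs that keeps 12 of 48 possible links.
rng = random.Random(0)
layer = init_sparse_layer(8, 6, 12, rng)
layer = evolve_step(layer, 8, 6, prune_fraction=0.25, rng=rng)
print(len(layer), "connections after one evolution step")
```

The number of connections stays constant from step to step, so the network remains sparse while its wiring changes.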

The striking acceleration effect of this method has enormous significance, as it will allow the application of AI to problems that are not currently tractable due to their vast number of parameters. Examples include affordable personalized medicine and complex systems. In complex, rapidly changing environments such as smart grids and social systems, where frequent on-the-fly retraining of an ANN is required, improvements in learning speed (without compromising accuracy) are essential. In addition, because such training can be achieved with limited computational resources, the proposed SET method will be preferred for the embedded intelligence of the many distributed devices connected to a larger system.
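To see why the number of parameters matters so much, it helps to count connections. A fully connected layer links every input neuron to every output neuron, so its size grows with the product of the two layer sizes. The sketch below compares that with a sparse layer in which, as an illustrative assumption not taken from the article, each neuron keeps a fixed average of 20 links; the function names and the `links_per_neuron` parameter are likewise hypothetical.

```python
def dense_links(n_in, n_out):
    """Connections in a fully connected layer: one per input/output pair."""
    return n_in * n_out


def sparse_links(n_in, n_out, links_per_neuron):
    """Connections in a sparse layer, assuming a fixed average per neuron."""
    return links_per_neuron * (n_in + n_out)


for n in (1_000, 10_000, 100_000):
    d = dense_links(n, n)
    s = sparse_links(n, n, links_per_neuron=20)
    print(f"{n:>7} neurons per layer: dense={d:,} sparse={s:,} ratio={d // s}x")
```

Under these assumptions the dense layer needs 25 times as many connections at 1,000 neurons per layer, 250 times as many at 10,000, and 2,500 times as many at 100,000: the dense count grows quadratically with layer size, the sparse count only linearly.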

Frog brain

Concretely, with SET any user can build an artificial neural network of up to 1 million neurons on their own laptop, whereas with state-of-the-art methods this was reserved for expensive computing clouds. This does not mean that the clouds are no longer useful. They are. Imagine what you can build on them with SET. Currently the largest artificial neural networks, built on supercomputers, are the size of a frog brain (about 16 million neurons). Once some technical challenges are overcome, SET may allow us to build, on the same supercomputers, artificial neural networks close to the size of the human brain (about 80 billion neurons).

Lead author Dr. Decebal Mocanu: "And, yes, we do need such extremely large networks. It was shown, for example, that artificial neural networks are good at detecting cancer from human genes. However, complete chromosomes are too large to fit in state-of-the-art artificial neural networks, but they could fit in an 80-billion-neuron network. This fact can hypothetically lead to better healthcare and affordable personalized medicine for all of us."

More information: Decebal Constantin Mocanu et al. Scalable training of artificial neural networks with adaptive sparse connectivity inspired by network science, Nature Communications (2018). DOI: 10.1038/s41467-018-04316-3

Journal information: Nature Communications

Provided by Eindhoven University of Technology