Credit: Disney Research

A team led by Disney Research, Zürich has developed a method to more efficiently render animated scenes that involve fog, smoke or other substances that affect the travel of light, significantly reducing the time necessary to produce high-quality images or animations without grain or noise.

The method, called joint importance sampling, helps identify which of the potential paths that light can take through a foggy or underwater scene are most likely to contribute to what the camera, and the viewer, ultimately sees. In this way, less time is wasted computing paths that aren't necessary to the final look of an animated sequence.

Wojciech Jarosz, a research scientist at Disney Research, Zürich, said that producing noise-free images of a complex scene can take minutes, hours or even days of computation. The new algorithms his team created reduced that time dramatically in their experiments, by factors of 10, 100 or even up to 1,000.

"Faster renderings allow our artists to focus on the creative process instead of waiting on the computer to finish," Jarosz said. "This leaves more time for them to create beautiful imagery that helps create an engaging story."

The researchers, including collaborators from Saarland University, Aarhus University, Université de Montréal and Charles University, Prague, will present their findings at the ACM SIGGRAPH Asia 2013 conference, November 19-22, in Hong Kong.

Light rays are deflected or scattered not only when they bounce off a solid object, but also as they pass through aerosols and liquids. The effect of clear air is negligible for rendering algorithms used to produce animated films, but realistically rendering fog, smoke, smog, rain, underwater scenes or even a glass of milk requires computational methods that account for these "participating media."

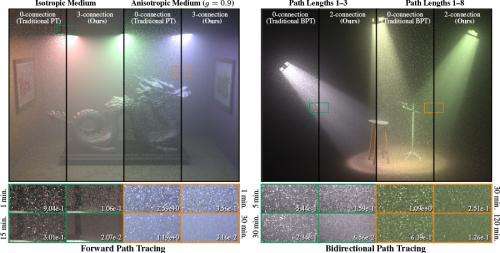

So-called Monte Carlo algorithms are increasingly being used to render such phenomena in animated films and special effects. These methods operate by analyzing a random sampling of possible paths that light might take through a scene and then averaging the results to create the overall effect. But Jarosz explained that not all paths are created equal. Some paths end up being blocked by an object or surface in the scene; in other cases, a light source may simply be too far from the camera to have much chance of being seen. Calculating those paths can be a waste of computing time or, worse, averaging them may introduce error, or noise, that creates unwanted effects in the animation.
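The averaging idea can be illustrated with a minimal sketch. This is a toy, not a renderer: the function `path_contribution` and its narrow window of "useful" paths are assumptions invented for illustration, standing in for the light a real path would carry to the camera.

```python
import random
import statistics

def path_contribution(x):
    """Hypothetical stand-in for the light carried by one path.

    Only paths whose parameter x lands in a narrow window reach the
    camera; every other path is 'blocked' and contributes nothing.
    """
    return 1.0 if 0.45 <= x <= 0.55 else 0.0

def monte_carlo_estimate(num_paths, rng):
    """Average the contribution of num_paths uniformly random paths."""
    total = 0.0
    for _ in range(num_paths):
        total += path_contribution(rng.random())
    return total / num_paths

def estimate_spread(num_paths, repeats=200, seed=0):
    """Repeat the estimate and report its mean and its spread (noise)."""
    rng = random.Random(seed)
    estimates = [monte_carlo_estimate(num_paths, rng) for _ in range(repeats)]
    return statistics.mean(estimates), statistics.stdev(estimates)

if __name__ == "__main__":
    for n in (10, 100, 1000):
        mean, spread = estimate_spread(n)
        print(f"{n:5d} paths: mean={mean:.4f} spread={spread:.4f}")
```

In this toy the exact answer is 0.1, since the window covers a tenth of the interval. Nine out of ten sampled paths contribute nothing, so the estimate is noisy when few paths are used, and the spread shrinks only slowly as more paths are averaged.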

Computer graphics researchers have tried various "importance sampling" techniques to increase the probability that the random light paths calculated will ultimately contribute to the final scene, keeping noise to a minimum. Some techniques trace the light from its source to the camera; others trace from the camera back to the source. Bidirectional techniques trace partial paths from both the camera and the source and then connect them. Unfortunately, even these sophisticated bidirectional techniques compute the light and camera portions of each path independently, without knowledge of each other, so the connected paths are unlikely to contribute strongly to the final image.
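A rough sketch of generic importance sampling, under toy assumptions, shows why steering samples toward useful paths lowers noise. The integrand `path_contribution` and the 80/20 mixture in `focused_sample` are hypothetical choices made for illustration; they are not taken from any production renderer or from the research described here. Each sample is divided by the probability density it was drawn with, which keeps the average correct.

```python
import random
import statistics

def path_contribution(x):
    """Hypothetical stand-in: only paths in a narrow window reach the camera."""
    return 1.0 if 0.45 <= x <= 0.55 else 0.0

def uniform_sample(rng):
    """Baseline: every path is equally likely. Returns (x, pdf)."""
    return rng.random(), 1.0

def focused_sample(rng):
    """Importance sampling: favor the region where paths tend to matter.

    With probability 0.8 draw from [0.4, 0.6]; otherwise draw from all
    of [0, 1], so no contributing path has zero chance of being picked.
    """
    if rng.random() < 0.8:
        x = rng.uniform(0.4, 0.6)
    else:
        x = rng.random()
    # Mixture density: 0.2 * 1 everywhere, plus 0.8 / 0.2 = 4 in the window.
    pdf = 0.2 + (4.0 if 0.4 <= x <= 0.6 else 0.0)
    return x, pdf

def estimate(sampler, num_paths, seed=0):
    """Return the estimate and the per-sample standard deviation (noise)."""
    rng = random.Random(seed)
    weighted = []
    for _ in range(num_paths):
        x, pdf = sampler(rng)
        weighted.append(path_contribution(x) / pdf)
    return statistics.mean(weighted), statistics.stdev(weighted)

if __name__ == "__main__":
    print("uniform:", estimate(uniform_sample, 20000))
    print("focused:", estimate(focused_sample, 20000))
```

Both samplers converge to the same value (0.1 in this toy), but the focused sampler does so with a noticeably smaller per-sample spread, which is the sense in which importance sampling reduces noise for a fixed number of paths.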

By contrast, the joint importance sampling method developed by the Disney Research team chooses the locations along the random paths with mutual knowledge of the camera and light source locations. This approach allows their method to create high-contribution paths more readily, increasing the efficiency of the rendering process.
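The difference between choosing path locations independently and choosing them with mutual knowledge can be sketched with a deliberately simplified toy. This is not the algorithm from the paper: the one-dimensional "vertices", the `connection` term, and the constants `BAND` and `REACH` are all assumptions made up for illustration. The only point is that drawing the second location conditionally on the first produces connectable pairs far more often.

```python
import random
import statistics

BAND = 0.05   # hypothetical: two vertices connect only if closer than this
REACH = 0.1   # hypothetical: half-width of the conditional sampling window

def connection(x, y):
    """Toy contribution of a path joining light vertex x and camera vertex y."""
    return 1.0 if abs(x - y) < BAND else 0.0

def independent_pair(rng):
    """Pick both vertices without knowledge of each other. Returns (x, y, pdf)."""
    return rng.random(), rng.random(), 1.0

def joint_pair(rng):
    """Pick x, then pick y from a window around x. Returns (x, y, pdf)."""
    x = rng.random()
    lo, hi = max(0.0, x - REACH), min(1.0, x + REACH)
    y = rng.uniform(lo, hi)
    # Joint density = pdf(x) * pdf(y | x) = 1 * 1 / (hi - lo).
    return x, y, 1.0 / (hi - lo)

def estimate(sampler, num_paths, seed=0):
    """Return the estimate and the per-sample standard deviation (noise)."""
    rng = random.Random(seed)
    weighted = []
    for _ in range(num_paths):
        x, y, pdf = sampler(rng)
        weighted.append(connection(x, y) / pdf)
    return statistics.mean(weighted), statistics.stdev(weighted)

if __name__ == "__main__":
    print("independent:", estimate(independent_pair, 20000))
    print("joint:      ", estimate(joint_pair, 20000))
```

In this toy the exact answer is 1 - 0.95² = 0.0975. Independent sampling produces a connectable pair less than one time in ten, while the conditional sampler does so roughly half the time, so its estimate settles with much less noise for the same number of paths.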

The researchers found that their algorithms significantly reduced noise and improved rendering performance. "There's always going to be noise, but with our method, we can reduce the noise much more quickly, which can translate into savings of time, computer processing and ultimately money," Jarosz said.

More information: Iliyan Georgiev, Jaroslav Křivánek, Toshiya Hachisuka, Derek Nowrouzezahrai, Wojciech Jarosz. Joint Importance Sampling of Low-Order Volumetric Scattering. ACM Transactions on Graphics (Proceedings of ACM SIGGRAPH Asia 2013), 32(6), November 2013.

Provided by Disney Research