Big data and synthetic chemistry could fight climate change and pollution

Scientists at the University of South Carolina and Columbia University have developed a faster way to design and make gas-filtering membranes that could cut greenhouse gas emissions and reduce pollution.

Their new method, published today in Science Advances, mixes machine learning with synthetic chemistry to design and develop new gas-separation membranes more quickly. Recent experiments applying this approach resulted in new materials that separate gases better than any other known filtering membranes.

The discovery could revolutionize the way new materials are designed and created, said Brian Benicewicz, a SmartState chemistry professor at the University of South Carolina.

"It removes the guesswork and the old trial-and-error work, which is very ineffective," Benicewicz said. "You don't have to make hundreds of different materials and test them. Now you're letting the machine learn. It can narrow your search."

Plastic films, or membranes, are often used to filter gases. Benicewicz explained that these membranes face a tradeoff between selectivity and permeability: a material that lets one gas pass through easily is unlikely to block molecules of another gas. "We're talking about some really small molecules," Benicewicz said. "The size difference is almost imperceptible. If you want a lot of permeability, you're not going to get a lot of selectivity."
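To make the tradeoff concrete, here is a minimal Python sketch. It assumes the common convention that ideal selectivity is the ratio of two gas permeabilities, and that the empirical limit follows a Robeson-style power law, P = k * selectivity^n with n negative. The constants `K` and `N` and both membranes are illustrative placeholders, not values from the study.

```python
# Illustrative sketch of the permeability/selectivity tradeoff.
# K, N and the membrane numbers below are placeholders, not measured data.

def ideal_selectivity(perm_fast, perm_slow):
    """Ratio of the faster gas's permeability to the slower gas's."""
    return perm_fast / perm_slow

def upper_bound_permeability(selectivity, k, n):
    """Robeson-style empirical limit: P = k * selectivity**n, with n < 0."""
    return k * selectivity ** n

def beats_bound(perm_co2, perm_ch4, k, n):
    """True if a membrane sits above the assumed tradeoff line."""
    alpha = ideal_selectivity(perm_co2, perm_ch4)
    return perm_co2 > upper_bound_permeability(alpha, k, n)

K, N = 1.0e5, -2.0  # hypothetical bound parameters

# Hypothetical membranes: (CO2 permeability, CH4 permeability), same units.
typical = (100.0, 5.0)      # selectivity 20, below the assumed line
exceptional = (400.0, 10.0)  # selectivity 40, above the assumed line

for name, (p_co2, p_ch4) in [("typical", typical), ("exceptional", exceptional)]:
    print(name, ideal_selectivity(p_co2, p_ch4), beats_bound(p_co2, p_ch4, K, N))
```

Because the exponent is negative, the permeability allowed by the line drops as selectivity rises, which is the tradeoff Benicewicz describes.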

Benicewicz and his collaborators at Columbia University wanted to see whether big data could help design a more effective membrane.

The team at Columbia University created a machine learning algorithm that analyzed the chemical structure and effectiveness of existing membranes used for separating carbon dioxide from methane. Once the algorithm could accurately predict the effectiveness of a given membrane, they turned the question around: What chemical structure would make the ideal gas separation membrane?

Sanat K. Kumar, the Bykhovsky Professor of Chemical Engineering at Columbia, compared the approach to Netflix's method for recommending movies. By examining what a viewer has watched and liked before, Netflix determines features that the viewer enjoys and then finds videos to recommend. In the same way, his algorithm analyzed the chemical structures of existing membranes and determined which structures would be more effective.
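The recommendation analogy can be sketched in a few lines of Python. This is not the Columbia team's algorithm; it swaps in a simple nearest-neighbor predictor with Tanimoto similarity to show the general idea of learning from known membranes and then ranking hypothetical ones. The structural features, performance scores, and candidate names are all invented for illustration.

```python
# Hypothetical sketch: nearest-neighbor screening of candidate polymers.
# Not the published method; all features and scores are invented.

def tanimoto(a, b):
    """Tanimoto similarity between two sets of structural features."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)

def predict(fingerprint, known, k=2):
    """Similarity-weighted average score of the k most similar known membranes."""
    nearest = sorted(
        ((tanimoto(fingerprint, fp), score) for fp, score in known),
        key=lambda pair: pair[0],
        reverse=True,
    )[:k]
    total = sum(sim for sim, _ in nearest)
    if total == 0:
        # Nothing similar is known: fall back to the plain average.
        return sum(score for _, score in known) / len(known)
    return sum(sim * score for sim, score in nearest) / total

def rank_candidates(candidates, known, top_n=2):
    """Predict a score for every candidate and return the best top_n."""
    predictions = {name: predict(fp, known) for name, fp in candidates.items()}
    return sorted(predictions.items(), key=lambda item: item[1], reverse=True)[:top_n]

# "Viewing history": known membranes as (feature set, performance score).
KNOWN = [
    (frozenset({"imide", "aromatic_ring", "CF3"}), 45.0),
    (frozenset({"imide", "aromatic_ring"}), 30.0),
    (frozenset({"ether", "aliphatic_chain"}), 8.0),
    (frozenset({"ether", "aromatic_ring"}), 15.0),
]

# "Unwatched titles": hypothetical structures to screen.
CANDIDATES = {
    "candidate_A": frozenset({"imide", "aromatic_ring", "CF3", "ether"}),
    "candidate_B": frozenset({"ether", "aliphatic_chain", "aromatic_ring"}),
    "candidate_C": frozenset({"aliphatic_chain"}),
}

for name, score in rank_candidates(CANDIDATES, KNOWN):
    print(name, round(score, 1))
```

A real screening pipeline would use far richer chemical descriptors and a model validated against measured data; the point here is only the workflow of predicting first and then ranking.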

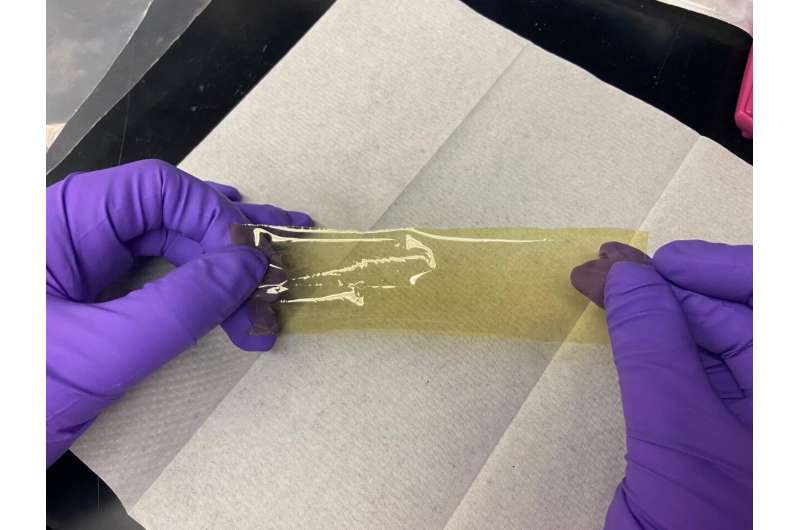

The computer produced a list of 100 hypothetical materials that might surpass current limits. Benicewicz, who leads a synthetic chemistry research group, identified two of the proposed structures that could plausibly be made. Laura Murdock, a UofSC Ph.D. student in chemistry, made the prescribed polymers and cast them into thin films.

When the membranes were tested, their effectiveness was close to the computer's prediction and well above presumed limits.

"Their performance was very good—much better than what had been previously made," Murdock said. "And it was pretty easy. It has the potential for commercial use."

Separating carbon dioxide from methane has an immediate application in the natural gas industry: CO2 must be removed from natural gas to prevent corrosion in pipelines. But Murdock said that using big data to take the guesswork out of the process raises another question: "What other polymer materials can we apply machine learning to and create better materials for all kinds of applications?"

Benicewicz said machine learning could help scientists design new membranes for separating greenhouse gases from coal, which could help slow climate change.

"This work thus points to a new way of materials design," Kumar said. "Rather than test all the materials that exist for a particular application, you look for the part of a material that best serves the need that you have. When you combine the very best materials then you have a shot at designing a better material."

More information: Designing exceptional gas-separation polymer membranes using machine learning, Science Advances (2020). DOI: 10.1126/sciadv.aaz4301. advances.sciencemag.org/content/6/20/eaaz4301

Journal information: Science Advances

Provided by University of South Carolina