Training with states of matter search algorithm enables neuron model pruning

Artificial neural networks are machine learning systems composed of a large number of connected nodes called artificial neurons. Like the neurons in a biological brain, these artificial neurons are the basic units that perform neural computations and solve problems. Advances in neurobiology have shown the important role that dendrites play in neural computation, and this has led to the development of artificial neuron models based on dendritic structures.

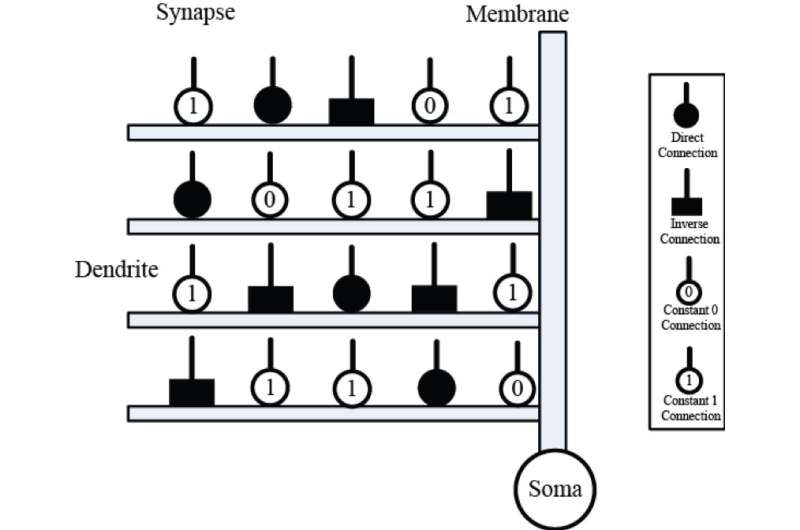

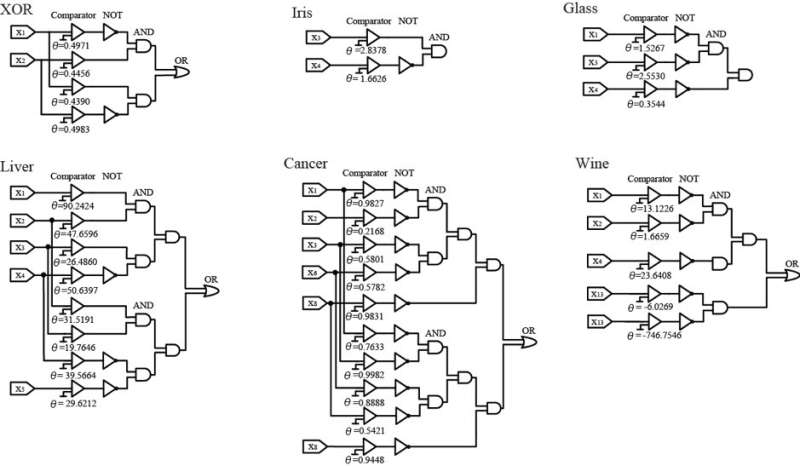

The recently developed approximate logic neuron model (ALNM) is a single-neuron model with a dynamic dendritic structure. During training on a specific problem, the ALNM can use a neural pruning function to eliminate unnecessary dendritic branches and synapses. The resulting simplified model can then be implemented as a hardware logic circuit.
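The article does not give the ALNM's equations. The sketch below assumes the layered structure commonly described for dendritic neuron models of this kind: a sigmoid synaptic layer, dendrite branches that multiply their synaptic outputs, a membrane that sums the branches, and a sigmoid soma. The function names, the steepness values `k` and `k_soma`, and the soma threshold `theta_soma` are hypothetical, illustrative assumptions, not the authors' settings.

```python
import math


def sigmoid(z: float, k: float) -> float:
    """Numerically stable logistic function with steepness k."""
    t = k * z
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)


def alnm_output(x, w, theta, k=5.0, k_soma=5.0, theta_soma=0.5):
    """Forward pass of an ALNM-style neuron (hypothetical sketch).

    x:        N inputs, assumed normalized to [0, 1]
    w, theta: M x N nested lists, one row per dendrite branch
    k, k_soma, theta_soma: assumed illustrative constants
    """
    membrane = 0.0
    for w_row, t_row in zip(w, theta):
        branch = 1.0
        for xi, wij, tij in zip(x, w_row, t_row):
            # Synaptic layer: sigmoid of (w * x - theta) for each input
            branch *= sigmoid(wij * xi - tij, k)
        # Dendritic layer multiplies along a branch; membrane sums branches
        membrane += branch
    # Soma: a second sigmoid thresholds the membrane potential
    return sigmoid(membrane - theta_soma, k_soma)


if __name__ == "__main__":
    # One branch: direct synapse on x1, inverse synapse on x2 (x1 AND NOT x2)
    w, theta = [[10.0, -10.0]], [[5.0, -5.0]]
    for x in ([0.0, 0.0], [1.0, 0.0], [1.0, 1.0]):
        print(x, round(alnm_output(x, w, theta), 3))
```

With these example weights the soma output is high only when x1 is 1 and x2 is 0, which hints at why a trained neuron of this shape can later be read as a logic function.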

However, the well-known backpropagation (BP) algorithm that was used to train the ALNM limited the neuron model's computational capacity. "The BP algorithm was sensitive to initial values and could easily be trapped into local minima," says corresponding author Yuki Todo of Kanazawa University's Faculty of Electrical and Computer Engineering. "We therefore evaluated the capabilities of several heuristic optimization methods for training of the ALNM."

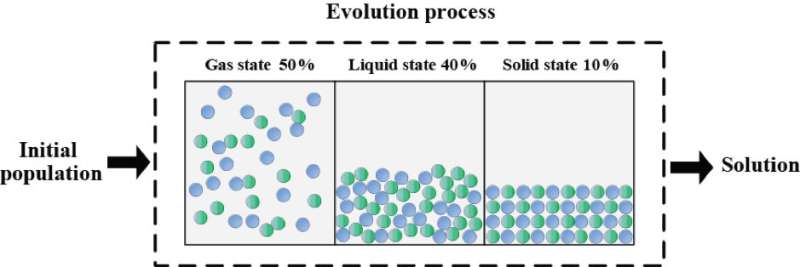

After a series of experiments, the states of matter search (SMS) algorithm was selected as the most appropriate training method for the ALNM. Six benchmark classification problems were then used to evaluate the ALNM's optimization performance with SMS as the learning algorithm. Compared with BP and the other heuristic algorithms, SMS gave better training performance in terms of both accuracy and convergence speed.
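The article does not describe how SMS works internally. SMS is a population-based metaheuristic modeled on the gas, liquid and solid states of matter: candidate solutions ("molecules") start with large moves and frequent random resets, then settle into smaller moves around the best solution found. The sketch below is a heavily simplified SMS-style search under that reading; its update rules, phase shares and parameter values (`rho`, `beta`, `alpha`, `H`) are assumptions, not the exact formulation or settings used by the authors, and `sms_minimize` is a hypothetical name.

```python
import math
import random

# (share of iterations, rho, beta, alpha, H) for the gas, liquid and solid
# phases. These numbers are illustrative assumptions, not the authors' settings.
PHASES = [
    (0.5, 0.85, 0.8, 0.8, 0.9),  # gas: large moves, frequent random resets
    (0.4, 0.35, 0.4, 0.2, 0.2),  # liquid: moderate moves
    (0.1, 0.10, 0.1, 0.0, 0.0),  # solid: small moves, no collisions or resets
]


def sms_minimize(f, low, high, n_pop=20, n_iter=200, seed=0):
    """Simplified SMS-style minimizer (sketch). Returns (best_x, best_f)."""
    rng = random.Random(seed)
    dim = len(low)
    span = [h - l for l, h in zip(low, high)]
    mean_span = sum(span) / dim
    pos = [[rng.uniform(l, h) for l, h in zip(low, high)] for _ in range(n_pop)]
    dirs = [[rng.uniform(-1.0, 1.0) for _ in range(dim)] for _ in range(n_pop)]
    fit = [f(p) for p in pos]
    b = min(range(n_pop), key=fit.__getitem__)
    best, best_f = pos[b][:], fit[b]

    k = 0
    for share, rho, beta, alpha, h_prob in PHASES:
        radius = alpha * mean_span  # collision radius for this phase
        for _ in range(round(share * n_iter)):
            k += 1
            decay = max(0.0, 1.0 - k / n_iter)
            for i in range(n_pop):
                # Direction: decaying inertia plus a unit pull toward the best
                diff = [bj - pj for bj, pj in zip(best, pos[i])]
                norm = math.sqrt(sum(d * d for d in diff)) or 1.0
                dirs[i] = [0.5 * decay * d + a / norm for d, a in zip(dirs[i], diff)]
                for j in range(dim):
                    # Phase-scaled random step, clipped to the search bounds
                    step = dirs[i][j] * rng.random() * rho * beta * span[j]
                    pos[i][j] = min(max(pos[i][j] + step, low[j]), high[j])
            # Collision: molecules closer than the radius swap directions
            for i in range(n_pop):
                for q in range(i + 1, n_pop):
                    if math.dist(pos[i], pos[q]) < radius:
                        dirs[i], dirs[q] = dirs[q], dirs[i]
            # Random behaviour: reset each coordinate with probability H
            for i in range(n_pop):
                for j in range(dim):
                    if rng.random() < h_prob:
                        pos[i][j] = rng.uniform(low[j], high[j])
                fit[i] = f(pos[i])
                if fit[i] < best_f:
                    best, best_f = pos[i][:], fit[i]
    return best, best_f
```

To train a neuron this way, one would (as an assumption) flatten its weights and thresholds into a single vector, give each entry search bounds, and pass the training error as `f`. Because SMS only needs function values, it does not depend on gradients or on a single starting point, which is the weakness of BP that the quote above describes.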

"A classifier based on the ALNM and SMS was also compared with several other popular classification methods," states Associate Professor Todo, "and the statistical results verified this classifier's superiority on these benchmark problems."

During training, the ALNM simplified its neural structure through synaptic and dendritic pruning, and the simplified structures were then replaced with logic circuits. These circuits also achieved satisfactory classification accuracy on each of the benchmark problems. Because such logic circuits are easy to implement in hardware, the ALNM trained with SMS could be applied in future research to increasingly complex, high-dimensional real-world problems.
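The pruning-to-circuit step can be sketched as follows, assuming inputs normalized to [0, 1] and a four-way classification of each synapse by its weight and threshold (direct, inverse, constant 1, constant 0) as used in related dendritic neuron model work. A constant-1 synapse multiplies its branch by roughly 1, so it can be dropped (synaptic pruning); a constant-0 synapse drives the branch product to roughly 0, so the whole branch can be dropped (dendritic pruning). Surviving synapses become comparators (with a NOT for inverse ones), each branch becomes an AND term, and the membrane becomes an OR. The helper names and the text output format are hypothetical.

```python
def synapse_state(w: float, theta: float) -> str:
    """Classify a synapse, assuming inputs are normalized to [0, 1].

    Hypothetical helper; boundary cases (w or theta == 0, w == theta)
    fall through to "const0" as a simplifying assumption.
    """
    if 0 < theta < w:
        return "direct"   # output rises when x > theta / w
    if w < theta < 0:
        return "inverse"  # output rises when x < theta / w
    if theta < 0 < w or theta < w < 0:
        return "const1"   # w * x - theta > 0 for every x in [0, 1]
    return "const0"       # 0 < w < theta or w < 0 < theta (plus boundary cases)


def prune_to_logic(w: list[list[float]], theta: list[list[float]],
                   names: list[str]) -> str:
    """Turn trained ALNM-style parameters into a logic expression (sketch)."""
    terms = []
    for w_row, t_row in zip(w, theta):
        literals = []
        dead = False
        for name, wij, tij in zip(names, w_row, t_row):
            state = synapse_state(wij, tij)
            if state == "const0":
                dead = True  # dendritic pruning: a ~0 factor zeroes the branch
                break
            if state == "const1":
                continue  # synaptic pruning: a ~1 factor changes nothing
            cut = tij / wij  # comparator threshold on this input
            if state == "direct":
                literals.append(f"({name} > {cut:.2f})")
            else:
                literals.append(f"NOT({name} > {cut:.2f})")
        if not dead:
            # Each surviving branch becomes an AND of its comparators
            terms.append(" AND ".join(literals) if literals else "1")
    # The membrane layer becomes an OR over surviving branches
    return " OR ".join(terms) if terms else "0"
```

For example, with two branches where the second contains a constant-0 synapse, only the first branch survives, and its constant-1 synapse disappears from the expression.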

More information: Junkai Ji et al, Approximate logic neuron model trained by states of matter search algorithm, Knowledge-Based Systems (2018). DOI: 10.1016/j.knosys.2018.08.020

Provided by Kanazawa University