January 19, 2018 feature

Information engine operates with nearly perfect efficiency

Physicists have experimentally demonstrated an information engine, a device that converts information into work, with an efficiency that exceeds the limit set by the conventional second law of thermodynamics. Instead, the engine's efficiency is bounded by a recently proposed generalized second law of thermodynamics, and it is the first information engine to approach this new bound.

The results demonstrate the feasibility of realizing a "lossless" information engine, so called because virtually none of the available information is lost and almost all of it is converted into work. They also experimentally validate the sharpness of the bound set by the generalized second law.

The physicists, Govind Paneru, Dong Yun Lee, Tsvi Tlusty, and Hyuk Kyu Pak at the Institute for Basic Science in Ulsan, South Korea (Tlusty and Pak are also with the Ulsan National Institute of Science and Technology), have published a paper on the lossless information engine in a recent issue of Physical Review Letters.

"Thinking about engines has driven the progress of thermodynamics and statistical mechanics ever since Carnot set a limit on the efficiency of heat engines in 1824," Pak told Phys.org. "Adding information processing in the form of 'demons' set new limitations, and it was essential to verify the new limits in experiment."

Traditionally, the maximum efficiency with which an engine can convert energy into work is bounded by the second law of thermodynamics. In the past decade, however, experiments have shown that an engine's efficiency can surpass the limit set by the conventional second law if the engine can gain information from its surroundings, since it can then convert that information into work. These information engines (or "Maxwell's demons," named after the first conception of such a device) are made possible by a fundamental connection between information and thermodynamics that scientists are still trying to fully understand.

Naturally, the recent experimental demonstrations of information engines have raised the question of whether there is an upper bound on the efficiency with which an information engine can convert information into work. To address this question, researchers have recently derived a generalized second law of thermodynamics, which accounts for both energy and information being converted into work. However, no experimental information engine has approached the predicted bounds, until now.

The generalized second law of thermodynamics states that the work extracted from an information engine is limited by the sum of two components: the first is the free energy difference between the final and initial states (this is the sole limit placed on conventional engines by the conventional second law), and the second is the amount of available information (this part sets an upper bound on the extra work that can be extracted from information).
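In symbols, the statement above is commonly written as the inequality below. This is a sketch under stated assumptions rather than the paper's exact notation: the symbols are chosen here for illustration, with the extracted work written as W_ext, the free energy change defined as final minus initial (so a decrease in free energy raises the bound), and the information I measured in natural units and converted to energy by the thermal factor k_B T.

```latex
% Generalized second law (notation and sign convention assumed for illustration)
\langle W_{\mathrm{ext}} \rangle \;\le\; -\Delta F \;+\; k_B T \,\langle I \rangle ,
\qquad \Delta F = F_{\mathrm{final}} - F_{\mathrm{initial}}

% With no free energy change, the information-to-work efficiency is at most 1
\eta \;=\; \frac{\langle W_{\mathrm{ext}} \rangle}{k_B T \,\langle I \rangle} \;\le\; 1
\qquad (\Delta F = 0)
```

With the information term set to zero, the inequality reduces to the conventional second-law limit on extracted work.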

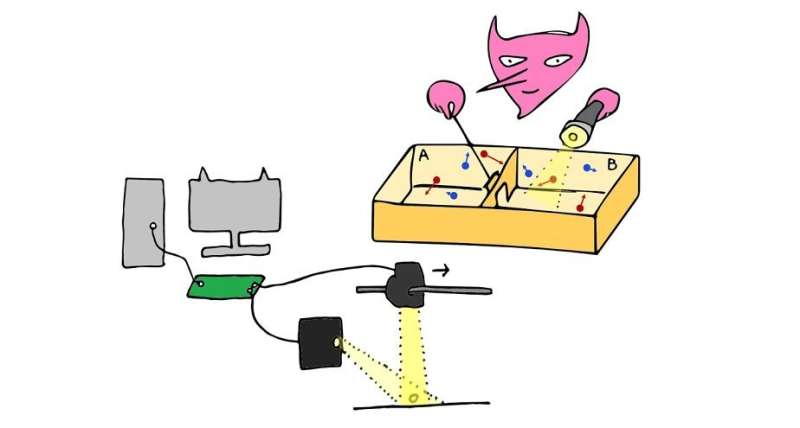

To achieve the maximum efficiency set by the generalized second law, the researchers in the new study designed and implemented an information engine made of a particle trapped by light at room temperature. Random thermal fluctuations cause the tiny particle to move slightly due to Brownian motion, and a photodiode tracks the particle's changing position with a spatial accuracy of 1 nanometer. If the particle moves more than a certain distance away from its starting point in a certain direction, the light trap quickly shifts in the direction of the particle. This process repeats, so that over time the engine transports the particle in a desired direction simply by extracting work from the information it obtains from the system's random thermal fluctuations (the free energy component here is zero, so it does not contribute to the work extracted).
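The feedback cycle described above can be illustrated with a minimal numerical sketch. It is not the researchers' code or analysis, and it rests on several assumptions: the trap is modeled as a harmonic potential, energies are in units of k_B T and lengths in units of the particle's thermal position spread, the particle is assumed to relax fully to equilibrium between measurements, and the trap shift is set to twice the threshold so that every shift lowers the particle's potential energy. The function names and parameter values are hypothetical.

```python
import math
import random


def simulate_engine(threshold, shift, cycles, seed=0):
    """Simulate repeated measure-and-shift cycles of a trapped Brownian particle.

    Units (assumed): k_B*T = 1 and trap stiffness = 1, so the particle's
    equilibrium position relative to the trap center is a standard normal.
    Returns (mean extracted work per cycle, total trap displacement).
    """
    rng = random.Random(seed)
    total_work = 0.0
    trap_center = 0.0
    for _ in range(cycles):
        # Measure the particle position relative to the trap center.
        # Assumption: the particle has fully re-equilibrated since the last cycle.
        x = rng.gauss(0.0, 1.0)
        if x >= threshold:
            # Instantaneous shift of the trap toward the particle.
            # Extracted work = potential energy before minus after the shift.
            total_work += 0.5 * x**2 - 0.5 * (x - shift) ** 2
            trap_center += shift
    return total_work / cycles, trap_center


def analytic_mean_work(threshold):
    """Mean work per cycle for shift = 2 * threshold under the same assumptions."""
    pdf = math.exp(-0.5 * threshold**2) / math.sqrt(2.0 * math.pi)
    tail = 0.5 * math.erfc(threshold / math.sqrt(2.0))
    # Work per triggered cycle is 2*threshold*(x - threshold), averaged over x.
    return 2.0 * threshold * (pdf - threshold * tail)


if __name__ == "__main__":
    x_m = 0.6  # hypothetical threshold, in units of the thermal position spread
    work, displacement = simulate_engine(x_m, 2.0 * x_m, cycles=200_000, seed=1)
    print(f"simulated mean work per cycle (k_B*T): {work:.4f}")
    print(f"analytic mean work per cycle (k_B*T):  {analytic_mean_work(x_m):.4f}")
    print(f"net trap displacement: {displacement:.1f}")
```

In this toy model the trap only ever moves in one direction and each shift extracts a non-negative amount of work, which mirrors how the engine described above rectifies random thermal motion into directed transport. The sketch does not compute the information term of the generalized second law, so it says nothing about how close this simplified protocol comes to the bound.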

One of the most important features of this system is its nearly instantaneous feedback response: the trap shifts in just a fraction of a millisecond, giving the particle no time to move further and dissipate energy. As a result, almost none of the energy gained by the shift is lost to heat, and nearly all of it is converted into work. Because practically no information is lost, the information-to-energy conversion of this process reaches approximately 98.5% of the bound set by the generalized second law. The results lend support to this bound and illustrate the possibility of extracting the maximum possible amount of work from information.

Besides their fundamental implications, the results may also lead to practical applications, which the researchers plan to investigate in the future.

"Both nanotechnology and living systems operate at scales where the interplay between thermal noise and information processing is significant," Pak said. "One may think about engineered systems where information is used to control molecular processes and drive them in the right direction. One possibility is to create hybrids of biological systems and engineered ones, even in the living cell."

More information: Govind Paneru, Dong Yun Lee, Tsvi Tlusty, and Hyuk Kyu Pak. "Lossless Brownian Information Engine." Physical Review Letters. DOI: 10.1103/PhysRevLett.120.020601

Journal information: Physical Review Letters

© 2018 Phys.org