'Seeing' around corners: DARPA research into holographic imaging of hidden objects

Researchers from SMU's Lyle School of Engineering will lead a multi-university team funded by the Defense Advanced Research Projects Agency (DARPA) to build a theoretical framework for creating a computer-generated image of an object hidden from sight around a corner or behind a wall.

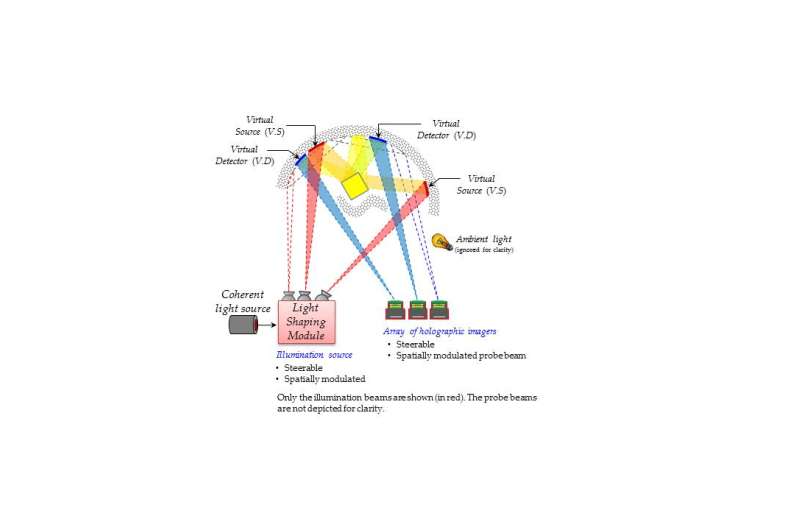

The core of the proposal is to develop a computer algorithm to unscramble the light that bounces off irregular surfaces to create a holographic image of hidden objects.

"This will allow us to build a 3-D representation - a hologram - of something that is out of view," said Marc Christensen, dean of the Bobby B. Lyle School of Engineering at SMU and principal investigator for the project.

"Your eyes can't do that," Christensen said. "It doesn't mean we can't do that."

The DARPA award is for a four-year project with anticipated total funding of $4.87 million. SMU Lyle has been awarded $2.2 million for the first two years of what DARPA calls the "REVEAL" project, with the expectation that phase II funding of another $2.67 million will be awarded by 2018. SMU is the lead university for the research and is collaborating with engineers from Rice, Northwestern, and Harvard.

Co-investigators for the SMU team are Duncan MacFarlane, Bobby B. Lyle Centennial Chair in Engineering Entrepreneurship and professor of electrical engineering; and Prasanna Rangarajan, a research assistant professor who directs the Lyle School's Photonics Architecture Lab.

DARPA's mission, which dates back to the U.S. response to the Soviet Union's launch of Sputnik in 1957, is to make pivotal investments in breakthrough technologies for national security.

In seeking proposals for its "REVEAL" program, DARPA officials noted that conventional optical imaging systems today largely limit themselves to the measurement of light intensity, providing two-dimensional renderings of three-dimensional scenes and ignoring significant amounts of additional information that may be carried by captured light. SMU's Christensen, an expert in photonics, explains the challenge like this:

"Light bounces off the smooth surface of a mirror at the same angle at which it hits the mirror, which is what allows the human eye to 'see' a recognizable image of the event, a reflection," Christensen said. "But light bouncing off the irregular surface of a wall or other non-reflective surface is scattered, which the human eye cannot image into anything intelligible.

"So the question becomes whether a computer can manipulate and process the light reflecting off a wall - unscrambling it to form a recognizable image - like light reflecting off a mirror," Christensen explained. "Can a computer interpret the light bouncing around in ways that our eyes cannot?"
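The contrast Christensen draws between a mirror and a wall can be illustrated with the law of reflection. The following is a minimal sketch and not the project's model. It reflects a ray off a flat mirror using the standard vector formula r = d - 2(d·n)n. It then treats a rough wall as a set of tiny facets with random tilts, which is a hypothetical simplification. Each facet still obeys the law of reflection, but the outgoing rays fan out in different directions. That spread is the "scrambling" a computer would have to undo. The names `reflect`, `angle_deg`, and `tilted_normal` are illustrative helpers introduced here.

```python
import math
import random

def reflect(d, n):
    """Specular reflection of direction d about unit normal n: r = d - 2(d.n)n."""
    dot = sum(di * ni for di, ni in zip(d, n))
    return tuple(di - 2 * dot * ni for di, ni in zip(d, n))

def angle_deg(a, b):
    """Angle between vectors a and b, in degrees."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(x * x for x in b))
    return math.degrees(math.acos(max(-1.0, min(1.0, dot / (na * nb)))))

def tilted_normal(tilt_deg):
    """Unit normal of a facet tilted by tilt_deg in the x-z plane (hypothetical rough-surface model)."""
    t = math.radians(tilt_deg)
    return (math.sin(t), 0.0, math.cos(t))

# Flat mirror lying in the x-y plane; light arrives 30 degrees from the normal.
mirror_normal = (0.0, 0.0, 1.0)
incoming = (math.sin(math.radians(30)), 0.0, -math.cos(math.radians(30)))
outgoing = reflect(incoming, mirror_normal)

# Angle of incidence equals angle of reflection (both measured from the normal).
incidence = angle_deg(tuple(-c for c in incoming), mirror_normal)
reflection = angle_deg(outgoing, mirror_normal)

# "Rough wall": the same incoming ray hits facets with random tilts (seeded for repeatability).
rng = random.Random(0)
facet_tilts = [rng.uniform(-20.0, 20.0) for _ in range(5)]
scattered = [reflect(incoming, tilted_normal(t)) for t in facet_tilts]

if __name__ == "__main__":
    print(f"incidence={incidence:.1f} deg, reflection={reflection:.1f} deg")
    for tilt, ray in zip(facet_tilts, scattered):
        print(f"facet tilt {tilt:+.1f} deg -> outgoing {tuple(round(c, 3) for c in ray)}")
```

On the mirror, the single outgoing ray is predictable from the incoming one. On the faceted "wall," the same incoming light leaves in several directions, so a simple reflected image is lost.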

To tackle the problem, the team proposes to extend the radiance-propagation-based light transport models currently used in computer graphics and computer vision so that they account for both the finite speed of light and its wave nature. For example, light travels at different speeds through different media (air, water, glass, etc.), and light waves within the visible spectrum scatter by different amounts depending on their wavelength, or color.
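The effects named above can be put in rough numbers. The following is a minimal sketch and is not the OMNISCIENT team's model. It assumes typical textbook refractive indices (about 1.0003 for air, 1.333 for water, and 1.5 for glass) and a hypothetical geometry in which light travels from a source to a wall, then to a hidden object, back to the wall, and finally to a sensor. It shows two things. First, light slows down in denser media, following v = c/n. Second, two hidden points whose round-trip paths differ by only 3 cm produce arrival times about 100 picoseconds apart, which is why accounting for light's finite speed matters. For wavelength dependence, the sketch uses Rayleigh scattering as one well-known example. In Rayleigh scattering, which applies to particles much smaller than the wavelength, intensity scales as λ⁻⁴. The sketch does not claim that this law governs scattering off a wall.

```python
C_VACUUM = 299_792_458.0  # speed of light in vacuum, m/s (exact by definition)

# Assumed approximate refractive indices at visible wavelengths.
REFRACTIVE_INDEX = {"air": 1.0003, "water": 1.333, "glass": 1.5}

def speed_in(medium: str) -> float:
    """Phase speed of light in a medium, v = c / n."""
    return C_VACUUM / REFRACTIVE_INDEX[medium]

def time_of_flight(segment_lengths_m, medium: str = "air") -> float:
    """Total travel time (s) along a multi-bounce path made of straight segments."""
    return sum(segment_lengths_m) / speed_in(medium)

def rayleigh_ratio(short_nm: float, long_nm: float) -> float:
    """How much more strongly short_nm light scatters than long_nm light under Rayleigh's 1/lambda^4 law."""
    return (long_nm / short_nm) ** 4

# Hypothetical geometry (meters): source->wall, wall->hidden point, hidden point->wall, wall->sensor.
t_near = time_of_flight([2.0, 1.5, 1.5, 2.0])
t_far = time_of_flight([2.0, 1.515, 1.515, 2.0])  # hidden point 1.5 cm farther away
delta_ps = (t_far - t_near) * 1e12

blue_vs_red = rayleigh_ratio(450.0, 700.0)  # blue (450 nm) vs. red (700 nm)

if __name__ == "__main__":
    for m in REFRACTIVE_INDEX:
        print(f"{m:>5}: {speed_in(m) / 1e8:.3f} x 10^8 m/s")
    print(f"round trip: {t_near * 1e9:.2f} ns; extra 3 cm of path adds {delta_ps:.1f} ps")
    print(f"blue scatters ~{blue_vs_red:.2f}x more than red (Rayleigh)")
```

The picosecond-scale gap is the kind of timing detail that a model based only on intensity ignores.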

The goal of the DARPA program is to develop a fundamental science of indirect imaging in scattering environments. That science is intended to lead to systems that can "see" around corners and behind obstructions at distances ranging from meters to kilometers.

People have been using imaging systems to gain knowledge of distant or microscopic objects for centuries, Christensen notes. But the last decade has witnessed a number of advancements that prepare engineers for the revolution that DARPA is seeking.

"For example, the speed and sophistication of signal processing (the computational analysis and manipulation of measured signals, such as digitized sensor data) has reached the point where we can accomplish really intensive computational tasks on handheld devices," Christensen said. "What that means is that whatever solutions we design should be easily transportable into the battlefield."

The SMU-led project is working under the acronym OMNISCIENT, for "Obtaining Multipath & Non-line-of-sight Information by Sensing Coherence & Intensity with Emerging Novel Techniques." The team unites leading researchers in the fields of computational imaging, computer vision, signal processing, information theory and computer graphics.

Guiding the Rice University component of the research are Ashok Veeraraghavan, assistant professor of electrical and computer engineering, and Richard Baraniuk, Victor E. Cameron Professor. Leading the Northwestern component is Oliver Cossairt, assistant professor of electrical engineering and computer science and head of the university's Computational Photography Lab. The Harvard research is led by Todd Zickler, professor of electrical engineering and computer science. Wolfgang Heidrich, director of the Visual Computing Center at King Abdullah University of Science and Technology, will be a consultant to the SMU team.

Provided by Southern Methodist University