Process variation threatens to slow down and even pause chip miniaturization

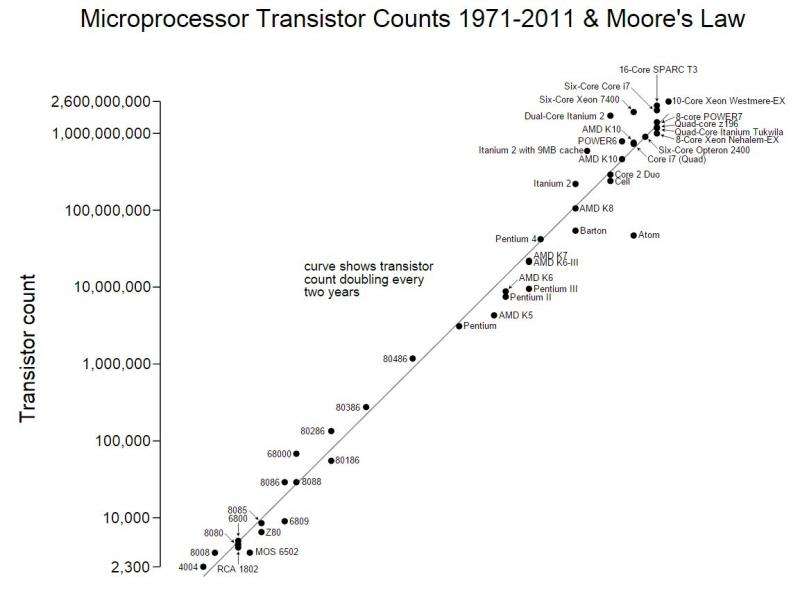

For the past several decades, the processor industry has enjoyed the benefits of chip miniaturization and the exponential increase in the number of on-chip transistors predicted by Moore's law. However, as process technology scales to smaller feature sizes, precise control of fabrication processes has become increasingly difficult. As a result, 'process variation' (PV), the deviation of device parameters from their nominal specifications, has become far more severe.

Above roughly the 350nm technology node, PV had a negligible effect on processors, since the magnitude of variation was insignificant compared to the device size. With ongoing process scaling, however, the effect of PV can be seen on all metrics of interest, such as performance, energy and yield.

For example, due to PV, the maximum clock frequency of different cores in a 65nm, 80-core Intel processor can vary between 5.7 GHz and 7.3 GHz. Similarly, the timing parameters in a DDR3 DRAM device can be up to 66 percent lower than the datasheet specifications. PV can lead to as much as a 9X variation in sleep power across different instances of ARM Cortex M3 processors. In phase change memory (PCM), the write endurance of different cells can vary by up to 50X.

The effect of PV also increases at low voltages. As the supply voltage continues to scale with process technology (e.g., from 5V at 800nm to ~1.1V at 32nm), and as voltage-scaling approaches are deployed to save energy, the effect of PV is expected to worsen. In fact, one study reports chip yields falling from nearly 90 percent at 350nm to 50 percent at the 90nm feature size. It has been estimated that, if left unaddressed, PV can wipe out the performance gain obtained from an entire process technology generation.

These points are highlighted in a recent survey paper titled "A Survey Of Architectural Techniques for Managing Process Variation" by ORNL researcher Sparsh Mittal. The paper, accepted in ACM Computing Surveys 2015, investigates the impact of PV and strategies for mitigating it across a wide range of systems: CPUs and GPUs; processor components (cache, main memory, processor core); memory technologies (SRAM, DRAM, eDRAM, and non-volatile memories such as PCM and resistive RAM); and both 2D and 3D processors.

The paper also summarizes some commonly used system-level techniques for managing process variation, such as task scheduling, dynamic voltage and frequency scaling (DVFS) and the use of redundant storage. For example, in multicore processors, tasks can be scheduled on the core that is least affected by PV. Similarly, a higher supply voltage or additional refresh operations can be provisioned for the block most affected by PV. Further, PV-affected parts (e.g. registers or cache blocks) can be disabled and normal or spare parts used instead. Faults in PV-affected parts can also be corrected using error-correcting codes (ECC). These techniques have shown significant potential in alleviating the impact of PV on processors.
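To make the task-scheduling idea concrete, here is a minimal Python sketch of variation-aware scheduling. It is an illustration only, not a method taken from the survey: the `Core` class, the `schedule` function, the per-core frequencies and the task sizes are all hypothetical, and a simple greedy heuristic (longest task first, each placed on the core that would finish it earliest) stands in for whatever policy a real system would use.

```python
from dataclasses import dataclass, field


@dataclass
class Core:
    core_id: int
    max_freq_ghz: float        # assumed: per-core maximum frequency measured after fabrication
    busy_until: float = 0.0    # time in seconds at which the core becomes free
    tasks: list = field(default_factory=list)


def schedule(tasks, cores):
    """Greedy variation-aware placement (illustrative heuristic only).

    tasks: dict mapping task name -> work in giga-cycles (hypothetical units).
    Tasks are taken longest first; each goes to the core that would
    finish it earliest given that core's own maximum frequency.
    """
    for name, gcycles in sorted(tasks.items(), key=lambda kv: kv[1], reverse=True):
        best = min(cores, key=lambda c: c.busy_until + gcycles / c.max_freq_ghz)
        best.busy_until += gcycles / best.max_freq_ghz  # giga-cycles / GHz = seconds
        best.tasks.append(name)
    return cores


if __name__ == "__main__":
    # Frequencies chosen inside the 5.7-7.3 GHz spread quoted above; the
    # intermediate values and the task sizes are made up for illustration.
    cores = [Core(0, 5.7), Core(1, 6.4), Core(2, 7.3)]
    work = {"render": 90.0, "encode": 60.0, "index": 30.0, "log": 10.0}
    for core in schedule(work, cores):
        print(core.core_id, core.max_freq_ghz, core.tasks, round(core.busy_until, 2))
```

The design choice worth noting is that the scheduler treats cores as heterogeneous even though they were designed to be identical: the heaviest work gravitates to the cores that variation left fastest, while slower cores still absorb smaller tasks rather than sitting idle.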

As ongoing process scaling confronts the formidable challenge of rising process variation, the design of computing systems is likely to undergo a major overhaul. Overcoming these obstacles to design variation-resilient computing systems is the challenge that awaits us in the near future.

More information: A Survey Of Architectural Techniques for Managing Process Variation: www.academia.edu/19490711/A_Su … ng_Process_Variation

(c) 2015 Phys.org