Robotics insights through flies' eyes

To understand how a fly's tiny brain processes visual information efficiently enough to guide its aerobatic feats -- and ultimately to build more capable robots -- researchers in Munich, Germany, have set up a flight simulator for flies.

Common and clumsy-looking, the blow fly is a true artist of flight. Suddenly changing direction, standing still in the air, spinning lightning-fast around its own axis, and making precise, pinpoint landings - all these maneuvers are simply a matter of course. Extremely quick eyesight helps to keep it from losing orientation as it races to and fro. Still, how does its tiny brain process the multiplicity of images and signals so rapidly and efficiently?

To get to the bottom of this, members of a Munich-based "excellence cluster" called Cognition for Technical Systems or CoTeSys have created an unusual research environment: a flight simulator for flies. Here they're investigating what goes on in flies' brains while they're flying. Their goal is to put similar capabilities in human hands - for example, to aid in developing robots that can independently apprehend and learn from their surroundings.

A fly's brain enables the unbelievable - the animal's easy negotiation of obstacles in rapid flight, split-second reaction to the hand that would catch it, and unerring navigation to the smelly delicacies it lives on. Researchers have long known that flies take in many more images per second than humans do. For human eyes, anything more than 25 discrete images per second will merge into a continuous movement. A blow fly, on the other hand, can perceive 100 images per second as discrete sense impressions and interpret them quickly enough to steer its movement and precisely determine its position in space.

Yet the fly's brain is hardly bigger than a pinhead, too small by far to enable the fly's feats if it functioned exactly the way the human brain does. It must have a simpler and more efficient way of processing images from the eyes into visual perception, and that is a subject of intense interest for robot builders. Today's robots still have great difficulty perceiving their surroundings through their cameras, and even more difficulty making sense of what they see. Merely recognizing obstacles in their own work space takes them too long. So people and their automated helpers still need to be kept apart, for example, by safety enclosures around the machines. Yet a more direct, supportive collaboration between human and machine is a central research goal of CoTeSys. This Munich-area research initiative was co-founded by around one hundred scientists and engineers from five universities and institutes.

Within the framework of CoTeSys, brain researchers from the Max Planck Institute of Neurobiology are exploring how flies manage to apprehend their environment and their own movement so efficiently. Under the leadership of neurobiologist Prof. Alexander Borst, they have built a flight simulator for flies. Here, on a wraparound display, the researchers present diverse patterns, movements, and sensory stimuli to blow flies. Each insect is held in place by a halter, so that electrodes can register the reactions of its brain cells. Thus the researchers observe and analyze what happens in a fly's brain when the animal whizzes in criss-cross flight around a room.

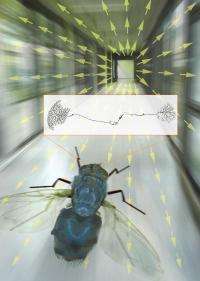

The first results show one thing very clearly: The way flies process the images from their immobile eyes is completely different from the way the human brain processes visual signals. Movements in space produce so-called "optical flux fields" that characterize specific kinds of motion definitively. In forward motion, for example, objects rush past on the sides, and foreground objects appear to get bigger. Near and distant objects appear to move differently. The first step for the fly is to construct a model of these movements in its tiny brain. The speed and direction with which objects before the fly's eyes appear to move generate, moment by moment, a typical pattern of motion vectors, the flux field, which in a second step is assessed by the so-called "lobula plate," a higher level of the brain's vision center. In each hemisphere there are only 60 nerve cells responsible for this; each reacts with particular intensity when presented with the pattern appropriate to it. For the analysis of the optical flux fields, it's important that motion information from both eyes be brought together. This happens over a direct connection of specialized neurons called VS cells. In this way, the fly gets a precise fix on its position and movement.
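To make the idea of a flux field and a cell tuned to "the pattern appropriate to it" more concrete, here is a minimal Python sketch. It is not the researchers' model. It assumes the textbook geometric description of image motion on a spherical eye (usually called optic flow in the computer vision literature), an even grid of viewing directions as a stand-in for the eye, a single fixed distance to all objects, and a simple "matched filter" that sums dot products between a template field and the observed field. All function names and parameters are hypothetical.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def viewing_directions(n_az=12, n_el=5):
    """Unit vectors on an even azimuth/elevation grid (assumed eye layout)."""
    dirs = []
    for i in range(n_az):
        az = 2 * math.pi * i / n_az
        for j in range(n_el):
            # Elevations from -60 to +60 degrees.
            el = -math.pi / 3 + (2 * math.pi / 3) * j / (n_el - 1)
            dirs.append((math.cos(el) * math.cos(az),
                         math.cos(el) * math.sin(az),
                         math.sin(el)))
    return dirs

def flow_field(dirs, rotation=(0, 0, 0), translation=(0, 0, 0), distance=1.0):
    """Apparent motion vector at each viewing direction d:
    flow = -(T - (T.d) d) / distance - R x d
    Only the translational part depends on distance, which is why near
    and distant objects appear to move differently."""
    field = []
    for d in dirs:
        t_par = dot(translation, d)
        trans = tuple(-(t - t_par * di) / distance
                      for t, di in zip(translation, d))
        rot = cross(rotation, d)
        field.append(tuple(tr - r for tr, r in zip(trans, rot)))
    return field

def response(template, observed):
    """Matched-filter response: large when the observed field resembles
    the template the (hypothetical) cell is tuned to."""
    return sum(dot(a, b) for a, b in zip(template, observed))

dirs = viewing_directions()
# x is taken as the forward (body) axis, z as the vertical axis.
roll_template = flow_field(dirs, rotation=(1, 0, 0))
roll_response = response(roll_template, flow_field(dirs, rotation=(1, 0, 0)))
yaw_response = response(roll_template, flow_field(dirs, rotation=(0, 0, 1)))
print("roll-tuned cell, roll stimulus:", roll_response)
print("roll-tuned cell, yaw stimulus: ", yaw_response)
```

In this toy setup the roll-tuned template responds strongly to a roll rotation and hardly at all to a yaw rotation, which illustrates how a small set of cells, each matched to one motion pattern, could report the animal's own movement. Calling `flow_field` with `translation=(1, 0, 0)` gives backward-pointing vectors at the sides and no motion straight ahead, in line with the forward-motion description above.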

Prof. Borst explains the significance of this investigation: "Through our results, the network of VS cells in the fly's brain responsible for rotational movement is one of the best understood circuits in the nervous system." Yet these efforts don't end with purely fundamental research. The discoveries of the neuroscientists in Martinsried are also particularly interesting to the engineers associated with the academic chair for guidance and control at the Technische Universität München (TUM), with whom Prof. Borst collaborates closely in the framework of CoTeSys.

Under the leadership of Prof. Martin Buss and Dr. Kolja Kühnlenz, the TUM researchers are working to develop intelligent machines that can observe their environment through cameras, learn from what they see, and react appropriately to the current situation. Their long-range aim is to enable the creation of intelligent machines that can interact with people directly, effectively, and safely. Even in factories, the safety barriers between humans and robots should fall. To that end, simple, fast, and efficient methods for the analysis and interpretation of camera pictures are absolutely essential.

For example, the TUM researchers are developing small, flying robots whose position and movement in flight will be controlled by a computer system for visual analysis inspired by the example of the fly's brain. One mobile robot, the Autonomous City Explorer (ACE), was challenged to find its way from the institute to Marienplatz at the heart of Munich - a distance of about a mile - by stopping passers-by and asking for directions. To do this, ACE had to interpret the gestures of people who pointed the way, and it had to negotiate the sidewalks and traffic crossings safely.

Increasingly natural interaction between intelligent machines and humans is unthinkable without efficient image analysis. Insights gained from the flight simulator for flies - through the scientific interplay CoTeSys fosters among researchers from various disciplines - offer an approach that might be simple enough to be technically portable from one domain to the other, from the insects to the robots.

More information: www.cotesys.org/ and www.neuro.mpg.de/english/index2.html

Source: Technische Universitaet Muenchen