Advanced AI techniques for predicting and visualizing citrus fruit maturity

Citrus, the world's most valuable fruit crop, is at a crossroads: production growth is slowing, and attention is turning to fruit quality and post-harvest processing. Central to both is citrus color change, a critical indicator of fruit maturity that has traditionally been judged by eye.

Recent machine vision and neural network advancements offer more objective and robust color analysis, but they struggle with varying conditions and translating color data into practical maturity assessments.

Research gaps remain in predicting color transformation over time and developing user-friendly visualization techniques. Additionally, implementing these advanced algorithms on edge devices in agriculture is challenging due to their limited computing capabilities, highlighting a need for optimized, efficient technologies in this field.

In June 2023, Plant Phenomics published a research article titled "Predicting and Visualizing Citrus Color Transformation Using a Deep Mask-Guided Generative Network."

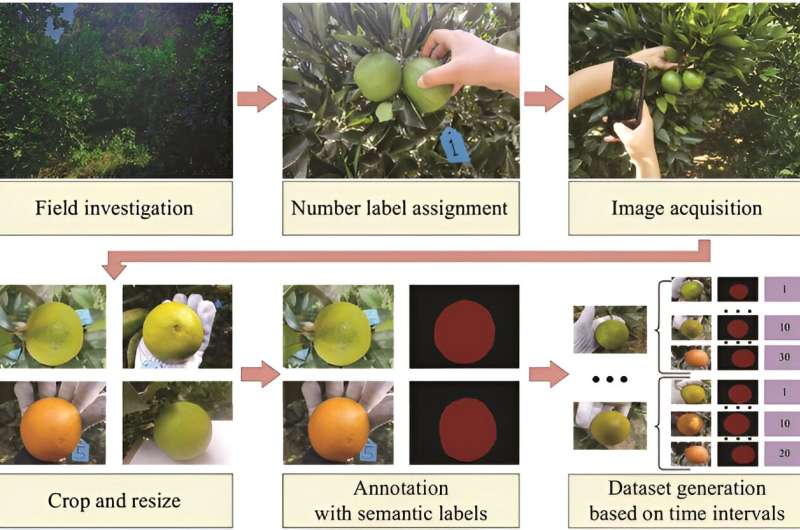

In this study, researchers developed a novel framework for predicting and visualizing citrus fruit color transformation in orchards and built an Android application around it. The network model takes a citrus image and a specified time interval as input and outputs an image of the fruit's predicted future color.

A dataset of 107 orange images captured during color transformation was used to train and validate the network. The framework uses a deep mask-guided generative network for prediction, and its design requires fewer computing resources, which eases deployment on mobile devices. Key results include a high Mean Intersection over Union (MIoU) for semantic segmentation, indicating that the network's segmentation holds up under varying conditions.
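For readers unfamiliar with the metric, MIoU averages, over classes, the overlap between a predicted mask and a ground-truth mask divided by their union. The following is a minimal sketch of the standard definition, not the authors' evaluation code; the function name `mean_iou`, the two-class labeling (0 = background, 1 = fruit), and the toy masks are assumptions made for illustration.

```python
import numpy as np

def mean_iou(pred, target, num_classes):
    """Mean Intersection over Union, averaged over classes present in either mask."""
    ious = []
    for c in range(num_classes):
        pred_c = pred == c
        target_c = target == c
        union = np.logical_or(pred_c, target_c).sum()
        if union == 0:
            continue  # class absent from both masks, so it is skipped
        intersection = np.logical_and(pred_c, target_c).sum()
        ious.append(intersection / union)
    if not ious:
        return float("nan")
    return float(np.mean(ious))

# Toy 2x3 masks (hypothetical labels: 0 = background, 1 = fruit)
pred = np.array([[0, 1, 1],
                 [0, 0, 1]])
target = np.array([[0, 1, 1],
                   [0, 1, 1]])
miou = mean_iou(pred, target, num_classes=2)
print(f"MIoU = {miou:.4f}")
```

Here the background class scores 2/3 and the fruit class 3/4, so the mean is about 0.71. A value near 1 means the predicted masks almost coincide with the reference masks.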

The network also excelled in citrus color prediction and visualization, demonstrated by high peak signal-to-noise ratio (PSNR) and low mean local style loss (MLSL), indicating less distortion and high fidelity of generated images. The generative network's robustness was evident in its ability to replicate color transformation accurately, even with different viewing angles and colors of oranges.
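PSNR compares a generated image with a reference image through their mean squared error, so higher values mean less distortion. The sketch below uses the standard formula for 8-bit images and is not taken from the paper; the function name `psnr` and the toy images are assumptions, and MLSL, which is specific to the study, is not covered here.

```python
import numpy as np

def psnr(reference, generated, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two images of the same shape."""
    ref = reference.astype(np.float64)  # convert first to avoid uint8 wraparound
    gen = generated.astype(np.float64)
    mse = np.mean((ref - gen) ** 2)
    if mse == 0:
        return float("inf")  # identical images
    return float(10.0 * np.log10(max_value ** 2 / mse))

# Toy 4x4 RGB images (hypothetical data): one channel of one pixel differs by 10
reference = np.full((4, 4, 3), 200, dtype=np.uint8)
generated = reference.copy()
generated[0, 0, 0] = 190

value = psnr(reference, generated)
print(f"PSNR = {value:.2f} dB")
```

Because PSNR is logarithmic in the error, small pixel-level differences still yield high scores, which is why it is usually read alongside a perceptual or style-based measure.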

Additionally, the network's merged design, incorporating embedding layers, allowed for accurate predictions over various time intervals with a single model, reducing the need for multiple models for different time frames. Sensory panels further validated the network's effectiveness, with a majority finding high similarity between synthesized and real images.

In summary, this study's innovative approach allows for more precise monitoring of fruit development and optimal harvest timing, with potential applications extending to other citrus species and fruit crops. The framework's adaptability to edge devices like smartphones makes it highly practical for in-field use, demonstrating the potential of generative models in agriculture and beyond.

More information: Zehan Bao et al, Predicting and Visualizing Citrus Color Transformation Using a Deep Mask-Guided Generative Network, Plant Phenomics (2023). DOI: 10.34133/plantphenomics.0057

Provided by TranSpread