Let the robot take the wheel: Autonomous navigation in space

Tracking spacecraft as they traverse deep space isn't easy. So far, it's been done manually, with operators of NASA's Deep Space Network, one of the most capable communication arrays for contacting probes on interplanetary journeys, checking data from each spacecraft to determine where it is in the solar system.

As more and more spacecraft start to make those harrowing trips between planets, that system will not be scalable. So engineers and orbital mechanics experts are rushing to solve this problem—and now a team from Politecnico di Milano has developed an effective technique that would be familiar to anyone who has seen an autonomous car.

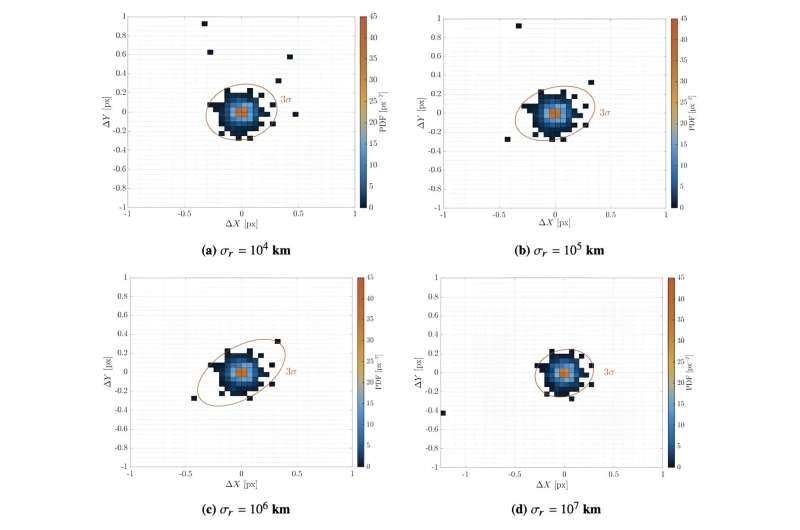

Visual systems are at the heart of most autonomous vehicles here on Earth, and they are also the heart of the system outlined by Eleonora Andreis and her colleagues. Instead of taking pictures of the surrounding landscape, these visual systems, essentially highly sensitive cameras, take pictures of the light sources surrounding the probe and focus on a specific kind.

Those light sources, long known to wander across the sky, are the planets. Combining their positions in an image with a precise time kept on board the probe can accurately determine where the probe is in the solar system. Importantly, such a calculation needs relatively little computing power, making it possible to automate the entire process on board, even on a Cubesat.
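To make the geometry concrete, here is a minimal sketch of how two planet sightings can pin down a position. It is not the algorithm from the paper: it is a plain least-squares triangulation, and it assumes the planets' positions at the image time are already known from an ephemeris and that the camera gives perfect direction vectors. The function names and the coordinates (in astronomical units) are hypothetical.

```python
import math


def unit(v):
    """Return v scaled to length 1."""
    n = math.sqrt(sum(c * c for c in v))
    return [c / n for c in v]


def solve3(a, b):
    """Solve the 3x3 system a x = b by Gaussian elimination with pivoting."""
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, 3):
            f = m[r][col] / m[col][col]
            for c in range(col, 4):
                m[r][c] -= f * m[col][c]
    x = [0.0, 0.0, 0.0]
    for i in (2, 1, 0):
        x[i] = (m[i][3] - sum(m[i][j] * x[j] for j in range(i + 1, 3))) / m[i][i]
    return x


def triangulate(planet_positions, lines_of_sight):
    """Find the point closest to every sight line through a planet.

    Each sighting says the probe lies on the line through planet p with
    direction u, i.e. (I - u u^T)(p - r) = 0. Summing over sightings gives
    a 3x3 linear system for the probe position r.
    """
    a = [[0.0] * 3 for _ in range(3)]
    b = [0.0] * 3
    for p, los in zip(planet_positions, lines_of_sight):
        u = unit(los)
        for i in range(3):
            for j in range(3):
                proj = (1.0 if i == j else 0.0) - u[i] * u[j]
                a[i][j] += proj
                b[i] += proj * p[j]
    return solve3(a, b)


# Hypothetical scenario: made-up positions in AU, not real ephemeris data.
true_probe = [1.2, 0.3, 0.05]
planets = [[-0.7, 0.2, 0.0], [1.4, -0.6, 0.03]]
# Simulated camera measurements: direction from the probe to each planet.
sightings = [[p[k] - true_probe[k] for k in range(3)] for p in planets]

estimate = triangulate(planets, sightings)
print(estimate)
```

With noise-free directions the estimate matches the simulated position up to rounding. A real system would also have to cope with measurement noise, light travel time, and planets that lie nearly in the same direction, which this sketch ignores.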

This contrasts with more complicated algorithms, such as those that use pulsars or radio signals from ground stations as their basis for navigation. These require many more images (or radio signals) in order to calculate an exact position, thereby requiring more computing power than can reasonably be put onto a Cubesat at its current level of development.

Using planets to navigate isn't as simple as it sounds, though, and the recent paper posted to the preprint server arXiv describing this technique points out the different tasks that any such algorithm has to accomplish. Capturing the image is just the start. The next step is figuring out which planets are in the image and, therefore, which would be the most useful for navigation. That information then feeds the calculation of trajectories and speeds, which requires an excellent orbital mechanics algorithm.

After calculating the current position, trajectory, and speed, the probe must make any course adjustments to ensure it stays on the right track. On Cubesats, this can be as simple as firing off some thrusters. Still, any significant difference between the expected and actual thrust output can result in significant discrepancies in the probe's eventual location.
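A rough back-of-the-envelope sketch shows why small thrust errors matter. It assumes the probe simply coasts in a straight line after the burn and ignores gravity entirely, so it only gives an order of magnitude. The function name and the numbers (a 10 m/s burn, a 1% thrust error, a 100-day coast) are hypothetical and not taken from the paper.

```python
SECONDS_PER_DAY = 86_400


def coast_drift_km(planned_dv_m_s, thrust_error_fraction, coast_days):
    """Position drift caused by a burn that was slightly off.

    Straight-line approximation: drift = velocity error * coast time.
    """
    dv_error_m_s = planned_dv_m_s * thrust_error_fraction
    drift_m = dv_error_m_s * coast_days * SECONDS_PER_DAY
    return drift_m / 1000.0


# Hypothetical burn: 10 m/s planned, thruster 1% off, 100 days of coasting.
drift = coast_drift_km(10.0, 0.01, 100)
print(f"{drift:.0f} km")
```

Under these assumptions, a velocity error of only 0.1 m/s grows into a drift of roughly 864 km over 100 days, which is why the probe has to keep re-estimating its position rather than trusting its thrusters.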

To calculate those discrepancies and any other problems that might arise as part of this autonomous control system, the team in Milan implemented a model of how the algorithm would work on a flight from Earth to Mars. Using just the vision-based autonomous navigation system, their model probe calculated its location to within 2,000 km and its speed to within 0.5 km/s at the end of its journey. Not bad for a total trip of around 225 million kilometers.

However, implementing a solution in simulation is one thing; implementing it on an actual Cubesat deep space probe is another. The research that resulted in the algorithm is part of an ongoing European Research Council funding program, so there is a chance that the team could receive additional funding to implement their algorithm in hardware. For now, though, it is unclear what the next steps for the algorithm are. Maybe an enterprising Cubesat designer somewhere can pick it up and run with it or, better yet, let it run itself.

More information: Eleonora Andreis et al, An Autonomous Vision-Based Algorithm for Interplanetary Navigation, arXiv (2023). DOI: 10.48550/arxiv.2309.09590

Journal information: arXiv

Provided by Universe Today