August 31, 2023 report

This article has been reviewed according to Science X's editorial process and policies. Editors have highlighted the following attributes while ensuring the content's credibility:

fact-checked

peer-reviewed publication

trusted source

proofread

Study suggests most people watching extremist videos on YouTube already hold extremist views

A team of sociologists affiliated with several institutions in the U.S. and one in the U.K. has used surveys and viewer tracking to find evidence that most of the audience for extremist videos on YouTube consists of people who already hold such views. In their study, reported in the journal Science Advances, the group analyzed data from public opinion surveys and used online viewer-tracking software to learn more about the viewing habits of YouTube visitors.

Over the past several years, a number of social media sites have been accused of platforming racist, sexist or antagonistic content. Some sites, such as YouTube, were also accused of indirectly promoting such content through their recommendation algorithms. In response, YouTube changed its recommendation algorithms in 2019 to reduce how often such content was recommended. In this new effort, the research team sought to find out how effective those changes had been.

The researchers first analyzed responses to a 2018 public opinion survey about viewing habits on YouTube. They then tracked the viewing habits of 1,181 volunteer YouTube users for 133 days.

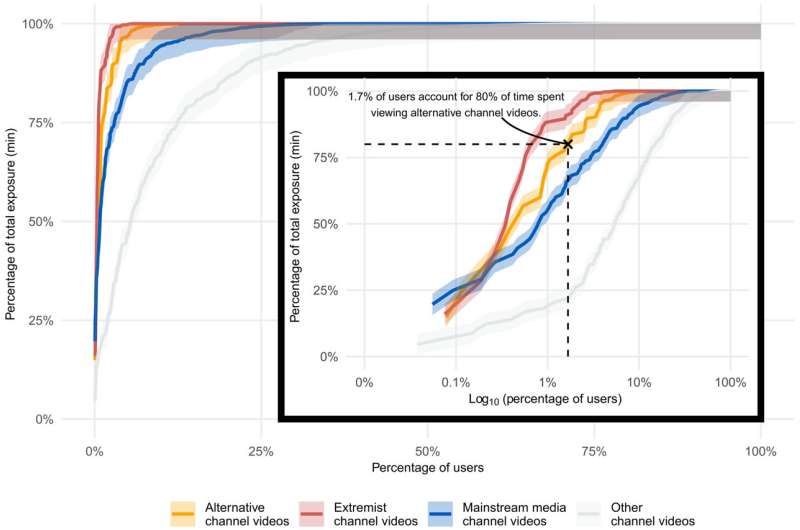

The researchers found that YouTube rarely recommended antagonistic content to anyone except the small number of users who had subscribed to channels known to host such content. More specifically, they found that just 15% of viewers watched videos from alternative channels (which were deemed mildly antagonistic) and that just 0.6% of viewers accounted for 80% of the viewing of extremist videos. Overall, just 1.7% of viewers accounted for approximately 80% of the viewing of such content.

The research team concludes that few extremist videos on YouTube are watched by people who are not specifically looking for them, and that YouTube has been successful in keeping its algorithms from suggesting such content to those who are not looking for it.

More information: Annie Y. Chen et al, Subscriptions and external links help drive resentful users to alternative and extremist YouTube channels, Science Advances (2023). DOI: 10.1126/sciadv.add8080

Journal information: Science Advances

© 2023 Science X Network