Pressing a button is more challenging than it appears: new theory improves button designs

Pressing a button appears effortless, and it is easy to dismiss how challenging it is. Researchers at Aalto University, Finland, and KAIST, South Korea, have created detailed simulations of button pressing with the goal of producing human-like presses.

"This research was triggered by admiration of our remarkable capability to adapt button pressing," says Professor Antti Oulasvirta at Aalto University. "We push a button on a remote controller differently than a piano key. The press of a skilled user is surprisingly elegant when looked at in terms of timing, reliability, and energy use. We successfully press buttons without ever knowing the inner workings of a button. It is essentially a black box to our motor system. On the other hand, we also fail to activate buttons, and some buttons are known to be worse than others."

Previous research has shown that touch buttons perform worse than push-buttons, but there has been no adequate theoretical explanation of why.

"In the past, there has been very little attention to buttons, although we use them all the time," says Dr. Sunjun Kim. The new theory and simulations can be used to design better buttons.

"One exciting implication of the theory is that activating the button at the moment when the sensation is strongest will help users better rhythm their keypresses."

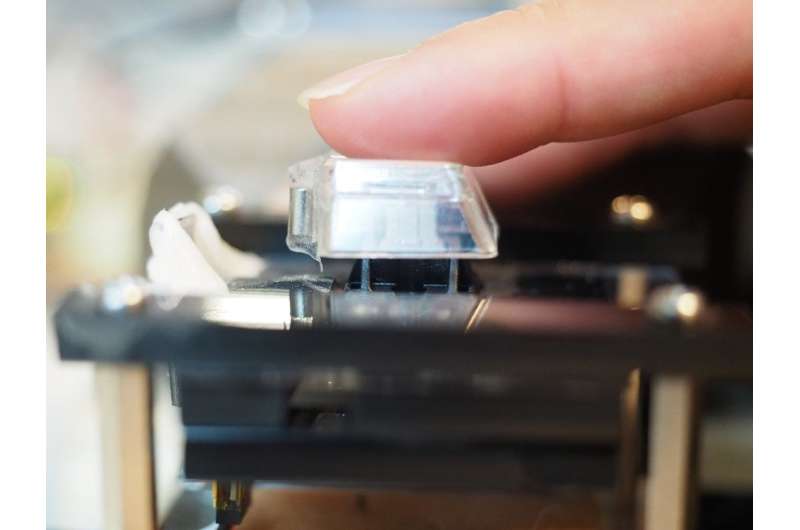

To test this hypothesis, the researchers created a new method for changing the way buttons are activated. The technique is called Impact Activation. Instead of activating the button at first contact, it activates it when the button cap or finger hits the floor with maximum impact.
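The difference between the two activation rules can be illustrated with a minimal sketch. It assumes a recorded trace of force samples from a single press, analysed after the fact; the sensor signal, the contact threshold, and the function names are hypothetical and are not taken from the researchers' implementation.

```python
from typing import Optional, Sequence


def first_contact_index(force: Sequence[float], threshold: float) -> Optional[int]:
    """Return the index of the first sample at or above the contact threshold."""
    for i, value in enumerate(force):
        if value >= threshold:
            return i
    return None  # the finger never touched the button


def impact_index(force: Sequence[float], threshold: float) -> Optional[int]:
    """Return the index of the strongest sample at or after first contact.

    This stands in for "maximum impact"; a real device would have to
    detect the peak online rather than from a finished recording.
    """
    start = first_contact_index(force, threshold)
    if start is None:
        return None
    return max(range(start, len(force)), key=lambda i: force[i])


# Illustrative, made-up force samples (arbitrary units) for one press.
trace = [0.0, 0.1, 0.6, 1.4, 2.9, 1.2, 0.3]
contact = first_contact_index(trace, threshold=0.5)  # regular activation point
impact = impact_index(trace, threshold=0.5)          # impact-based activation point
```

In this toy trace the regular rule fires as soon as the threshold is crossed, while the impact-based rule fires later, at the sample where the force peaks.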

The technique was 94 percent more precise in rapid tapping than the regular activation method for a push-button (Cherry MX switch) and 37 percent more precise than a regular touchscreen button using a capacitive touch sensor. The technique can be easily deployed in touchscreens. However, regular physical keyboards do not offer the required sensing capability, although special products exist (e.g., the Wooting keyboard) on which it can be implemented.

The technique could help gamers and musicians in tasks that require speed and rhythm.

The simulations shed new light on what happens during a button press. One problem the brain must overcome is that muscles do not activate perfectly. Instead, every press is slightly different. Moreover, a button press is very fast, occurring within 100 milliseconds, which is too fast for the movement to be corrected while it is under way. The key to understanding button pressing is therefore to understand how the brain adapts based on the limited sensations that are the residue of the brief button-pressing event.

The researchers argue that the key capability of the brain is a probabilistic model: the brain learns a model that allows it to predict a suitable motor command for a button. If a press fails, it can pick a very good alternative and try it out. "Without this ability, we would have to learn to use every button like it was new," says Professor Byungjoo Lee from KAIST. After successfully activating the button, the brain can tune the motor command to be more precise, to use less energy, and to avoid stress or pain. "These factors together, with practice, produce the fast, minimum-effort, elegant touch people are able to perform."

The brain also uses probabilistic models to extract information optimally from the sensations that arise when the finger moves and its tip touches the button. It "enriches" the ephemeral sensations optimally based on prior experience to estimate the time the button was impacted. For example, tactile sensation from the tip of the finger is a better predictor of button activation than proprioception (angle position) or visual feedback.

Best performance is achieved when all sensations are considered together. To adapt, the brain must fuse their information using prior experiences. Professor Lee explains: "We believe that the brain picks up these skills over repeated button pressings that start already as a child. What appears easy for us now has been acquired over years."
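One common textbook way to express this kind of fusion is inverse-variance weighting, in which each sensation contributes an estimate of the impact time and more reliable sensations receive more weight. The sketch below is an illustration of that general idea only; it is an assumption that it resembles the researchers' model, and the function name and all numbers are made up.

```python
from typing import Sequence, Tuple


def fuse_estimates(means: Sequence[float], variances: Sequence[float]) -> Tuple[float, float]:
    """Combine independent noisy estimates by inverse-variance weighting.

    Returns the fused estimate and its variance. Estimates with a smaller
    variance (more reliable cues) pull the result more strongly.
    """
    if len(means) != len(variances) or len(means) == 0:
        raise ValueError("means and variances must be non-empty and equal in length")
    if any(v <= 0 for v in variances):
        raise ValueError("variances must be positive")
    weights = [1.0 / v for v in variances]
    total = sum(weights)
    fused_mean = sum(w * m for w, m in zip(weights, means)) / total
    fused_variance = 1.0 / total
    return fused_mean, fused_variance


# Hypothetical impact-time estimates in milliseconds (not measured data):
# tactile is given the smallest variance, visual feedback the largest.
cue_means = [100.0, 110.0, 120.0]   # tactile, proprioceptive, visual
cue_variances = [4.0, 16.0, 64.0]
fused_time, fused_var = fuse_estimates(cue_means, cue_variances)
```

Under these assumptions the fused variance is smaller than that of any single cue, which mirrors the statement that performance is best when all sensations are considered together.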

The researchers also used the simulation to explain differences between physical and touchscreen-based button types. Both physical and touch buttons provide clear tactile signals from the impact of the fingertip with the button floor. However, with the physical button this signal is more pronounced and lasts longer.

"Where the two button types also differ is the starting height of the finger, and this makes a difference," explains Prof. Lee. "When we pull up the finger from the touchscreen, it will end up at a different height every time. Its down-press cannot be as accurately controlled in time as with a push-button where the finger can rest on top of the key cap."

Three scientific articles, "Neuromechanics of a Button Press," "Impact activation improves rapid button pressing," and "Moving target selection: A cue integration model," will be presented at the CHI Conference on Human Factors in Computing Systems in Montréal, Canada, in April 2018.

More information: Project web pages:

userinterfaces.aalto.fi/neuromechanics

userinterfaces.aalto.fi/impact_activation

kiml.org/Moving-Target-Selection

Provided by Aalto University