Researchers develop the first model to capture crosstalk in social dilemmas

Previous interactions can affect unrelated future decisions: In a line at a coffee shop, a stranger pays for the coffee of the man behind her, who then pays for the next stranger's coffee. He has had no interaction with the other customers and has no reason to do them a favor, but he does it anyway. This is an example of crosstalk, in which an experience in one interaction spills over into a separate one. Though this notion might seem natural, it had never before been incorporated into simulations of groups engaging in repeated social dilemmas. A new framework developed by computer scientists at IST Austria and their collaborators at Harvard, Yale and Stanford has changed that by enabling the analysis of the effects of crosstalk between games.

The prisoner's dilemma is a classic example of a social dilemma—that is, a situation in which both people would be better off if they cooperated than if they both defected, but there is still some incentive to defect. When social dilemmas are repeated, people subconsciously develop a strategy that dictates when they should cooperate and when they should defect. Researchers use computer simulations to study repeated social dilemmas or "games" by assigning virtual players different strategies, and have established which strategies lead to the development of cooperation, and how stable the resulting cooperative situations are. Successful strategies include, for instance, "tit-for-tat" (I start by cooperating, and then I'll do whatever you did last) or "win-stay, lose-shift" (I start with cooperation, then I'll keep doing what I'm doing until I lose).
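The two strategies named above can be written as short decision rules. The following is a minimal Python sketch, not the researchers' code: the function names, the `play` helper and the `always_defect` opponent are illustrative assumptions, and "losing" in win-stay, lose-shift is taken to mean that the other player defected in the previous round, which is the usual reading of that strategy.

```python
C, D = "C", "D"  # cooperate, defect


def tit_for_tat(my_last, their_last):
    """Cooperate in the first round, then copy the other player's last move."""
    return C if their_last is None else their_last


def win_stay_lose_shift(my_last, their_last):
    """Cooperate first; repeat my move after a 'win', switch after a 'loss'.

    Assumption: a round counts as a win when the other player cooperated.
    """
    if my_last is None:
        return C
    if their_last == C:
        return my_last                 # win: stay with the same move
    return D if my_last == C else C    # loss: shift to the other move


def always_defect(my_last, their_last):
    """Illustrative opponent that never cooperates."""
    return D


def play(strategy_a, strategy_b, rounds):
    """Play a repeated game and return the list of (move_a, move_b) pairs."""
    history = []
    a_last = b_last = None
    for _ in range(rounds):
        a = strategy_a(a_last, b_last)
        b = strategy_b(b_last, a_last)  # each player sees its own move first
        history.append((a, b))
        a_last, b_last = a, b
    return history


print(play(tit_for_tat, always_defect, 3))
```

In this sketch, tit-for-tat cooperates once against the unconditional defector and then defects in every later round, while two tit-for-tat players, or a tit-for-tat player and a win-stay, lose-shift player, cooperate throughout.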

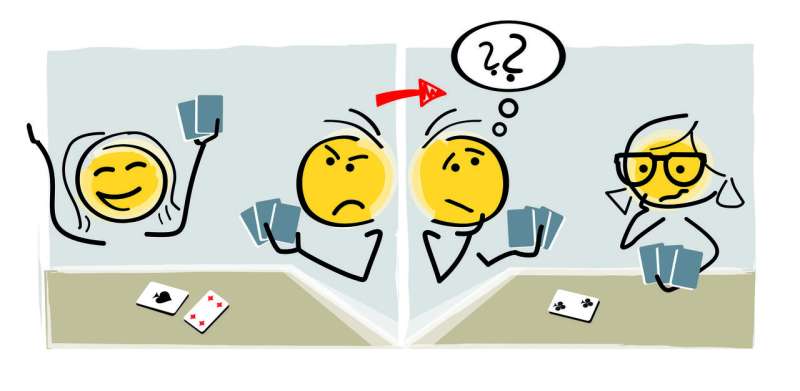

In all of these previous studies, however, scientists have assumed either that a player interacts with only one other player (e.g. Bob only ever plays Alice), or that a player's decisions in one game are completely independent of their decisions in another game (e.g. Bob's games with Alice have no effect on his games with Caroline). These assumptions do not necessarily apply to real-life social dilemmas. Humans are often involved in many simultaneous games, and interactions with one player spill over into games with others. In other words, these games are subject to crosstalk.

Now, a team of researchers has developed a new framework that addresses this limitation in the theory and allows the effects of crosstalk on cooperation dynamics in a population to be evaluated quantitatively. The team brings together experts in evolutionary dynamics, game theory, psychology and economics.

In a given simulation, each virtual player has a memory of the games played with each of the other players. In previous models, a player would review their past with their current opponent, and decide on a course of action based on this past and their game strategy. In the new model, there is some chance that these memories will be replaced by the player's memories of their games with a third player. This method of encoding crosstalk is general, and accounts for many varieties of crosstalk, whether simple human error (mixing people up), paying it forward (remembering good experiences), or some other type. Moreover, it can be applied to any societal network, including groups in which everyone knows everyone else, circles, or a random mess of connections.
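The memory-swapping mechanism described above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions rather than the published model: the names `crosstalk_rate`, `choose_move`, `play_round` and `new_memory` are hypothetical, each player remembers only the last round of each game, the network is assumed to be symmetric (if Bob is Alice's neighbor, Alice is Bob's), and the third player is picked uniformly at random.

```python
import random

C, D = "C", "D"  # cooperate, defect


def tit_for_tat(my_last, their_last):
    return C if their_last is None else their_last


def always_defect(my_last, their_last):
    return D


def new_memory(neighbors):
    """memory[i][j] holds (i's last move, j's last move) in the game i vs j."""
    return {i: {j: (None, None) for j in neighbors[i]} for i in neighbors}


def choose_move(player, opponent, memory, neighbors, strategy, crosstalk_rate, rng):
    """Pick a move against `opponent`, possibly recalling the wrong game."""
    source = opponent
    others = [k for k in neighbors[player] if k != opponent]
    if others and rng.random() < crosstalk_rate:
        source = rng.choice(others)  # crosstalk: use the memory of a third player
    my_last, their_last = memory[player][source]
    return strategy(my_last, their_last)


def play_round(memory, neighbors, strategies, crosstalk_rate, rng):
    """Play one simultaneous round of every pairwise game on the network."""
    moves = {}
    for i in neighbors:
        for j in neighbors[i]:
            moves[(i, j)] = choose_move(
                i, j, memory, neighbors, strategies[i], crosstalk_rate, rng
            )
    # Update memories only after all moves are chosen.
    for (i, j), move in moves.items():
        memory[i][j] = (move, moves[(j, i)])
    return moves


# Three players who all know each other; player 2 always defects.
neighbors = {0: [1, 2], 1: [0, 2], 2: [0, 1]}
strategies = {0: tit_for_tat, 1: tit_for_tat, 2: always_defect}
rng = random.Random(0)

memory = new_memory(neighbors)
for _ in range(10):
    moves = play_round(memory, neighbors, strategies, crosstalk_rate=0.0, rng=rng)
print(moves[(0, 1)], moves[(1, 0)])  # games between players 0 and 1
```

With `crosstalk_rate=0.0` the sketch reduces to independent games, so players 0 and 1 keep cooperating with each other no matter what player 2 does. With `crosstalk_rate=1.0` every decision is based on the wrong game, and player 0 already defects against player 1 in the second round because it recalls player 2's defection.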

For researcher Christian Hilbe, this development was exactly what the framework needed. "When modeling repeated games, you always have certain phenomena that you want to describe. For me, it never felt as though previous models were complete. When we introduced crosstalk, it was as if everything snapped together—this is the model we should be using."

Human error has previously been considered in simulations of repeated social dilemmas. The difference here is that while these errors affected only the repeated game in which they occurred, crosstalk causes ripple effects across the entire population: "When crosstalk is introduced, suddenly you're not playing against a single person—you're playing against everyone you are connected to, the whole society," explains co-author Krishnendu Chatterjee.

This results in cooperative and defective behavior spreading much more easily—even a single defective player can cause the complete breakdown of cooperation in a society, if the other players are not sufficiently forgiving. But crosstalk also necessitates strategies with the "correct" level of forgiveness: too harsh, and you end up with a society where no one cooperates; too generous, and defection can also spread as players learn to take advantage of other players. Crosstalk also hinders the evolution of cooperation: The authors implemented an evolutionary model, and found that crosstalk decreases the number of different starting societies that end up in stable cooperative states.

Their paper, published today in Nature Communications, presents an interesting message for our current society. Johannes Reiter says, "The presence of crosstalk means that players must be more forgiving, especially in a network that is highly connected. A harsh strategy for cooperation, such as tit-for-tat, is particularly disastrous in this environment."

More information: Johannes G. Reiter et al, Crosstalk in concurrent repeated games impedes direct reciprocity and requires stronger levels of forgiveness, Nature Communications (2018). DOI: 10.1038/s41467-017-02721-8

Journal information: Nature Communications

Provided by Institute of Science and Technology Austria