NASA explores potential of altered realities for space engineering and science

Virtual and augmented reality are transforming the multi-billion-dollar gaming industry. A team of NASA technologists is now investigating how this immersive technology could benefit agency engineers and scientists, particularly in the design and construction of spacecraft and the interpretation of scientific data.

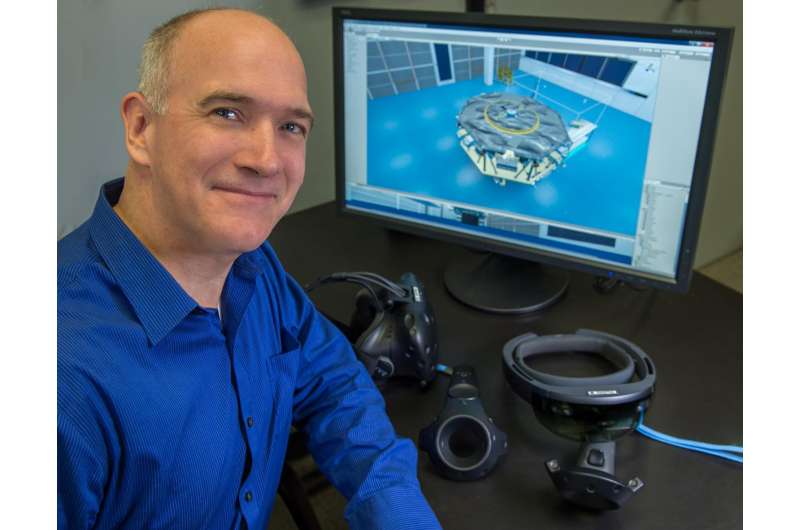

Thomas Grubb, an engineer at NASA's Goddard Space Flight Center in Greenbelt, Maryland, is leading a team of center technical experts and university students to develop six multidisciplinary pilot projects highlighting the potential of virtual and augmented reality, also known as VR and AR. These pilots showcase current capabilities in engineering operations and science, but also provide a glimpse into how technologists could use the technology in the future.

"Anyone who followed the popularity of Pokémon Go has seen how the public has embraced this technology," said Grubb, referring to the augmented-reality game that quickly became a global sensation in 2016. "Just as it's changing the gaming industry, it will change the way we do our jobs," Grubb added. "Five years from now, it's going to be amazing."

To understand the potential, Grubb, whose project is funded by NASA's Center Innovation Fund, said people need to understand how the two technologies differ and how they have evolved.

Virtual reality typically involves wearing a headset that allows the user to experience and interact with an artificial, computer-generated reality. By combining computer-generated 3-D graphics and coded behaviors—that is, how the app will respond when the user chooses an action—these simulations can be used for design and analysis, entertainment, and training. They allow the user to feel like he or she is experiencing the situation firsthand.

Augmented reality, on the other hand, doesn't move the user to a different place, but adds something to it. As with Pokémon Go, augmented reality is made possible through low-end devices like smartphones and high-end AR headsets that blend digital components into the real world. In other words, with virtual reality, the user swims with the sharks; with augmented reality, the shark pops out of the user's cellphone.

Although the computer-generated technology can trace its heritage to the 1980s with the advent of electronic-gaming devices, it has advanced rapidly over the past 15 years, largely because of sophisticated computer technologies that render more realistic 3-D experiences and the decline in prices for headsets, handheld devices, and other gear.

"For several years, commercial VR and AR technology has been showing promise, but without real tangible results," said Ted Swanson, senior technologist for strategic integration for Goddard's Office of the Chief Technologist. "However, recently there have been substantial developments in VR/AR hardware and software that may allow us to use this technology for scientific and engineering applications."

The aim isn't to reinvent the hardware and software developed by technology companies, but to be a "consumer of the products and create NASA-oriented applications," he said.

The pilots, which involve students from the University of Maryland, College Park, and Bowie State University, also in Maryland, are as diverse as the specialties in which NASA excels, Swanson said.

Under one, Grubb and his university collaborators are creating a collaborative virtual-reality environment where users don headgear and use hand controls to design, assemble, and interact with spacecraft using pre-defined, off-the-shelf parts and virtual tools, such as wrenches and screwdrivers. "The collaborative capability is a major feature in VR," Grubb said. "Even though they may work at locations hundreds of miles apart, engineers could work together to build and evaluate designs in real-time due to the shared virtual environment. Problems could be found earlier, which would save NASA time and money."

In other engineering-related apps, the team has created a 3-D simulation of Goddard's thermal-vacuum chamber to help engineers determine whether all spacecraft components would fit inside the facility before testing begins. In another, involving on-orbit robotic servicing, an augmented-reality app combines camera views and telemetry data in one location, an important capability for technicians who operate robotic arms such as the one on the International Space Station. All information is within the operator's field of view, alerting the operator to potential problems before they happen.

Just as important is applying the technology to scientific analysis, Grubb said.

The team has applied digital elevation maps and lidar data to create a 3-D simulation of terrestrial lava flows and tubes. The goal is to develop a proof-of-concept app that would allow scientists to compare remotely collected data with what they observe in the field. In another, the team is creating a 3-D visualization of space around the sun for mission planning. This simulation involves a constellation of CubeSats surrounding the sun to investigate the structure of the solar atmosphere, including the formation of coronal mass ejections that, when intense and traveling in the right direction, can affect low-Earth-orbiting spacecraft and power grids.

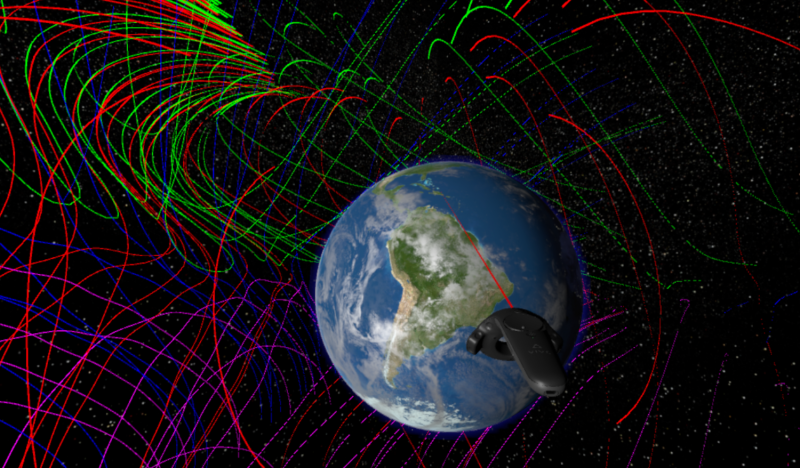

And last, the team is creating a virtual-reality environment where users can explore and visualize topographical features of Earth's protective magnetosphere. This app allows users to study magnetic reconnection sites, which are difficult to interpret without observations from more than one vantage point, Grubb said.

"I'm a gamer, but I see the potential for engineering and science applications," Grubb said. "We're in the early stages, but I believe this technology will transform how we work here. It will enhance engineering and give scientists a unique perspective of data."

Provided by NASA's Goddard Space Flight Center