May 16, 2017 feature

Stars as random number generators could test foundations of physics

(Phys.org)—Stars, quasars, and other celestial objects generate photons in a random way, and now scientists have taken advantage of this randomness to generate random numbers at rates of more than one million numbers per second. Generating random numbers at very high rates has a variety of applications, such as in cryptography and computer simulations.

But the researchers in the new study are also interested in using these cosmic random number generators for another purpose: to test the foundations of physics by progressively addressing another loophole in the Bell tests. While Bell tests show that quantum particles are correlated in ways that cannot be explained by classical physics, the results may not be reliable if parts of these tests manage to take advantage of any kind of loophole.

The researchers, led by Jian-Wei Pan at the University of Science and Technology of China in Shanghai, have published a paper on using cosmic sources to generate random numbers in a recent issue of Physical Review Letters.

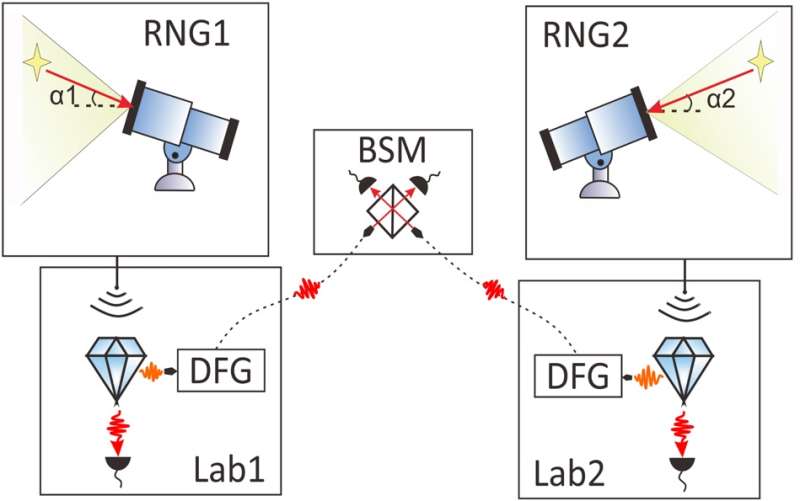

"We presented an experimental realization of cosmic random number generators (RNGs) and a realistic design of an event-ready Bell test experiment with these RNGs to address the freedom-of-choice loophole while closing the locality and efficiency loopholes simultaneously," coauthor Jingyun Fan told Phys.org. "It will be of high interest to implement the proposed experiment in the near future."

In their work, the researchers used an optical telescope located at the Astronomy Observatory in Xinglong, China, to collect light from a variety of very bright and distant cosmic radiation sources. Some of these objects are more than a trillion times brighter than our Sun and located hundreds of millions of light-years away.

Since the time interval between photon emission events is random, the photons are detected by the telescope at random time intervals. The device has a time resolution of 25 picoseconds (a picosecond is one trillionth of a second). On average, a photon is detected about once every 100 nanoseconds, corresponding to more than a million photons detected per second. This rate is competitive with today's best random number generators, which use lasers as the photon source.
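To make the idea concrete, here is a minimal sketch of how random arrival times can be turned into random bits. It is an illustration under stated assumptions, not the authors' method: the photon stream is simulated as a Poisson process, and each bit is taken from the parity of the 25-picosecond time bin in which a photon lands. The function names are hypothetical; only the 25-picosecond resolution and the 100-nanosecond average interval come from the article.

```python
import random

TIME_RESOLUTION_PS = 25      # detector time resolution quoted in the article
MEAN_INTERVAL_PS = 100_000   # about 100 ns between detections, per the article


def simulate_arrival_times(n_photons, seed=None):
    """Simulate photon arrival times in picoseconds.

    Assumption: detections form a Poisson process, so the waiting time
    between photons is exponentially distributed.
    """
    rng = random.Random(seed)
    t = 0.0
    times = []
    for _ in range(n_photons):
        t += rng.expovariate(1.0 / MEAN_INTERVAL_PS)
        times.append(t)
    return times


def bits_from_arrival_times(times_ps):
    """Map each arrival time to a bit: the parity of its time-bin index.

    Assumption: this parity rule is one simple extraction scheme chosen
    for illustration.
    """
    return [int(t // TIME_RESOLUTION_PS) % 2 for t in times_ps]


def mean_detection_rate_per_second():
    """Average detections per second implied by the mean interval."""
    picoseconds_per_second = 1e12
    return picoseconds_per_second / MEAN_INTERVAL_PS


if __name__ == "__main__":
    times = simulate_arrival_times(20_000, seed=1)
    bits = bits_from_arrival_times(times)
    print("fraction of ones:", sum(bits) / len(bits))
    print("detections per second:", mean_detection_rate_per_second())
```

One detection every 100 nanoseconds works out to about 10 million detections per second, consistent with the "more than a million" figure above. A real generator would also need to account for detector dead time and background light, which this sketch ignores.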

In the second part of their study, the physicists proposed that this cosmic random number generator could be used to improve Bell tests. These tests aim to show that, unlike our observations of the classical world, the quantum world does not obey local realism—a concept that refers to a combination of locality (that objects cannot influence each other across large distances) and realism (that objects exist even before any measurement is made). Violating a Bell inequality shows that, at the quantum level, nature violates either locality or realism, or both.
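As an illustration of what violating a Bell inequality means numerically, the sketch below evaluates the CHSH form of the inequality, a widely used variant that the article does not name. Any local-realistic theory keeps the CHSH combination S within |S| ≤ 2. The sketch assumes the textbook quantum prediction for a pair of spin-1/2 particles in the singlet state, where the correlation between measurements along directions a and b is -cos(a - b). The function names and the choice of angles are illustrative assumptions.

```python
import math


def correlation(a, b):
    """Textbook quantum correlation for a spin singlet pair measured
    along directions at angles a and b (radians)."""
    return -math.cos(a - b)


def chsh_value(a, a_prime, b, b_prime):
    """CHSH combination S = E(a,b) - E(a,b') + E(a',b) + E(a',b')."""
    return (
        correlation(a, b)
        - correlation(a, b_prime)
        + correlation(a_prime, b)
        + correlation(a_prime, b_prime)
    )


if __name__ == "__main__":
    # Standard angle choice that maximizes the quantum violation.
    s = chsh_value(0.0, math.pi / 2, math.pi / 4, 3 * math.pi / 4)
    print("|S| =", abs(s))               # quantum prediction
    print("local-realist bound =", 2.0)  # any local-realistic model obeys |S| <= 2
```

With these angles the quantum prediction is |S| = 2√2, about 2.83, which exceeds the local-realist bound of 2. In an experiment, the two settings on each side are the choices that the random number generators are meant to make unpredictably.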

However, Bell tests have several loopholes. Typically, loopholes are ways for the objects being measured to secretly share information in a classical way in order to make it appear that local realism is violated when it is not. Although physicists have recently closed two of these loopholes (the locality loophole and detection loophole), there may always be some loopholes that can conceivably circumvent the restrictions of the test.

One such possibility is called the freedom-of-choice (or randomness) loophole. This loophole suggests that the detector settings, which are determined using random number generators, could have somehow been correlated even before the experiment began. Until now, it was thought that these correlations could have arisen as late as a fraction of a second before the start of the experiment.

By using random number generators based on cosmic sources, the researchers showed that these correlations must have occurred before the photons left the stars, which is roughly 3000 years or more before the experiment began, an improvement of more than 16 orders of magnitude. (A couple of months earlier, a paper was independently published that restricted the correlations to at least 600 years in the past, using similar methods based on cosmic sources of random number generation.)
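The scale of that improvement can be checked with a few lines of arithmetic. The sketch below converts 3000 years to seconds and works out how short the earlier time window must have been for the gain to exceed 16 orders of magnitude. The article does not give the earlier figure, so the one-microsecond baseline in the example is purely a hypothetical value, and the function names are illustrative.

```python
import math

SECONDS_PER_YEAR = 365.25 * 24 * 3600  # Julian year


def years_to_seconds(years):
    """Convert a duration in years to seconds."""
    return years * SECONDS_PER_YEAR


def orders_of_magnitude(new_seconds, old_seconds):
    """Base-10 logarithm of the ratio between two durations."""
    return math.log10(new_seconds / old_seconds)


def implied_baseline_seconds(new_seconds, orders):
    """Earlier time window that would give exactly `orders` of improvement."""
    return new_seconds / 10 ** orders


if __name__ == "__main__":
    cosmic = years_to_seconds(3000)
    print("3000 years in seconds:", cosmic)
    print("baseline for 16 orders:", implied_baseline_seconds(cosmic, 16))
    # Hypothetical baseline of one microsecond, not a figure from the article.
    print("orders vs 1 microsecond:", orders_of_magnitude(cosmic, 1e-6))
```

Three thousand years is about 9.5 × 10^10 seconds, so an improvement of more than 16 orders of magnitude implies an earlier window shorter than about 10 microseconds, which fits the description of "a fraction of a second."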

In addition, a third independent group of researchers has recently suggested that the time constraint for the freedom-of-choice loophole could be pushed back by billions of years by using very distant quasars as random number generators.

To explore this possibility, the researchers in the new study suggest that a satellite-based cosmic Bell experiment may achieve better results than Earth-based experiments because, for one thing, it would avoid atmospheric disturbances. They hope to pursue such improvements in the future.

More information: Cheng Wu et al. "Random Number Generation with Cosmic Photons." Physical Review Letters. DOI: 10.1103/PhysRevLett.118.140402

Journal information: Physical Review Letters

© 2017 Phys.org