Safe navigation on construction sites

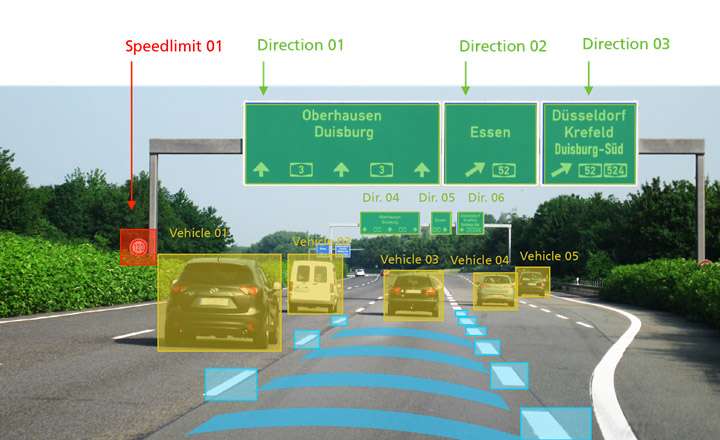

Automated vehicles must be able to detect traffic signs reliably. Previous systems, however, have struggled to interpret complex traffic management schemes that combine different information about speed limits or the course of the lanes, as is typical at construction sites. Fraunhofer researchers are developing technologies for interpreting such signs in real time, which they will present at CeBIT in Hanover from March 20 to 24, 2017 (Hall 6, Booth B36).

Construction sites pose a challenge for automated vehicles. Because lanes usually narrow, traffic backs up, and drivers often react hesitantly or under stress, accidents occur more frequently there. The systems in automated vehicles also struggle with this complex situation: old and new road markings overlap, and lane-boundary beacons and traffic cones are difficult for the sensors to detect. In addition, the signs carry varying information about the permitted speed or the course of the lanes.

Recognizing patterns more quickly and efficiently using Deep Learning

"Our technology enables a system to read signs of this kind with a high degree of accuracy," says Stefan Eickeler, who is responsible for object recognition at the Fraunhofer Institute for Intelligent Analysis and Information Systems IAIS in Sankt Augustin, Germany. The system processes the information semantically, interprets its content, and makes it available for further processing. "With Deep Learning, a key technology for the future of the automotive industry, we teach the software to recognize the classic patterns more quickly and efficiently."

In the future, the interplay between navigation systems and on-board computers should make it possible to correctly identify highway exits that are signposted differently at construction sites, to keep optimal distances to other vehicles, and to adjust speed in good time. "In the short term, assisted driving could help make driving more relaxed and safer; in the long term, the system is meant to work entirely on its own, with automated vehicles reacting independently," Eickeler explains.

The future vision: A camera replaces numerous sensors

The system uses an automotive camera that currently delivers 20 to 25 frames per second. These images are analyzed while the vehicle is driving, and information from signs, lane markings, and LED traffic signs is identified and processed. The long-term vision is for this camera to serve as the primary interface, making many other sensors superfluous.

At CeBIT, Fraunhofer IAIS will use a virtual tour to present several projects in big data and machine learning, including "Automated driving on construction sites", "Digital assistants and real-time recommendation systems", and "Knowledge graphs for data-driven business models."

Provided by Fraunhofer-Gesellschaft