From here to infinity: 3-D map plots every color farther than the eye can see

All Virginia Commonwealth University professor Robert Meganck wanted was a better technique to teach color theory to his students.

Three years later, what he ended up with is a 3-D infinite color map that could be used for a myriad of applications, from determining the deterioration rate of priceless art to identifying the size and extent of a tumor.

"Most people do not understand color," said Meganck, chair of the Department of Communication Arts in the VCU School of the Arts. "It is one of the most misinterpreted principles."

That misinterpretation starts very young for most of us.

"When you were in kindergarten, your teacher held up a crayon and said, 'This is red,'" he said. "What you saw, you associated with the word red. What the person next to you saw, they associated with the word red. But you did not necessarily see and register the same color. So you've got now a whole different definition of what that color is.

"You don't know that you're seeing differently than somebody else. But in reality, in all probability, that's what you're doing."

So if you wonder what color something—say, a tomato—is, you're already thinking incorrectly. Discerning an object's color is meaningless because color doesn't exist. At least, not the way we think it does.

"You're asking the wrong question because you have to ask 'What color is the light?' That's the answer," he said. If you were to shine a green light on a "red" tomato, it would only be able to reflect green light, causing it to appear green as well.

"Names don't mean anything" when it comes to color, Meganck said. Since there are not enough words in any language to name the infinite number of colors that exist, color identification by means of common names such as red, blue and green is simply inadequate.

The Color Gamut—the 3-D color map that Meganck developed with communication arts professor Matt Wallin and physics professor Peter Martin—eliminates the guesswork in determining a color, as well as the visual perceptual differences that cause us to see color differently.

"Every interpretation of color is based on the scientific properties of light," said Martin, who helped develop the College of Humanities and Science's "Wonders of Technology" course for nonscience VCU students that taught some of the underlying principles of physics, including color perception.

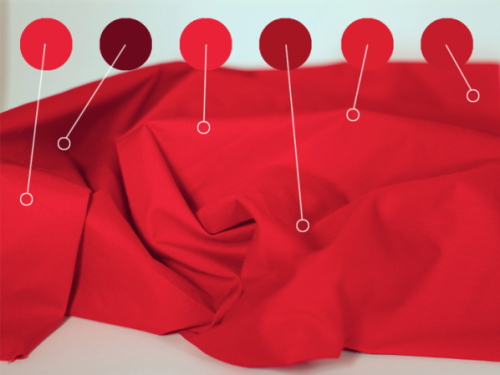

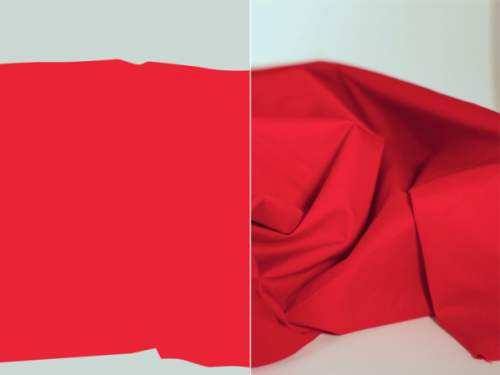

"Our color model is able to take a digital image and analyze it to determine which colors are present in the image and how many times each occurs," he said. "Usually the image consists of R, G and B [red, green and blue] values for each point. The model converts these into an achromatic intensity parameter (Value), which varies between reflecting all the incident light and absorbing all the light, and two chromatic parameters (Hue and Chroma), which describe the color part of the signal. Each color is displayed in an interactive 3D model that can be rotated by the user to show where the colors exist relative to each other."

But rather than relying on imprecise terms such as "red" or "blue," the model uses degrees.

"Is that red?" Meganck asked, pointing to a color on his computer screen. "Well, it doesn't matter [because] that's 40 degrees, that's 35 degrees, that's 30 degrees. … When does it stop being red and when does it start being orange? We're not really worried about that, because we're not worried about names."

By plotting a color's location in three dimensions in relation to all other colors, differences in light, viewer or monitor screen no longer matter. The mathematical plot remains the same.
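
One way to picture such a plot is to treat hue as an angle, chroma as a distance from a central gray axis, and value as height. The Python sketch below makes that assumption explicit: the article does not say which coordinate system the model uses, and the names `hcv_to_xyz` and `color_distance` are hypothetical.

```python
import math


def hcv_to_xyz(hue_deg, chroma, value):
    """Place a color in 3-D space using cylindrical coordinates.

    Assumption: hue is the angle, chroma the radius, value the height.
    The article does not specify the model's actual geometry.
    """
    angle = math.radians(hue_deg)
    return (chroma * math.cos(angle), chroma * math.sin(angle), value)


def color_distance(color_a, color_b):
    """Straight-line distance between two (hue, chroma, value) colors."""
    return math.dist(hcv_to_xyz(*color_a), hcv_to_xyz(*color_b))


# Two colors only 5 degrees apart in hue sit close together...
near = color_distance((30.0, 1.0, 1.0), (35.0, 1.0, 1.0))
# ...while colors on opposite sides of the hue circle sit far apart.
far = color_distance((0.0, 1.0, 1.0), (180.0, 1.0, 1.0))
print(near < far)
```

Because each color reduces to three numbers, two colors can be compared by their coordinates alone, with no reference to a name or to how a particular screen renders them.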

And, because the model is mathematical, there is literally no limit to how specific the researchers can be in plotting colors. But if the human eye can't detect these minuscule shifts in color, does a color's location even matter?

Without a doubt, says Wallin, the definitive expert in computer graphics at VCU. Before joining VCU, he spent nearly 20 years in Hollywood creating computer-generated visual effects for motion pictures and television. While Meganck looks at color as an artist, Wallin looks at it in terms of digital image processing.

"One aspect of my film work consisted of analyzing and matching color of live-action photography and computer-generated imagery," Wallin said. "For the seamless integration of photorealistic visual effects, color is a key element that must be matched perfectly or an audience won't be fooled.

"When I look at what we've built thus far, I'm excited at the potential for analyzing full-motion video footage and using that data as a way to instantly match color in visual effects shots. On top of that we feel that our tool could be used in almost any application where color needs to be analyzed and/or matched. The more discussions we've had with the industry or conferences where we've presented the software, the more possibilities seem to surface."

The map's value to the art world is already evident and growing. For instance, the Virginia Museum of Fine Arts sent Meganck very-high-resolution images of some of the paintings in its collection. He used them to compare the color palette in Vincent van Gogh's "Daisies, Arles" to the color palette in Marsden Hartley's "Franconia Notch."

"I can feed those paintings … into this model and say map me a Monet," Meganck said. "I want to show what that color difference is between morning, noon and night. And I want to map that so that people can use that. Those are really practical applications for this tool."

But the real-world applications go far beyond the art world in unexpected ways that could truly be life-changing.

Julie Zinnert, Ph.D., an instructor with the VCU Department of Biology in the College of Humanities and Sciences and a research biologist with the U.S. Army Corps of Engineers, leads a VCU team exploring how plants may be used to detect buried explosives. Studies have shown that if there is an undetonated landmine buried in the ground beside a plant for a lengthy period of time, the explosive compounds could leak into the environment, ultimately contaminating the plant. Her team uses hyperspectral imaging to measure reflectance values from the blue to near-infrared region of wavelengths to learn unique things about a plant's physiology and its spectral signature. Having the means to precisely measure a plant's color could prove useful.

"We have some images that we collected on our plants, in explosives, and would be interested in trying to apply this model to them," Zinnert said. "There are also other, nonexplosive, ways in which a model like this could be used.

"Plants have a unique reflectance signature in the visible and infrared range and this signature changes with many different things. Sometimes these are subtle shifts, due to changes in leaf pigments, that are difficult for our eyes to see, but could be picked up by a model. With the availability of drones for imaging, it could even be applied to airborne images that would not require the use of expensive spectrometers, which measure the actual reflectance at specific wavelengths. It makes imaging and detection of stress work more accessible to people."

The model could also be used for an instant diagnosis by medical professionals. A quick scan of a blood sample could reveal exactly how much oxygen it contains, or doctors could determine the extent of a malignant tumor by analyzing its color data.

What started as an idea for an educational tool quickly evolved into a tool for full-color image analysis and manipulation, Wallin said.

"It's almost overwhelming when you begin to realize that 'color' is a vital part of almost everything and at the very core of our visual perception," he said.

Provided by Virginia Commonwealth University