Robots recognize humans in disaster environments

Using a computational algorithm, a team of researchers from the University of Guadalajara (UDG) in Mexico has developed a neural network that allows a small robot to detect different patterns, such as images, fingerprints, handwriting, faces, bodies, voice frequencies and DNA sequences.

Nancy Guadalupe Arana Daniel, researcher at the University Center of Exact and Engineering Sciences (CUCEI) at the UDG, focused on the recognition of human silhouettes in disaster situations. To that end, she devised a system in which a robot, equipped with a flashlight and a stereoscopic camera, obtains images of the environment and, after a series of mathematical operations, distinguishes between people and debris.

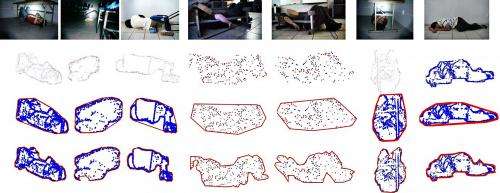

During the imaging process, HD cameras scan the environment. The image is then cleaned and the patterns of interest, in this case human silhouettes, are segmented from the rubble.
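The clean-then-segment step can be illustrated with a minimal sketch. The article does not say which filters or segmentation method the team used, so the 3x3 median filter, the brightness threshold, and the function names `median_filter` and `segment` are all assumptions chosen for illustration, with a grayscale image represented as a plain list of lists.

```python
from collections import deque


def median_filter(img):
    """Clean a grayscale image with a 3x3 median filter.

    Assumed cleaning step (not confirmed by the article).
    Border pixels are copied unchanged.
    """
    h, w = len(img), len(img[0])
    out = [row[:] for row in img]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            window = sorted(
                img[y + dy][x + dx] for dy in (-1, 0, 1) for dx in (-1, 0, 1)
            )
            out[y][x] = window[4]  # middle of the 9 sorted values
    return out


def segment(img, threshold):
    """Group pixels brighter than `threshold` into 4-connected regions.

    Returns a list of regions, each a list of (row, col) tuples.
    """
    h, w = len(img), len(img[0])
    seen = [[False] * w for _ in range(h)]
    regions = []
    for y in range(h):
        for x in range(w):
            if seen[y][x] or img[y][x] <= threshold:
                continue
            seen[y][x] = True
            queue = deque([(y, x)])
            region = []
            while queue:  # breadth-first flood fill from the seed pixel
                cy, cx = queue.popleft()
                region.append((cy, cx))
                for ny, nx in ((cy - 1, cx), (cy + 1, cx), (cy, cx - 1), (cy, cx + 1)):
                    if (
                        0 <= ny < h
                        and 0 <= nx < w
                        and not seen[ny][nx]
                        and img[ny][nx] > threshold
                    ):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            regions.append(region)
    return regions
```

Calling `segment(median_filter(image), threshold)` would then yield candidate regions with isolated noise pixels suppressed; deciding which regions are silhouettes is a separate classification step.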

Because of its complexity, this interdisciplinary project required the support of Alma Yolanda Alanis García, Carlos Alberto López Franco and Gehová Lopez, all from the CUCEI, who handled the visual and motion control of the robot. The robot resembles those seen in animated films and has a crawler-track drive system (like that of a military tank), which is well suited to all types of terrain.

These features are needed to traverse irregular ground. The robot also has motion sensors, cameras, a laser and an infrared system, which allow it to reconstruct the environment and thereby find paths or create 2D maps.

In principle, the whole system is integrated in the robot. When a robot model is too fragile to carry a computer, however, the algorithm runs on a separate laptop and the robot is controlled wirelessly. In that case, the images captured by the robot's cameras for human recognition are transmitted to the computer, said Arana Daniel.

Once the robot has obtained the images, a descriptor system extracts their visual characteristics (3D points), segments the scene by wrapping objects in circles, and then builds the outer human silhouettes. These silhouettes serve as descriptors to train a neural network called CSVM, developed by Arana Daniel, to recognize patterns.
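As a rough illustration of wrapping objects in circles, the sketch below groups nearby 3D points and encloses each group in a circle. The article gives no detail on how the team does this, so the distance-based grouping, the centroid-centred (non-minimal) circle, and the names `group_points`, `enclosing_circle` and `max_gap` are assumptions made for this example.

```python
import math


def group_points(points, max_gap):
    """Group 3D points so that points within `max_gap` of each other
    (directly or through a chain of neighbours) share a group.

    Hypothetical stand-in for the segmentation step.
    """
    unvisited = set(range(len(points)))
    groups = []
    while unvisited:
        seed = unvisited.pop()
        stack, members = [seed], [seed]
        while stack:
            i = stack.pop()
            near = [
                j for j in unvisited
                if math.dist(points[i], points[j]) <= max_gap
            ]
            for j in near:
                unvisited.remove(j)
            stack.extend(near)
            members.extend(near)
        groups.append([points[i] for i in sorted(members)])
    return groups


def enclosing_circle(points):
    """Return (cx, cy, radius) of a circle in the x-y plane, centred on
    the centroid, that covers every point. Simple, but not the smallest
    possible enclosing circle.
    """
    cx = sum(p[0] for p in points) / len(points)
    cy = sum(p[1] for p in points) / len(points)
    radius = max(math.hypot(p[0] - cx, p[1] - cy) for p in points)
    return cx, cy, radius
```

Each group's circle gives a coarse region of interest from which a silhouette outline could then be traced.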

After that, the system transforms the captured images into numerical values representing shape, color and density. When merged, these values give rise to a new image, which passes through a filter that determines whether or not it is a human silhouette.
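To make the idea of turning an image into numbers and then filtering it concrete, here is a minimal sketch. It is not the team's CSVM: a plain perceptron is swapped in as a much simpler trainable classifier, and the three features (bounding-box aspect ratio for shape, mean normalized brightness for color, fill ratio for density) are assumed definitions, since the article does not specify how these values are computed.

```python
def silhouette_features(mask, gray):
    """Return [shape, color, density] for one candidate region.

    mask: 2D list of 0/1 marking the region's pixels.
    gray: 2D list of intensities (0-255) with the same dimensions.
    All three feature definitions are assumptions for illustration.
    """
    pixels = [(y, x) for y, row in enumerate(mask) for x, v in enumerate(row) if v]
    if not pixels:
        return [0.0, 0.0, 0.0]
    ys = [p[0] for p in pixels]
    xs = [p[1] for p in pixels]
    height = max(ys) - min(ys) + 1
    width = max(xs) - min(xs) + 1
    shape = height / width                       # tall regions score > 1
    color = sum(gray[y][x] for y, x in pixels) / (255 * len(pixels))
    density = len(pixels) / (height * width)     # fraction of the box filled
    return [shape, color, density]


def train_perceptron(samples, labels, epochs=50, rate=0.1):
    """Fit a linear decision rule with the classic perceptron update.

    Stand-in for the CSVM; labels are 1 (human) or 0 (not human).
    """
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for _ in range(epochs):
        for x, y in zip(samples, labels):
            score = sum(w * v for w, v in zip(weights, x)) + bias
            error = y - (1 if score > 0 else 0)
            weights = [w + rate * error * v for w, v in zip(weights, x)]
            bias += rate * error
    return weights, bias


def is_human(features, weights, bias):
    """Apply the trained rule to one feature vector."""
    return sum(w * v for w, v in zip(weights, features)) + bias > 0
```

In this toy setup the classifier plays the role of the filter: it is trained on labelled feature vectors and afterwards returns a yes/no answer for each new candidate region.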

"Pattern recognition allows the descriptors to automatically distinguish objects containing information about the features that represent a human figure; this involves developing algorithms to solve problems of the descriptor and assign features to an object," explained the pattern recognition specialist at the UDG.

Ultimately, the goal is to keep working with the robot and train it to classify human shapes automatically, based on previous experience. The idea is to mimic the learning process of intelligent beings, allowing the robot to relate elements automatically.

Provided by Investigación y Desarrollo