Walk through buildings from your own device

Would you like to visit The Frick Collection art museum in New York City but can't find the time? No problem. You can take a 3-D virtual tour that will make you feel like you are there, thanks to Yasutaka Furukawa, PhD, assistant professor of computer science & engineering in the School of Engineering & Applied Science at Washington University in St. Louis.

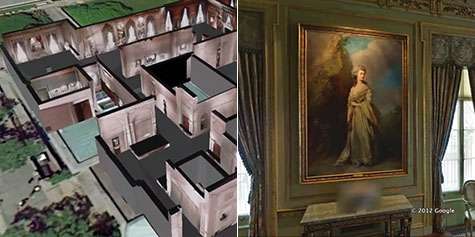

First, you see an aerial view of New York City. Then, you slowly zoom in on what looks like the museum with its roof removed. You fly into the first room, and suddenly you are looking at a very high-resolution version of the room's paintings, furniture and window treatments – so real, it looks more like a photograph than a computer-created model. You can continue through other rooms in the museum without ever leaving your chair.

Furukawa combines 3-D computer vision of indoor scenes with the capabilities of Google Maps and Google Earth to create a unique, high-resolution, photorealistic mapping experience of indoor spaces. Though he is starting with public spaces such as well-known museums, he intends to bring his technology to St. Louis – specifically, Washington University's Danforth Campus.

"I make the mapping experience of indoor space from the aerial viewpoint, which is good for navigation and exploration," Furukawa said. "But you really have to have the two layers. One is the ground level, or panorama, where you really look at the details and feel immersed, but also the high level, aerial viewpoint, where you can navigate and see the whole structure."

Furukawa joined the School of Engineering in September 2013 from Google Inc., where he launched MapsGL and Photo Tours. To complete the 3-D indoor maps, he integrates existing ground-level data of spaces from Google Maps with his own modeling application, which creates the aerial view. He has been working on indoor modeling since 2009.

"It's very difficult," he said. "Outdoor is easier because the photos already exist. When we start with the indoor mapping, we have to go to every single room, take pictures, model it, then show it from every viewpoint. Technically, it's really challenging."

But Furukawa is up for the challenge, devoting about 50 percent of his research to indoor mapping. He says the work could be used to enhance existing digital maps, such as Google Maps, helping to digitally recreate the world from scratch. It also could be used in visual effects for movies or computer games. He spends the rest of his time on outdoor mapping and dynamics, as well as teaching computer vision and computer graphics.

In June, Furukawa and Ricardo Cabral, a doctoral student at Carnegie Mellon University, presented a paper at the 2014 IEEE Conference on Computer Vision and Pattern Recognition describing a new method for recreating indoor scenes. They showed that by focusing on the dominant structure of a scene, such as the floor plan and walls, the reconstructions are simplified and keep the information relevant for navigation applications while remaining appealing to viewers. Existing methods focus on producing extremely precise 3-D models, which introduce noticeable rendering artifacts that viewers find unappealing.

Their method can be used in architecture and civil engineering, as well as for locating things and finding directions in airports, hospitals or malls.

Furukawa says the paper was the steppingstone to a new project: a 3-D visual model of the university's Danforth Campus, which he expects will take about five years to complete.

"I have plans to start with the campus, and if we can make that work, we would like to do other buildings outside of the campus, such as restaurants, cafes and museums," he says.

Provided by Washington University in St. Louis