British researchers create robot that can learn simple words by conversing with humans

In an attempt to replicate the early experiences of infants, researchers in England have created a robot that can learn simple words in minutes just by having a conversation with a human.

The work, published this week in the journal PLoS One, offers insight into how babies transition from babbling to speaking their first words.

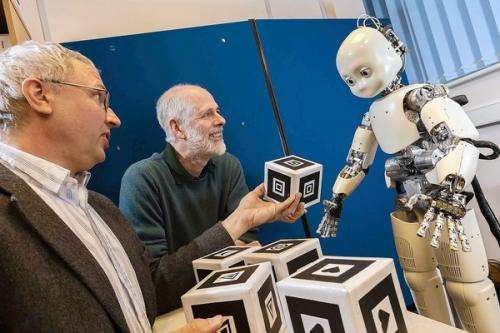

The three-foot-tall robot, named DeeChee, was built to produce any syllable in the English language. But it knew no words at the outset of the study, speaking only babble phrases like "een rain rain mahdl kross."

During the experiment, a human volunteer attempted to teach the robot simple words for shapes and colors by using them repeatedly in regular speech.

At first, all DeeChee could comprehend was an unsegmented stream of sounds. But DeeChee had been programmed to break up that stream into individual syllables and to store them in its memory. Once there, the syllables were ranked according to how often they came up in conversation; those that made up words like "red" and "green" were prized.

DeeChee also was designed to recognize words of encouragement, like "good" and "well done," from its human conversation partner. That feedback helped transform the robot's babble into coherent words, sometimes in as little as two minutes.
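The two mechanisms described above, frequency ranking and encouragement from the teacher, can be illustrated with a toy sketch. This is not the researchers' implementation: the class name `SyllableLearner`, the `praise_bonus` weight and the sample utterances are all hypothetical, and the sketch assumes the speech has already been split into syllables.

```python
from collections import Counter


class SyllableLearner:
    """Toy model: count heard syllables and boost the ones that earn praise.

    Hypothetical illustration only; not the method used in the study.
    """

    def __init__(self, praise_bonus=5):
        self.scores = Counter()           # syllable -> running score
        self.praise_bonus = praise_bonus  # assumed weight for encouragement

    def hear(self, syllables):
        """Add one count for each syllable in an already-segmented utterance."""
        self.scores.update(syllables)

    def reinforce(self, syllable):
        """Boost a syllable the teacher praised (e.g. with "well done")."""
        self.scores[syllable] += self.praise_bonus

    def top(self, n=3):
        """Return the n highest-scoring syllables, best first."""
        return [s for s, _ in self.scores.most_common(n)]


learner = SyllableLearner()
learner.hear(["a", "red", "box"])
learner.hear(["the", "red", "one"])
learner.hear(["red", "and", "green"])
learner.reinforce("green")  # teacher praised the robot's attempt at "green"

print(learner.top(2))
```

In this sketch, "red" rises through repetition alone, while "green" rises because a single piece of praise outweighs several plain repetitions.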

If repetition of sounds helps infants learn a language, then it's not surprising that our first words are often mainstays like "mama" and "dada." But why don't we start using common and simple words like "and" or "the" at the same time?

The answer, said study leader Caroline Lyon, a computer scientist at the University of Hertfordshire, is that the words that form the connective tissue of our language - words like "at," "with" and "of" - are spoken in hundreds of different ways, making them difficult for newbies to recognize. On the other hand, more concrete words like "house" or "blue" tend to be spoken in the same way nearly every time.

Because the study relied on the human volunteers speaking naturally, Lyon said it was crucial that the robot resemble a person. DeeChee was programmed to smile when it was ready to pay attention to its teacher and to stop smiling and blink when it needed a break. (Though DeeChee was designed to have a gender-neutral appearance, humans tended to treat it as a boy, according to the study.)

"When we asked people to talk to the robot as a small child, it seemed to come quite naturally to them," she said. "When they talk to a bit of disembodied software, you don't get the same response."

Michael Goldstein, a psychologist at Cornell University who has also used robots to study infant learning but wasn't involved in this study, said the work was highly innovative - and that if the researchers' theory about language acquisition is correct, they can use robots to prove it.

"If we really think we understand how infants learn," he said, "then we should be able to build a robot that can do it."

More information: Lyon C, Nehaniv CL, Saunders J (2012) Interactive Language Learning by Robots: The Transition from Babbling to Word Forms. PLoS ONE 7(6): e38236. doi:10.1371/journal.pone.0038236


(c)2012 Los Angeles Times

Distributed by MCT Information Services