January 26, 2012 report

Virtual Projection team puts iPhone writing on the wall (w/ video)

(PhysOrg.com) -- A collaborative team from the University of Calgary, the University of Munich, and Columbia University has figured out a way to use a smartphone to project the phone’s display onto external displays nearby. The team thinks of its approach, Virtual Projection, as "borrowing available display space in the environment." Dominikus Baur, Sebastian Boring, and Steven Feiner are behind Virtual Projection. Feiner is Professor of Computer Science at Columbia University, where he directs the Computer Graphics and User Interfaces Lab.

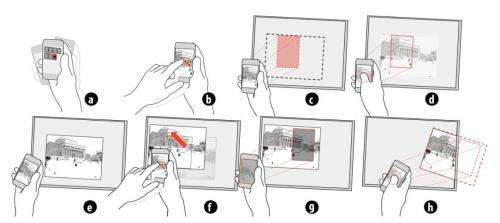

According to a formal description of their technique, Virtual Projection “is based on tracking a handheld device without an optical projector and allows selecting a target display on which to position, scale, and orient an item in a single gesture.”

The user holds the phone up to the target computer screen. The phone's camera captures and compares images from the screen to work out the phone's location (the system relies on tracking where the phone is being pointed), and the phone passes that information back to the computer over WiFi so the projection can be placed on the screen. Multiple users can place images on the same screen when they want the images to work together.

Baur, currently a postdoctoral fellow at the University of Calgary, took to his blog this week to elaborate on what the team set out to accomplish: “When we started with Virtual Projection the initial idea was just to artificially replicate the workings of an optical projector.”

The team wanted to come up with an easy solution and took up the smartphone concept. “While Virtual Projection has the clear downside that it requires a suitable display and does not work on any regular surface, we can at least fix some of the downsides of its real-world mode,” he said. “One of the first things was getting rid of distortion. When projectors are aimed towards a wall at an angle, keystone distortion can arise, warping the resulting image. Virtual projections can be freely adjusted when it comes to distortion and transformations of the resulting image.”
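For readers curious about what keystone distortion looks like numerically, the following is a minimal Python sketch, not the team's implementation. It assumes the standard textbook model in which an angled projection is a planar homography (a 3x3 matrix); the matrix `KEYSTONE`, the unit square, and both function names are hypothetical values chosen for illustration. It shows a square warping into a trapezoid and then being restored by applying the inverse transform, which is the kind of free adjustment a purely virtual projection allows.

```python
# Illustrative sketch only: a textbook planar homography,
# not code from the Virtual Projection prototype.

def apply_homography(h, point):
    """Map a 2D point through a 3x3 homography given as nested lists."""
    x, y = point
    w = h[2][0] * x + h[2][1] * y + h[2][2]  # perspective divisor
    return (
        (h[0][0] * x + h[0][1] * y + h[0][2]) / w,
        (h[1][0] * x + h[1][1] * y + h[1][2]) / w,
    )


def invert_3x3(m):
    """Invert a 3x3 matrix using the adjugate (cofactor) formula."""
    (a, b, c), (d, e, f), (g, h, i) = m
    det = a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)
    if det == 0:
        raise ValueError("matrix is singular")
    return [
        [(e * i - f * h) / det, (c * h - b * i) / det, (b * f - c * e) / det],
        [(f * g - d * i) / det, (a * i - c * g) / det, (c * d - a * f) / det],
        [(d * h - e * g) / det, (b * g - a * h) / det, (a * e - b * d) / det],
    ]


# Hypothetical keystone transform: the bottom row makes points with larger y
# shrink toward the origin, as if the projector were tilted.
KEYSTONE = [
    [1.0, 0.0, 0.0],
    [0.0, 1.0, 0.0],
    [0.0, 0.5, 1.0],
]

# Corners of the image to be "projected" (assumed unit square).
SQUARE = [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (0.0, 1.0)]

# What an angled optical projector would produce: a trapezoid.
warped = [apply_homography(KEYSTONE, p) for p in SQUARE]

# A virtual projection can undo the warp by applying the inverse transform.
corrected = [apply_homography(invert_3x3(KEYSTONE), p) for p in warped]

if __name__ == "__main__":
    print("warped:   ", warped)
    print("corrected:", corrected)
```

In this toy case the edge at y = 0 keeps its full width while the opposite edge shrinks to about two thirds of it, which is the trapezoid shape associated with keystone distortion. Because a virtual projection is rendered by software on the target display, such a correction is a matter of choosing which transform to apply rather than repositioning hardware.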

Components used for the team’s no-cables prototype included regular, unmodified iPhones, a server that runs on a Windows PC, and WiFi.

Their official paper on this project, “Virtual Projection: Exploring Optical Projection as a Metaphor for Multi-Device Interactions,” is to appear at the ACM SIGCHI Conference on Human Factors in Computing Systems (CHI 2012) that starts May 6 in Austin. CHI is an international conference on human-computer interaction.

The team is upbeat that it is on to something that, sooner rather than later, could become a pervasive, everyday opportunity for communication. "Imagine having a VP server running on every display that you encounter in your daily life and being able to 'borrow' the display space for a while (e.g., to look something up on a map)," said Baur. "Give it a few more years (and a friendly industry consortium) and this could become reality."

More information:

bowr.de/blog/?p=319

do.minik.us/virtual-projection … al-projection_pp.pdf

© 2011 PhysOrg.com