Reconstruct Mars automatically in minutes

A computer system under development can automatically combine images of the Martian surface, captured by landers or rovers, to reproduce a three-dimensional view of the red planet. The resulting model can be viewed from any angle, giving astronomers a realistic and immersive impression of the landscape. The development was presented at the European Planetary Science Congress in Potsdam by Dr Michal Havlena.

“The feeling of ‘being right there’ will give scientists a much better understanding of the images. The only input we need are the captured raw images and the internal camera calibration. After minutes of computation on a standard PC, a three dimensional model of the captured scene is obtained,” said Dr Havlena.

The growing volume of imagery from Mars is making the manual image processing techniques used so far nearly unworkable. The new automated method, which allows fast, high-quality image processing, was developed at the Center for Machine Perception of the Czech Technical University in Prague, under the supervision of Tomas Pajdla, as part of the EU FP7 project PRoVisG.

From a technical point of view, the image processing consists of three stages. The first step is determining the image order. If the input images are unordered, i.e. they do not form a sequence but are still connected in some way, a state-of-the-art image indexing technique finds the images taken by cameras observing the same part of the scene. First, up to a thousand features are detected in each image and “translated” into visual words, according to a visual vocabulary trained on images from Mars. Then, starting from an arbitrary image, the next image in the order is the one that shares the highest number of visual words with the previous image.
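The ordering rule described above can be sketched in a few lines of Python. This is only an illustration under simplifying assumptions, not the project's code: each image is reduced to a set of integer visual-word IDs, feature detection and the trained vocabulary are skipped entirely, and the function name `order_images` and the alphabetical tie-breaking are hypothetical choices.

```python
def order_images(visual_words, start):
    """Greedily order images by shared visual words.

    visual_words maps an image name to the set of visual-word IDs
    found in that image (assumed to be computed elsewhere).
    """
    order = [start]
    remaining = set(visual_words) - {start}
    while remaining:
        current = visual_words[order[-1]]
        # Pick the unvisited image sharing the most words with the
        # previous one; sorting makes ties resolve alphabetically.
        best = max(sorted(remaining),
                   key=lambda name: len(current & visual_words[name]))
        order.append(best)
        remaining.remove(best)
    return order


# Toy data: four images described by made-up visual-word IDs.
words = {
    "a": {1, 2, 3, 4},
    "b": {3, 4, 5, 6},
    "c": {5, 6, 7, 8},
    "d": {1, 2, 3, 9},
}
order = order_images(words, "a")
print(order)
```

In this toy example, image "d" follows "a" because the two share three words, more than any other candidate.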

The second step of the pipeline, the so-called “structure-from-motion computation”, determines the accurate camera positions and rotations in three-dimensional space. For each pair of neighboring images, it is enough to find five corresponding features to obtain the relative camera pose between the two images.
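Why five correspondences suffice can be illustrated with the epipolar constraint. With calibrated cameras, the relative pose is encoded in an essential matrix E = [t]×R, which has five degrees of freedom (three for the rotation, two for the translation direction, since the overall scale cannot be observed), and every matched feature pair contributes one equation x2ᵀ E x1 = 0. The sketch below does not implement a five-point solver; it only builds E from an assumed, made-up pose and checks that five synthetic correspondences satisfy the constraint. All names and values are illustrative.

```python
import math


def skew(t):
    """Cross-product matrix [t]x, so that skew(t) @ v == t x v."""
    tx, ty, tz = t
    return [[0.0, -tz, ty], [tz, 0.0, -tx], [-ty, tx, 0.0]]


def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def matvec(m, v):
    return [sum(m[i][k] * v[k] for k in range(3)) for i in range(3)]


def rotation_y(angle):
    c, s = math.cos(angle), math.sin(angle)
    return [[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]]


def project(point):
    """Normalized image coordinates (internal calibration removed)."""
    return [point[0] / point[2], point[1] / point[2], 1.0]


def epipolar_residual(E, x1, x2):
    """Value of x2^T E x1; zero for a perfect correspondence."""
    Ex1 = matvec(E, x1)
    return sum(x2[i] * Ex1[i] for i in range(3))


# Assumed relative pose: a point X in camera 1 maps to R X + t in camera 2.
R = rotation_y(math.radians(10.0))
t = [1.0, 0.0, 0.2]
E = matmul(skew(t), R)

# Five synthetic scene points in front of both cameras.
points = [[0.5, -0.2, 4.0], [-1.0, 0.3, 6.0], [0.2, 0.8, 5.0],
          [1.5, -0.6, 7.0], [-0.4, -0.9, 3.0]]

residuals = []
for X in points:
    X2 = [a + b for a, b in zip(matvec(R, X), t)]
    residuals.append(epipolar_residual(E, project(X), project(X2)))

print(max(abs(r) for r in residuals))
```

A real solver works in the opposite direction: it takes the five matched features and recovers the E, and hence the rotation and translation direction, consistent with all five equations.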

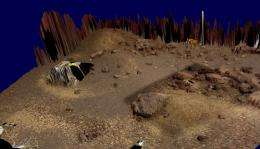

The last and most important step is the so-called “dense 3D model generation” of the captured scene, which essentially creates and fuses depth maps of the Martian surface. To do this, the method uses the intensity disparities (parallaxes) between images taken at two distinct camera positions, the positions having been determined in the second step.
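The link between parallax and depth can be sketched for the simplest case. The example assumes a rectified image pair, where depth follows from Z = f · B / d, with f the focal length in pixels, B the distance between the two camera positions and d the disparity in pixels. The median-based fusion is likewise an assumption chosen for brevity; the article does not say how the pipeline fuses its depth maps, and the function names are hypothetical.

```python
from statistics import median


def depth_from_disparity(focal_px, baseline_m, disparity_px):
    """Depth in metres for one pixel of a rectified image pair.

    Returns None when the disparity is not positive, i.e. when no
    usable parallax was measured for that pixel.
    """
    if disparity_px <= 0:
        return None
    return focal_px * baseline_m / disparity_px


def fuse_depths(estimates):
    """Fuse several depth estimates of the same surface point.

    Uses the median of the valid values (an illustrative choice).
    """
    valid = [d for d in estimates if d is not None]
    return median(valid) if valid else None


# Made-up numbers: 1000 px focal length, 0.2 m between camera positions.
single = depth_from_disparity(1000.0, 0.2, 50.0)
fused = fuse_depths([4.0, None, 4.2, 3.9])
print(single, fused)
```

Larger disparities correspond to closer surface points, which is why nearby rocks shift more between two views than the distant horizon.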

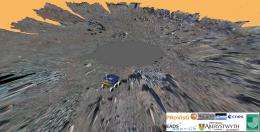

“The pipeline has already been used successfully to reconstruct a three dimensional model from nine images captured by the Phoenix Mars Lander, which were obtained just after performing some digging operation on the Mars surface,” said Dr Havlena.

“The challenge is now to reconstruct larger parts of the surface of the red planet, captured by the Mars Exploration Rovers Spirit and Opportunity,” concluded Dr Havlena.

Provided by European Planetary Science Congress