The five biggest threats to human existence

In the daily hubbub of current "crises" facing humanity, we forget about the many generations we hope are yet to come. Not those who will live 200 years from now, but 1,000 or 10,000 years from now. I use the word "hope" because we face risks, called existential risks, that threaten to wipe out humanity. These are risks not just of big disasters, but of disasters that could end history.

Not everyone has ignored the long future though. Mystics like Nostradamus have regularly tried to calculate the end of the world. HG Wells tried to develop a science of forecasting and famously depicted the far future of humanity in his book The Time Machine. Other writers built other long-term futures to warn, amuse or speculate.

But had these pioneers or futurologists not thought about humanity's future, it would not have changed the outcome. There wasn't much that human beings in their place could have done to save us from an existential crisis or even cause one.

We are in a more privileged position today. Human activity has been steadily shaping the future of our planet. And even though we are far from controlling natural disasters, we are developing technologies that may help mitigate, or at least deal with, them.

Future imperfect

Yet these risks remain understudied. There is a sense of powerlessness and fatalism about them. People have been talking about apocalypses for millennia, but few have tried to prevent them. Humans are also bad at doing anything about problems that have not occurred yet (partially because of the availability heuristic – the tendency to overestimate the probability of events we know examples of, and underestimate events we cannot readily recall).

If humanity becomes extinct, at the very least the loss is equivalent to the loss of all living individuals and the frustration of their goals. But the loss would probably be far greater than that. Human extinction means the loss of meaning generated by past generations, the lives of all future generations (and there could be an astronomical number of future lives) and all the value they might have been able to create. If consciousness or intelligence are lost, it might mean that value itself becomes absent from the universe. This is a huge moral reason to work hard to prevent existential threats from becoming reality. And we must not fail even once in this pursuit.

With that in mind, I have selected what I consider the five biggest threats to humanity's existence. But there are caveats that must be kept in mind, for this list is not final.

Over the past century we have discovered or created new existential risks – supervolcanoes were discovered in the early 1970s, and before the Manhattan Project nuclear war was impossible – so we should expect others to appear. Also, some risks that look serious today might disappear as we learn more. The probabilities also change over time – sometimes because we are concerned about the risks and fix them.

Finally, just because something is possible and potentially hazardous doesn't mean it is worth worrying about. There are some risks we cannot do anything at all about, such as gamma-ray bursts, which result from violent stellar events such as the collapse of massive stars. But if we learn we can do something, the priorities change. For instance, with sanitation, vaccines and antibiotics, pestilence went from an act of God to bad public health.

1. Nuclear war

While only two nuclear weapons have been used in war so far – at Hiroshima and Nagasaki in World War II – and nuclear stockpiles are down from the peak they reached in the Cold War, it is a mistake to think that nuclear war is impossible. In fact, it might not be improbable.

The Cuban Missile Crisis was very close to turning nuclear. If we assume one such event every 69 years and a one in three chance that it might go all the way to being nuclear war, the chance of such a catastrophe comes to about one in 200 per year.

Worse still, the Cuban Missile Crisis was only the best-known case. The history of Soviet-US nuclear deterrence is full of close calls and dangerous mistakes. The actual probability has changed depending on international tensions, but it seems implausible that the chances would be much lower than one in 1,000 per year.
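The arithmetic behind these figures can be checked with a minimal Python sketch. The 69-year interval and the one-in-three escalation chance come from the text; the helper `cumulative_risk` and the 100-year horizon are illustrative assumptions, and the calculation treats every year as independent with the same risk, which real international tensions would not respect.

```python
from fractions import Fraction

# Figures taken from the text: one crisis of this kind every 69 years,
# and a one-in-three chance that such a crisis turns into nuclear war.
crisis_rate_per_year = Fraction(1, 69)
escalation_chance = Fraction(1, 3)

# Annual probability of nuclear war under those two assumptions.
annual_risk = crisis_rate_per_year * escalation_chance  # exactly 1/207


def cumulative_risk(annual_probability: float, years: int) -> float:
    """Chance of at least one event in `years` (hypothetical helper).

    Assumes every year is independent and carries the same risk.
    """
    return 1.0 - (1.0 - annual_probability) ** years


print(f"Annual risk: 1 in {1 / annual_risk}")
for label, p in [("1 in 207", float(annual_risk)), ("1 in 1,000", 1 / 1000)]:
    print(f"{label} per year -> {cumulative_risk(p, 100):.1%} over a century")
```

Under these simplifying assumptions, the one-in-207 figure compounds to a bit under 40% over a century, and even the one-in-1,000 figure compounds to a bit under 10%.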

A full-scale nuclear war between major powers would kill hundreds of millions of people directly or through the near aftermath – an unimaginable disaster. But that is not enough to make it an existential risk.

Similarly the hazards of fallout are often exaggerated – potentially deadly locally, but globally a relatively limited problem. Cobalt bombs were proposed as a hypothetical doomsday weapon that would kill everybody with fallout, but are in practice hard and expensive to build. And they are physically just barely possible.

The real threat is nuclear winter – that is, soot lofted into the stratosphere causing a multi-year cooling and drying of the world. Modern climate simulations show that it could preclude agriculture across much of the world for years. If this scenario occurs billions would starve, leaving only scattered survivors that might be picked off by other threats such as disease. The main uncertainty is how the soot would behave: depending on the kind of soot the outcomes may be very different, and we currently have no good ways of estimating this.

2. Bioengineered pandemic

Natural pandemics have killed more people than wars. However, natural pandemics are unlikely to be existential threats: there are usually some people resistant to the pathogen, and the offspring of survivors would be more resistant. Evolution also does not favor parasites that wipe out their hosts, which is why syphilis went from a virulent killer to a chronic disease as it spread in Europe.

Unfortunately we can now make diseases nastier. One of the more famous examples is how the introduction of an extra gene in mousepox – the mouse version of smallpox – made it far more lethal and able to infect vaccinated individuals. Recent work on bird flu has demonstrated that the contagiousness of a disease can be deliberately boosted.

Right now the risk of somebody deliberately releasing something devastating is low. But as biotechnology gets better and cheaper, more groups will be able to make diseases worse.

Most work on bioweapons has been done by governments looking for something controllable, because wiping out humanity is not militarily useful. But there are always some people who might want to do things because they can. Others have higher purposes. For instance, the Aum Shinrikyo cult tried to hasten the apocalypse using bioweapons besides their more successful nerve gas attack. Some people think the Earth would be better off without humans, and so on.

The number of fatalities from bioweapon attacks and epidemic outbreaks looks like it has a power-law distribution – most attacks have few victims, but a few kill many. Given current numbers, the risk of a global pandemic from bioterrorism seems very small. But this is just bioterrorism: governments have killed far more people than terrorists with bioweapons (up to 400,000 may have died from the WWII Japanese biowar program). And as technology gets more powerful in the future, nastier pathogens become easier to design.
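To see what a power-law distribution implies, the following Python sketch draws synthetic event sizes from a Pareto distribution. Everything in it is an assumption made for illustration: the exponent `ALPHA`, the number of simulated events and the seed are hypothetical, and none of the numbers are fitted to real casualty data.

```python
import random
import statistics

# Purely synthetic illustration: neither the exponent nor the sample size
# comes from the article or from real casualty records.
ALPHA = 1.5          # hypothetical tail exponent (smaller means a heavier tail)
NUM_EVENTS = 10_000  # hypothetical number of simulated events

rng = random.Random(0)  # fixed seed so the run is repeatable
# paretovariate(alpha) draws values >= 1 from a Pareto (power-law) distribution.
sizes = sorted(rng.paretovariate(ALPHA) for _ in range(NUM_EVENTS))

median_size = statistics.median(sizes)
mean_size = statistics.fmean(sizes)

# Share of the total accounted for by the largest 1% of events.
top_count = NUM_EVENTS // 100
top_1_percent_share = sum(sizes[-top_count:]) / sum(sizes)

print(f"median size: {median_size:.2f}, mean size: {mean_size:.2f}")
print(f"share of total from the largest 1% of events: {top_1_percent_share:.1%}")
```

The typical (median) event is small, while the mean is pulled upward by a handful of very large events. This is why a record of mostly small attacks says little about how bad the worst case could be.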

3. Superintelligence

Intelligence is very powerful. A tiny increment in problem-solving ability and group coordination is why we left the other apes in the dust. Now their continued existence depends on human decisions, not what they do. Being smart is a real advantage for people and organisations, so there is much effort in figuring out ways of improving our individual and collective intelligence: from cognition-enhancing drugs to artificial-intelligence software.

The problem is that intelligent entities are good at achieving their goals, but if the goals are badly set they can use their power to cleverly achieve disastrous ends. There is no reason to think that intelligence itself will make something behave nicely and morally. In fact, it is possible to prove that certain types of superintelligent systems would not obey moral rules even if they were true.

Even more worrying is that in trying to explain things to an artificial intelligence we run into profound practical and philosophical problems. Human values are diffuse, complex things that we are not good at expressing, and even if we could do that we might not understand all the implications of what we wish for.

Software-based intelligence may very quickly go from below human to frighteningly powerful. The reason is that it may scale in different ways from biological intelligence: it can run faster on faster computers, parts can be distributed on more computers, different versions tested and updated on the fly, new algorithms incorporated that give a jump in performance.

It has been proposed that an "intelligence explosion" is possible when software becomes good enough at making better software. Should such a jump occur there would be a large difference in potential power between the smart system (or the people telling it what to do) and the rest of the world. This has clear potential for disaster if the goals are badly set.

The unusual thing about superintelligence is that we do not know if rapid and powerful intelligence explosions are possible: maybe our current civilisation as a whole is improving itself at the fastest possible rate. But there are good reasons to think that some technologies may speed things up far faster than current societies can handle. Similarly we do not have a good grip on just how dangerous different forms of superintelligence would be, or what mitigation strategies would actually work. It is very hard to reason about future technology we do not yet have, or intelligences greater than ourselves. Of the risks on this list, this is the one most likely to either be massive or just a mirage.

This is a surprisingly under-researched area. Even in the 50s and 60s when people were extremely confident that superintelligence could be achieved "within a generation", they did not look much into safety issues. Maybe they did not take their predictions seriously, but more likely is that they just saw it as a remote future problem.

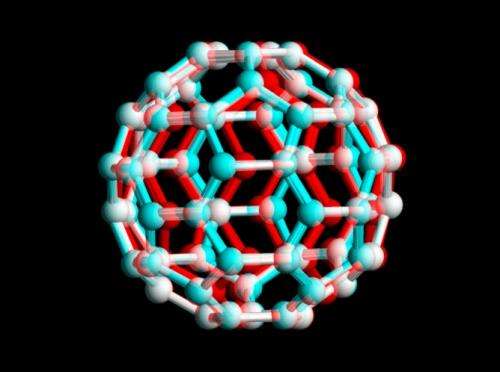

4. Nanotechnology

Nanotechnology is the control over matter with atomic or molecular precision. That is in itself not dangerous – instead, it would be very good news for most applications. The problem is that, like biotechnology, increasing power also increases the potential for abuses that are hard to defend against.

The big problem is not the infamous "grey goo" of self-replicating nanomachines eating everything. That would require clever design for this very purpose. It is tough to make a machine replicate: biology is much better at it, by default. Maybe some maniac would eventually succeed, but there is plenty of lower-hanging fruit on the destructive technology tree.

The most obvious risk is that atomically precise manufacturing looks ideal for rapid, cheap manufacturing of things like weapons. In a world where any government could "print" large amounts of autonomous or semi-autonomous weapons (including facilities to make even more) arms races could become very fast – and hence unstable, since doing a first strike before the enemy gets too large an advantage might be tempting.

Weapons can also be small, precision things: a "smart poison" that acts like a nerve gas but seeks out victims, or ubiquitous "gnatbot" surveillance systems for keeping populations obedient, seem entirely possible. Also, there might be ways of getting nuclear proliferation and climate engineering into the hands of anybody who wants them.

We cannot judge the likelihood of existential risk from future nanotechnology, but it looks like it could be disruptive just because it can give us whatever we wish for.

5. Unknown unknowns

The most unsettling possibility is that there is something out there that is very deadly, and we have no clue about it.

The silence in the sky might be evidence for this. Is the absence of aliens because life or intelligence is extremely rare, or because intelligent life tends to get wiped out? If there is a future Great Filter, it must have been noticed by other civilisations too, and even that didn't help.

Whatever the threat is, it would have to be something that is nearly unavoidable even when you know it is there, no matter who and what you are. We do not know about any such threats (none of the others on this list work like this), but they might exist.

Note that just because something is unknown it doesn't mean we cannot reason about it. In a remarkable paper Max Tegmark and Nick Bostrom show that a certain set of risks must be less than one chance in a billion per year, based on the relative age of Earth.

You might wonder why climate change or meteor impacts have been left off this list. Climate change, no matter how scary, is unlikely to make the entire planet uninhabitable (but it could compound other threats if our defences to it break down). Meteors could certainly wipe us out, but we would have to be very unlucky. The average mammalian species survives for about a million years. Hence, the background natural extinction rate is roughly one in a million per year. This is much lower than the nuclear-war risk, which after 70 years is still the biggest threat to our continued existence.
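The gap between these annual rates becomes clearer over long horizons. The Python sketch below compares the figures quoted in this article. The helper `survival_probability` is a hypothetical name, and the model assumes a constant, independent risk every year. It also compares how often each event occurs rather than how often humanity goes extinct, since a nuclear war would not necessarily end the species.

```python
import math

# Annual probabilities quoted in the article.
ANNUAL_RISK = {
    "nuclear war (crisis-based estimate)": 1 / 200,
    "nuclear war (lower figure)": 1 / 1000,
    "natural background extinction": 1 / 1_000_000,
}


def survival_probability(annual_risk: float, years: float) -> float:
    """Chance of no event over `years` (hypothetical helper).

    Assumes a constant, independent annual risk.
    """
    # log1p keeps precision when annual_risk is tiny.
    return math.exp(years * math.log1p(-annual_risk))


for name, p in ANNUAL_RISK.items():
    thousand = survival_probability(p, 1_000)
    million = survival_probability(p, 1_000_000)
    print(f"{name}: no event in 1,000 years {thousand:.3f}, "
          f"in 1,000,000 years {million:.3f}")
```

At one in a million per year, the chance of getting through a million years without the event is a little over one in three. At either nuclear-war rate, the chance of a million years with no such war is effectively zero, which is the sense in which the man-made risk dwarfs the natural one.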

The availability heuristic makes us overestimate risks that are often in the media, and discount unprecedented risks. If we want to be around in a million years we need to correct that.

Provided by The Conversation

This story is published courtesy of The Conversation (under Creative Commons-Attribution/No derivatives).
