How the 'Great Filter' could affect tech advances in space

"One of the main things we're focused on is the notion of existential risk, getting a sense of what the probability of human extinction is," said Andrew Snyder-Beattie, who recently wrote a piece on the "Great Filter" for Ars Technica.

As Snyder-Beattie explained in the article, the "Great Filter" is a response to the question of why we can't see any alien civilizations. It deals with issues similar to those of the Drake Equation, which estimates how many civilizations capable of communication might exist beyond Earth, and the Fermi Paradox, which asks why, if such civilizations are likely, we see no sign of them.

Simply put, the idea is that if a civilization keeps expanding (especially at the technological pace we humans have experienced), it wouldn't take long, relative to the lifespan of the universe, for its artificial processes to become visible through our own telescopes. This holds even if nothing can travel faster than light. Since we see no such signs, something may be preventing civilizations from getting that far. That hypothetical barrier is the Great Filter, and some candidates for what it might be are described below.

Here are a few possibilities for what the filter might be, from Snyder-Beattie and from Robin Hanson, who coined the term "Great Filter" in 1996.

'Rare Earth' hypothesis

Maybe Earth is the only inhabited planet in the universe. Some might assume life must be relatively common because it arose here, but Snyder-Beattie points out that observation selection effects complicate this reasoning: we could only ever find ourselves on a planet where life did arise. With a sample size of one (ourselves as the only observers), it is hard to estimate how likely life is to arise, and we could very well be alone. In one sense, that's a "comforting" thought, he added, because it could mean there is no single catastrophic event that befalls all civilizations.

Advanced civilizations are hard to reach

Hanson doesn't find this one convincing. One candidate step would be going from modestly intelligent mammals to human-like abilities, and another would be going from human-like abilities to an advanced civilization. But it took only a few million years to go from modestly intelligent animals to humans. "If you killed all humans on Earth, but you left life on Earth, and the animals have big brains, it wouldn't necessarily be that long before it came back again," Hanson said. Some of the earlier steps would have taken much longer, though, including the emergence of multicellular animals and the emergence of brains, each of which took roughly a billion years.

'The Berserker Scenario'

In this scenario, powerful aliens lie in wait to destroy any visible intelligence that appears. Hanson doubts this would hold up, because if there were multiple berserker species, they would end up opposing one another. "As an equilibrium, you'd have these competing teams of these berserkers all trying to smash each other."

Maybe natural activities are masking the extraterrestrials

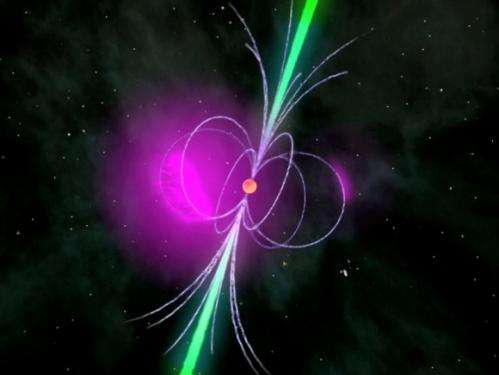

Maybe the large-scale activities of beings beyond Earth just happen to look exactly like natural phenomena, as if the beings were not there at all. Hanson said this seems rather unlikely, since it would be a "remarkable coincidence" if advanced artificial processes were actually responsible for all the astronomical phenomena we currently attribute to natural causes, from pulsars to dark matter.

A natural disaster

Simply living on Earth carries inherent risk. One asteroid strike, a burst of radiation from a nearby supernova, or a large enough volcanic eruption could end civilization as we know it, and possibly much of life itself. But the consensus is that we have a track record of surviving these things, and it's unlikely that all life would be destroyed forever. "If those humans who were left, it took them 10,000 years to come back to civilization, that's hardly a blink of an eye, that doesn't do it," Hanson said. Another point is that, statistically speaking, although these events happen, they don't happen often. "It is unlikely one of these very rare events would happen in the next century or 300 years," Snyder-Beattie said.

A 'fundamental technology' that ends civilization

This category is entirely speculative. For example, climate change could be the catalyst, although it would seem extraordinary for every civilization to succumb to such similar political failures, Snyder-Beattie said. More general possibilities include the rise of machine intelligence or distributed, self-replicating biotechnology. Hanson, however, points out that even these have their limitations: presumably it would then be the robots that head out into the cosmos and leave traces of civilization themselves.

The solution

For the fate of our own civilization, the key is to focus on what we can control, Hanson says. This means drawing up a list of the things that could kill us, however theoretical, and then working on ways to address them.

The question of why other civilizations are not visible remains open, however. What are your thoughts about the Great Filter? Let us know in the comments.

Source: Universe Today