Fujitsu develops technology enabling high-speed search while accumulating data at 40 Gbps

Fujitsu Laboratories announced the development of the industry's first software technology that can perform high-speed searches on information even as it is being accumulated at a speed of 40 Gbps. While this has been difficult to do in the past without specialized network analysis hardware, Fujitsu Laboratories' new technology can be implemented entirely through software and conventional hardware.

As smart devices become increasingly ubiquitous, technologies that can detect what is going on in a network by accumulating and analyzing all communications packets are becoming increasingly important to improving the quality of communications networks. In the past, attempts to implement this kind of technology in software have been constrained by the effects of exclusive access control of memory or disks, making it difficult to perform detailed analyses on high-speed communications at the same time as data are being accumulated.

Now Fujitsu Laboratories has devised a way to accumulate and search communications data running as fast as 40 Gbps using only software and conventional hardware. This method can perform detailed analyses on communications data during each process, from capture processing to accumulation, without halting the flow of data. This is made possible by non-blocking data processing technology: packets are bundled into data volumes appropriate to each process, so exclusive access control is not required.

This technology makes it possible to catch, inexpensively and with certainty, network bottlenecks or even external cyber-attacks through communications breaches that sampling of partial data or short-term observations might miss. This will work to improve network quality, stabilize datacenter operations, and enhance security.

This technology will be exhibited at Fujitsu Forum 2014, running May 15-16 at Tokyo International Forum in Tokyo.

Background

With the widespread use of smartphones, tablets, and other connected devices, communications network quality is seen as increasingly important. In reality, however, the complex traffic patterns and burgeoning volumes of data on networks have led to an increase in unanticipated network failures and data breaches over networks. In order to trace the causes of these failures and to strengthen security, there is a need for detailed event monitoring of the traffic flowing over the network and the devices connected to it, as well as a need to preserve data traffic as evidence. Rather than partial data, such as statistical information or samples, there is a need for the ability to accumulate all communications data and immediately access the desired packet data so that sophisticated analyses can be performed on it.

Issues

In order to accumulate and analyze communications data over a network, the important aspects are the performance of capture processing on the data flow, the storage of accumulated data on disks, and the ability to search for target data within the accumulated communications data. The level of processing performance required rises as communications speeds continue to accelerate.

Because communications data is constantly being transmitted, the data must be captured and accumulated at a pace no slower than the maximum speed of transmission, which conventionally has required the use of specialized hardware. In addition, in order to perform high-speed searches on individual packets separated out from the communications data, the arrival times of the packets need to be recorded and the packets need to be processed for collection in accordance with the desired search criteria.
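To give a sense of what "no slower than the maximum speed of transmission" means at 40 Gbps, the following back-of-envelope sketch computes the byte rate, a worst-case packet rate, and an upper bound on hourly volume. The worst-case figure assumes an Ethernet link carrying only minimum-size 64-byte frames, each with 20 bytes of preamble and inter-frame gap on the wire; that assumption and the function names are illustrative and do not come from Fujitsu's announcement.

```python
# Back-of-envelope figures for a fully loaded 40 Gbps link.
LINK_BPS = 40_000_000_000  # 40 Gbps line rate, in bits per second

# Assumption: minimum-size Ethernet frame (64 bytes) plus 20 bytes of
# per-frame wire overhead (8-byte preamble + 12-byte inter-frame gap).
MIN_FRAME_WIRE_BYTES = 64 + 20


def bytes_per_second(link_bps: int) -> float:
    """Raw byte rate of the link."""
    return link_bps / 8


def max_packets_per_second(link_bps: int,
                           wire_bytes: int = MIN_FRAME_WIRE_BYTES) -> float:
    """Worst-case packet rate when every frame is minimum size."""
    return link_bps / (wire_bytes * 8)


def terabytes_per_hour(link_bps: int) -> float:
    """Upper bound on data volume per hour, in decimal terabytes."""
    return bytes_per_second(link_bps) * 3600 / 1e12


if __name__ == "__main__":
    print(f"{bytes_per_second(LINK_BPS) / 1e9:.1f} GB/s")
    print(f"{max_packets_per_second(LINK_BPS) / 1e6:.2f} Mpps")
    print(f"{terabytes_per_hour(LINK_BPS):.1f} TB/hour")
```

Under these assumptions the capture path has to sustain about 5 GB/s and up to roughly 59.5 million packets per second, and several hours of accumulation reaches tens of terabytes.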

About the Technology

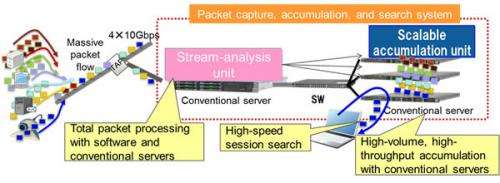

Fujitsu Laboratories has now devised a system for performing high-speed capture, accumulation, and searches at speeds of 40 Gbps using only software and conventional hardware (Figure 1).

The scalable accumulation unit uses a combination of multiple conventional servers for storage, enabling scalable disk capacity and parallel-input performance.

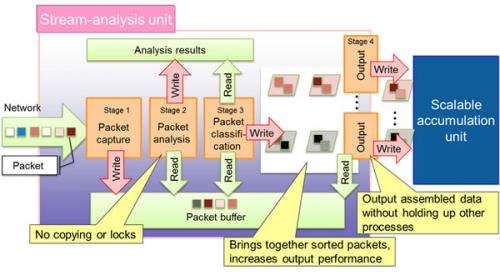

The stream-analysis unit is divided into a number of stages that are executed in sequence, enabling high-speed sequential processing of such tasks as packet analysis (Figure 2).

As they are captured, packets are sorted based on search criteria for accumulation, enabling rapid searches according to logical units, such as conversations or communications sessions per device.
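The idea of sorting packets by search criteria at capture time can be illustrated with a small in-memory sketch. It assumes a conversation is identified by an unordered pair of endpoint addresses; the `Packet` and `SessionStore` names and the keying scheme are hypothetical and are not taken from Fujitsu's system.

```python
from collections import defaultdict
from typing import NamedTuple


class Packet(NamedTuple):
    ts: float       # arrival time in seconds
    src: str        # sender address
    dst: str        # receiver address
    payload: bytes


def session_key(a: str, b: str) -> tuple:
    """Direction-independent key, so both halves of a conversation match."""
    return tuple(sorted((a, b)))


class SessionStore:
    """Groups packets by conversation as they arrive (hypothetical sketch)."""

    def __init__(self) -> None:
        self._by_session = defaultdict(list)

    def add(self, pkt: Packet) -> None:
        # Sorting happens at capture time, not at search time.
        self._by_session[session_key(pkt.src, pkt.dst)].append(pkt)

    def conversation(self, a: str, b: str) -> list:
        """Return one conversation's packets without scanning the rest."""
        return list(self._by_session.get(session_key(a, b), []))


store = SessionStore()
store.add(Packet(0.1, "10.0.0.1", "10.0.0.2", b"hello"))
store.add(Packet(0.2, "10.0.0.3", "10.0.0.4", b"other"))
store.add(Packet(0.3, "10.0.0.2", "10.0.0.1", b"reply"))
found = store.conversation("10.0.0.1", "10.0.0.2")
```

Because the grouping is done on arrival, a search for one conversation touches only that conversation's packets, which is the property the paragraph above describes.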

Technology features

Eliminates exclusive access control locks, increases disk input/output performance

Packets are classified by such criteria as time and sender. Packets are held in buffers, and an output process is performed for each buffer. Because each buffer's output is handled independently of other operations, the number of disk accesses is reduced and there is no need to wait for other output processing tasks. This also suppresses data overflows caused by differences in processing times between stages.
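A minimal sketch of the buffering idea follows, assuming packets are classified by a one-second time window and sender, and that a buffer is written out once it holds a fixed number of packets. The `BufferedSink` class, its thresholds, and the in-memory list standing in for the disk are all assumptions for illustration. The sketch is single-threaded, so it shows only the batching and the independence of buffers, not the lock-free concurrency of the actual system.

```python
from collections import defaultdict


class BufferedSink:
    """Per-class buffers, each flushed on its own (hypothetical sketch)."""

    def __init__(self, window_s: float = 1.0, flush_at: int = 4) -> None:
        self.window_s = window_s    # width of a time bucket, in seconds
        self.flush_at = flush_at    # packets per buffer before writing
        self.buffers = defaultdict(list)
        self.writes = []            # stand-in for disk: one entry per write

    def put(self, ts: float, sender: str, data: bytes) -> None:
        # Classify by (time window, sender), as described in the text.
        key = (int(ts // self.window_s), sender)
        buf = self.buffers[key]
        buf.append(data)
        if len(buf) >= self.flush_at:
            self.flush(key)

    def flush(self, key) -> None:
        # Only this buffer is written; other buffers are not touched.
        buf = self.buffers.pop(key, None)
        if buf:
            self.writes.append((key, b"".join(buf)))

    def flush_all(self) -> None:
        for key in list(self.buffers):
            self.flush(key)


sink = BufferedSink(flush_at=2)
sink.put(0.1, "a", b"x")
sink.put(0.2, "b", b"y")
sink.put(0.3, "a", b"z")   # second packet for sender "a": triggers one write
```

Here three packets produce a single write so far, and sender "b" is unaffected by the flush of sender "a", which mirrors how batching per buffer cuts disk accesses without one output task waiting on another.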

Avoids copying data

The stream-analysis unit, which analyzes packets, does not copy packets as they are transferred between stages. Instead, it is designed so that each stage can reference the analysis results directly. This obviates the need for copying and locks.
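The following sketch shows one way stages can share a packet and its analysis results by reference rather than by copy. It uses Python's built-in `memoryview` so that every packet record is a window onto a single capture buffer; the `PacketRecord` type, the two stage functions, and the 64-byte threshold are hypothetical and say nothing about how Fujitsu's stages are actually implemented.

```python
from dataclasses import dataclass, field


@dataclass
class PacketRecord:
    data: memoryview                              # window onto the capture buffer
    results: dict = field(default_factory=dict)   # shared analysis results


def parse_stage(rec: PacketRecord) -> PacketRecord:
    rec.results["length"] = len(rec.data)
    return rec  # the same object is handed on; nothing is copied


def classify_stage(rec: PacketRecord) -> PacketRecord:
    # Reads the earlier stage's result by reference.
    rec.results["kind"] = "small" if rec.results["length"] < 64 else "large"
    return rec


def run_pipeline(capture: bytearray, offsets) -> list:
    view = memoryview(capture)
    records = []
    for start, end in offsets:
        rec = PacketRecord(view[start:end])  # slicing a memoryview shares memory
        for stage in (parse_stage, classify_stage):
            rec = stage(rec)
        records.append(rec)
    return records


capture = bytearray(b"a" * 10 + b"b" * 100)
recs = run_pipeline(capture, [(0, 10), (10, 110)])
```

Each record still points at the original `capture` bytes after passing through both stages, so a later stage sees both the packet and the earlier results without any packet data having been duplicated.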

These technologies accelerate processing speeds because there is no need for the queue data structures typically used for processing data streams.

Results

The ability, over many hours, to accumulate and search the huge volume of packets transmitted over a high-speed, 40-Gbps network, using only software and conventional hardware, makes it possible to catch, inexpensively and with certainty, network events, such as intermittent network troubles or unknown attacks, that sampling of partial data or short-term observations might miss.

In the event of a network fault, for example, this technology makes it possible to browse the packets sent around the time of the incident, enabling quick progress in analyzing the fault. Alternatively, if a virus-infected PC is discovered, it is possible to trace the communications flows to and from that PC. This will improve network quality, stabilize datacenter operations, and enhance security.

Future Plans

Fujitsu Laboratories will pursue further improvements in the processing performance of communications data accumulation and searches while moving forward with verification testing with the aim of bringing the technology into practical use in fiscal 2014.

Provided by Fujitsu