Faster-than-superfast Internet, and why we can't have it (yet)

You may have read about Sony's plan to install a fibre-based internet service in Japan which could reach download speeds of 2 gigabits a second (Gbps). That's 20 times faster than speeds offered by Labor's National Broadband Network (NBN), and even twice as fast as Google Fiber, a 1Gbps connection currently being rolled out in the US.

To put that in more practical terms, a 2Gbps connection means you could download an episode of HBO's hugely popular drama Game of Thrones in seconds. And given the first episode of the current season was illegally downloaded more than a million times, that would presumably be a welcome development.
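As a rough sketch of that arithmetic, the snippet below works out ideal download times at the three speeds mentioned above. The 1.5 gigabyte episode size is an assumption for illustration (no figure is given here), and the function name is hypothetical. Real downloads would be slower because of protocol overhead and shared links.

```python
def download_seconds(size_gigabytes: float, rate_gbps: float) -> float:
    """Ideal transfer time: gigabytes -> gigabits (x8), divided by the line rate."""
    return size_gigabytes * 8 / rate_gbps


EPISODE_GB = 1.5  # assumed size of one HD episode; not a figure from the article

# 100Mbps is one twentieth of 2Gbps, matching the NBN comparison above.
for label, rate_gbps in [("100Mbps", 0.1), ("1Gbps", 1.0), ("2Gbps", 2.0)]:
    seconds = download_seconds(EPISODE_GB, rate_gbps)
    print(f"{label}: about {seconds:.0f} seconds")
```

Under that assumed file size, the 2Gbps line needs about six seconds, against about two minutes at 100Mbps.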

So, with companies continually outdoing each other and offering faster download capabilities, the question remains: how fast can you go?

To understand internet speeds, we first have to understand optical fibres.

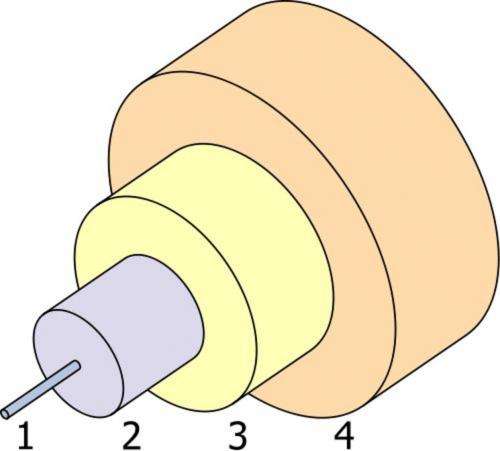

Optical fibres comprise a silica glass core, surrounded by another layer of purer silica, the cladding.

Over these is a silicone protective layer, and one or more layers of protective tubes.

Light is guided along the glass core of the fibre by total internal reflection, which results in very little power loss.

Imagine a glass window 5km thick that loses only 50% of the light: that is how transparent an optical fibre is!
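Engineers usually quote this transparency in decibels per kilometre. As a small sketch, taking the 50% over 5km figure above at face value, the conversion looks like this (the function names are illustrative only):

```python
import math


def loss_db(fraction_remaining: float) -> float:
    """Convert the fraction of light that survives into a loss in decibels."""
    return -10 * math.log10(fraction_remaining)


def fraction_after(db_per_km: float, km: float) -> float:
    """Fraction of light remaining after `km` of fibre with the given loss."""
    return 10 ** (-db_per_km * km / 10)


total_db = loss_db(0.5)   # losing half the light is roughly 3 dB
per_km = total_db / 5     # spread evenly over the 5km in the example

print(f"total loss: {total_db:.2f} dB, or {per_km:.2f} dB per km")
print(f"light left after 1km: {fraction_after(per_km, 1):.0%}")
```

On those figures, each kilometre of glass still passes well over four fifths of the light that enters it.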

If the core's diameter is smaller than about 10 micrometres (around one tenth the thickness of a human hair), the light is guided straight down the middle.

This is a single-mode fibre. Because there is only one "path" (mode) for the light to travel along, every part of a pulse travels at almost exactly the same velocity.

A very short pulse of light will remain a pulse as it hurtles down the fibre, so we can send many billions of pulses per second without them overlapping.

This gives single-mode fibre an enormous data-carrying capacity, much more than a home could possibly want (unless you have a server farm in the attic).

Optical fibre capacity

While optical fibre transmits large amounts of data – and fast – the options available to you or me are nowhere near as fast as what has been demonstrated in laboratories.

Even Sony's 2Gbps announcement pales in comparison to recent research.

Reports have shown rates ranging from 26Tbps (that's terabits a second, where one terabit equals 1,000 gigabits) from a single laser source along a standard fibre, to more than 1,000Tbps along a 12-core research optical fibre.

That's equivalent to sending 5,000 high definition, two-hour-long videos over 50km – in one second.

Given that the world's total average download rate is forecast to be only 250Tbps in 2016, a single strand of this new multi-core fibre could carry all of the world's internet traffic!
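Both comparisons can be checked with a few lines of arithmetic. This is only a sketch using the round numbers quoted above; the function names are made up for the example.

```python
TBPS = 1e12  # bits per second in one terabit per second


def gigabytes_per_video(rate_tbps: float, videos: int, seconds: float = 1.0) -> float:
    """Size each video could have if `videos` of them share the link for `seconds`."""
    total_bits = rate_tbps * TBPS * seconds
    return total_bits / videos / 8 / 1e9  # bits -> bytes -> gigabytes


def headroom(fibre_tbps: float, world_tbps: float) -> float:
    """How many times over the fibre could carry the forecast world traffic."""
    return fibre_tbps / world_tbps


print(f"{gigabytes_per_video(1000, 5000):.0f} GB per two-hour video")
print(f"{headroom(1000, 250):.0f}x the forecast 2016 world download rate")
```

At 1,000Tbps, 5,000 videos a second allows each video to be about 25 gigabytes, which is in the range of a high-definition film, and the fibre would cover the forecast world traffic four times over.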

These reports generate consternation for internet users: why can't we all have these download speeds now?

The answer, simply, is the capital cost of the equipment at the ends of the fibre, then the rent and electricity for the exchanges.

This is an acceptable cost if the fibre runs between two cities and is shared by millions, but it is too much for an individual to bear.

And while research will bring down this cost, it's not doing so as rapidly as demand is increasing.

The sharing of networks also limits download speeds. All telecommunications networks are shared at some point, so when other users are active on the same link, your connection slows down.

This is particularly annoying when you are trying to pay a credit card bill before the deadline, and your street is downloading films for free to multiple computers, so you get hit with a late fee while the pirates sail the seas.

That said, if you leave paying until the last microsecond you probably deserve a penalty of some sort.

Widening the bottlenecks

The perceived solution is to spend more on the system, so that even the most media-hungry suburb can enjoy business and pleasure simultaneously.

This only pushes the problem back down the line: there may be a bottleneck at your exchange, or between cities, or across the ocean.

As for your own computer, you might be limited by the Gigabit Ethernet (GbE) standard, which, as its name suggests, is limited to a 1Gbps data rate.

It's the "widening the freeway" argument. After spending billions, we eventually get stuck at the entrance to the car park.
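The car park problem can be put in a few lines: the speed you actually get is set by the slowest segment on the path. In the sketch below only the 1Gbps Ethernet and 2Gbps fibre figures come from this article; the exchange and intercity shares are invented purely for illustration.

```python
def bottleneck(segments: dict[str, float]) -> tuple[str, float]:
    """Return the name and rate (in Gbps) of the slowest segment on the path."""
    name = min(segments, key=segments.get)
    return name, segments[name]


path_gbps = {
    "home Ethernet (GbE)": 1.0,
    "access fibre": 2.0,
    "share of local exchange": 0.4,   # hypothetical figure
    "share of intercity link": 5.0,   # hypothetical figure
}

name, rate = bottleneck(path_gbps)
print(f"limited to {rate} Gbps by: {name}")

# Widening only the slowest segment just moves the bottleneck elsewhere.
path_gbps["share of local exchange"] = 10.0
name, rate = bottleneck(path_gbps)
print(f"after the upgrade, limited to {rate} Gbps by: {name}")
```

After the hypothetical exchange upgrade, the limit simply moves to the Ethernet port on the computer.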

A better solution is to design the network so that all parts of the network provide equal performance to the individual.

This is network engineering, and it is aided by software tools such as those provided by a company I co-founded, VPIsystems.

With a reasonable estimate of where users are, what services they want and how much they will pay, the optimal network can be designed using appropriate technologies for each component and location.

For example, if a data-hungry industry moves into a town where fibre has already been installed, it can be catered for simply by upgrading the equipment at each end.

There are also simple software solutions for sharing services.

Reprogramming the software would mean that I could have a slow service that works all of the time for bill payment – like the emergency lane on the freeway – but I would have a very limited download rate.
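One way to picture the emergency lane is as a small guaranteed slice of the link set aside for each subscriber who chooses it, with everyone else sharing what is left. The sketch below is an assumption about how such a scheme might divide capacity, not a description of any real network's software, and all the numbers are hypothetical.

```python
def allocate(link_mbps: float, guaranteed_users: int,
             guaranteed_mbps: float, best_effort_users: int) -> tuple[float, float]:
    """Split a shared link into guaranteed slices plus an evenly shared remainder.

    Returns (rate per guaranteed user, rate per best-effort user) in Mbps.
    """
    reserved = guaranteed_users * guaranteed_mbps
    if reserved > link_mbps:
        raise ValueError("guaranteed slices exceed the capacity of the link")
    remainder = link_mbps - reserved
    share = remainder / best_effort_users if best_effort_users else 0.0
    return guaranteed_mbps, share


# Hypothetical street: a 1,000Mbps link, 50 bill-payers on a guaranteed 1Mbps lane,
# and 20 heavy downloaders sharing the rest.
lane, bulk = allocate(1000, 50, 1.0, 20)
print(f"guaranteed lane: {lane} Mbps each; downloaders: {bulk} Mbps each")
```

The guaranteed lane is slow, but it does not shrink when the rest of the street starts downloading.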

Unfortunately, technologically simple solutions are difficult to market: it's easier to promise the world for $50 a month.

So, let's all demand a system designed for our most voracious digital appetites – I need to download 10 movies before I fly out on holiday. The taxi just pulled up and the meter is running.

Source: The Conversation

This story is published courtesy of The Conversation (under Creative Commons-Attribution/No derivatives).