October 24, 2011 feature

A second look at supernovae light: Universe's expansion may be understood without dark energy

(PhysOrg.com) -- The 2011 Nobel Prize in physics, awarded just a few weeks ago, went to research on the light from Type Ia supernovae, which shows that the universe is expanding at an accelerating rate. The well-known problem resulting from these observations is that this expansion seems to be occurring even faster than all known forms of energy could allow. While there is no shortage of proposed explanations – from dark energy to modified theories of gravity – it is less common for someone to question the interpretation of the supernovae data itself.

In a new study, that’s what Arto Annila, Physics Professor at the University of Helsinki, is doing. The basis of his argument, which is published in a recent issue of the Monthly Notices of the Royal Astronomical Society, lies in the ever-changing way that light travels through an ever-evolving universe.

“The standard model of big bang cosmology (the Lambda-CDM model) is a mathematical model, but not a physical portrayal of the evolving universe,” Annila told PhysOrg.com. “Thus the Lambda-CDM model yields the luminosity distance at a given redshift as a function of the model parameters, such as the cosmological constant, but not as a function of the physical process where quanta released from a supernova explosion disperse into the expanding universe.

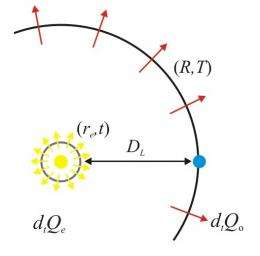

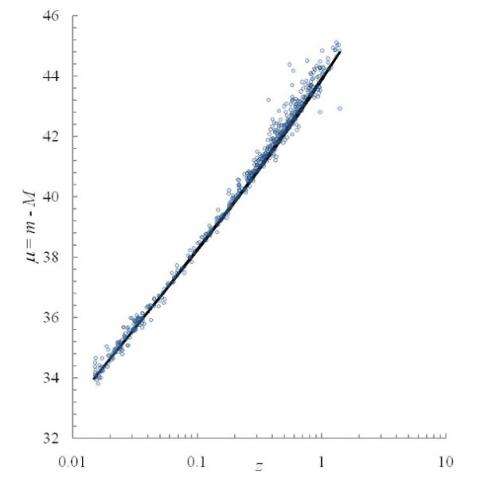

“When the supernova exploded, its energy as photons began to disperse in the universe, which has, by the time we observe the flash, become larger and hence also more dilute,” he said. “Accordingly, the observed intensity of light has fallen inversely proportional to the squared luminosity distance and directly proportional to the redshifted frequency. Due to these two factors, brightness vs. redshift is not one straight line on a log-log plot, but a curve.”

As a result, Annila argues that the supernovae data does not imply that the universe is undergoing an accelerating expansion.

The principle of least time

As Annila explains, when a ray of light travels from a distant star to an observer’s telescope, it travels along the path that takes the least amount of time. This well-known physics principle is called Fermat’s principle or the principle of least time. Importantly, the quickest path is not always the straight path. Deviations from a straight path occur when light propagates through media of varying energy densities, such as when light bends due to refraction as it travels through a glass prism.
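The refraction case can be checked numerically. The following is a minimal sketch, not taken from Annila's paper: it assumes a flat interface between two uniform media, with illustrative speeds `v1` and `v2` and an arbitrary geometry (`a`, `b`, `d` are hypothetical values), and searches for the crossing point that minimizes the total travel time. At that point the familiar law of refraction, sin θ1 / v1 = sin θ2 / v2, should hold.

```python
import math

def travel_time(x, a=1.0, b=1.0, d=2.0, v1=1.0, v2=0.75):
    """Time for light to go from (0, a) in medium 1 to (d, -b) in medium 2,
    crossing the flat interface y = 0 at horizontal position x."""
    return math.hypot(x, a) / v1 + math.hypot(d - x, b) / v2

def least_time_crossing(lo, hi, tol=1e-9, **geometry):
    """Golden-section search for the crossing point with the least travel time.
    Works here because the travel time is a convex function of x."""
    inv_phi = (math.sqrt(5) - 1) / 2
    while hi - lo > tol:
        m1 = hi - inv_phi * (hi - lo)
        m2 = lo + inv_phi * (hi - lo)
        if travel_time(m1, **geometry) < travel_time(m2, **geometry):
            hi = m2
        else:
            lo = m1
    return (lo + hi) / 2

# Illustrative (assumed) geometry and speeds; medium 2 is the slower one.
a, b, d, v1, v2 = 1.0, 1.0, 2.0, 1.0, 0.75
x = least_time_crossing(0.0, d, a=a, b=b, d=d, v1=v1, v2=v2)

# Sines of the angles of incidence and refraction, measured from the normal.
sin1 = x / math.hypot(x, a)
sin2 = (d - x) / math.hypot(d - x, b)
print(f"crossing point x = {x:.4f}")
print(f"sin(theta1)/v1 = {sin1 / v1:.6f}, sin(theta2)/v2 = {sin2 / v2:.6f}")
```

The least-time path crosses the interface past the midpoint, so the ray covers more ground in the faster medium and is bent rather than straight.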

The principle of least time is a specific form of the more generally stated principle of least action. According to this principle, light, like all forms of energy in motion, always travels on the path that maximizes its dispersal of energy. We see this concept when the light from a light bulb (or star) emanates outward in all available directions.

Mathematically, the principle of least action has two different forms. Physicists almost always use the form that involves the so-called Lagrangian integrand, but Annila explains that this form can only determine paths within stationary surroundings. Since the expanding universe is an evolving system, he suggests that the original but less popular form, which was produced by the French mathematician Maupertuis, can more accurately determine the path of light from the distant supernovae.

Using Maupertuis’ form of the principle of least action, Annila has calculated that the brightness of light from Type Ia supernovae after traveling many millions of light-years to Earth agrees well with observations of the known amount of energy in the universe, and doesn’t require dark energy or any other additional driving force.

“It is natural for us humans to yearn for predictions since anticipations contribute to our survival,” he said. “However, natural processes, as Maupertuis correctly formulated them, are intrinsically non-computable. Therefore, there is no real reason, but it has been only our desire to make precise predictions which has led us to shun the Maupertuis’ form, even though the least-time imperative is an accurate account of path-dependent processes. The unifying principle serves to rationalize various fine-tuning problems such as the large-scale homogeneity and flatness of the universe.”

Light’s least-time path

How exactly does the light travel on its least-time path? While the light is traveling, the expanding universe is decreasing in density. When light crosses from a higher energy density region to a lower energy density region, Maupertuis’ principle of least action says that the light will adapt by decreasing its momentum. Therefore, due to the conservation of quanta, the photon’s wavelength will increase and its frequency will decrease. Thus, the radiant intensity of light will decrease on its way from the supernova explosion during the high-density distant past to its present-day low-density universal surroundings. Also, when light passes by a local energy-dense area, such as a star, both its speed and its direction of propagation will change. All these changes in light ultimately stem from changes in the surrounding energy density.
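The link between momentum, wavelength and frequency described above can be written out with the standard textbook photon relations. This is a sketch for orientation rather than Annila's own derivation, and it assumes propagation at a constant speed c; the subscripts "em" and "obs" (emitted and observed) and the redshift symbol z are notation introduced here.

```latex
p = \frac{h}{\lambda} = \frac{h\nu}{c},
\qquad
1 + z = \frac{\lambda_{\mathrm{obs}}}{\lambda_{\mathrm{em}}}
      = \frac{\nu_{\mathrm{em}}}{\nu_{\mathrm{obs}}}
\quad\Longrightarrow\quad
p_{\mathrm{obs}} = \frac{p_{\mathrm{em}}}{1 + z}
```

A photon observed at redshift z = 1, for example, arrives with twice its emitted wavelength and half its emitted frequency and momentum.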

If this is the way that light from supernovae travels, then it tells us something important about why the universe is expanding, Annila explains. When a star explodes and its mass is combusted into radiation, conservation requires that the number of quanta stays the same, whether in the form of matter or radiation. To maintain the overall balance between energy bound in matter and energy free in photons, the supernovae are, on average, moving away from each other with increasing average velocity approaching the speed of light. If dark energy or any other additional form of energy were involved, it would violate the conservation of energy.

The analysis applies not just to supernovae, but to other “bound forms” of energy as well. When the bound forms of energy in stars, pulsars, black holes, and other objects transform into electromagnetic radiation – the lowest form of energy – through combustion, these irrevocable transformations from high energy densities to low energy densities are what cause the universe to expand.

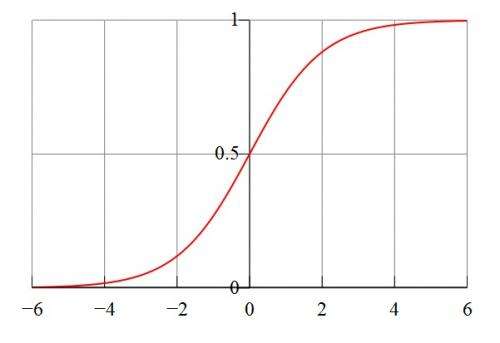

“On-going expansion of the universe is not a remnant of some furious bang at a distant past, but the universe is expanding because energy that is bound in matter is being combusted to freely propagating photons, most notably in stars and other powerful celestial mechanisms of energy transformation,” Annila said. “Thus, today’s rate of expansion depends on the energy density that is still confined in matter as well as on the efficacy of those present-day mechanisms that break matter to light. Likewise, the past rate of expansion depended on those mechanisms that existed then, just as the future rate will depend also on those mechanisms that may emerge in the future. Since all natural processes tend to follow sigmoid curves when consuming free energy in the least time, also the universe is expected to expand in a sigmoid manner.”

Not a one-trick pony

While the concept of light’s least-time path seems to be capable of explaining the supernovae data in agreement with the rest of our observations of the universe, Annila notes that it would be even more appealing if this one theoretical concept could solve a few problems at the same time. And it may – Annila shows that, when gravitational lensing is analyzed with this concept, it does not require dark matter to explain the results.

Einstein’s general theory of relativity predicts that massive objects, such as galaxies, cause light to bend due to the way their gravity distorts spacetime, and scientists have observed that this is exactly what happens. The problem is that the deflection seems to be larger than what all of the known (luminous) matter can account for, prompting researchers to investigate the possibility of dark (nonluminous) matter.
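For a sense of scale, the general-relativistic bending by a single compact mass follows the standard point-mass formula α = 4GM / (c²b), where b is the closest approach of the ray. The sketch below applies it to light grazing the Sun, the classic test case, instead of to a galaxy; the function name is hypothetical, the constants are rounded textbook values, and the calculation is the conventional one, not Annila's.

```python
import math

# Rounded physical constants in SI units.
G = 6.674e-11      # gravitational constant, m^3 kg^-1 s^-2
C = 2.998e8        # speed of light, m/s
M_SUN = 1.989e30   # solar mass, kg
R_SUN = 6.957e8    # solar radius, m

def gr_deflection_rad(mass_kg, impact_parameter_m):
    """General-relativistic deflection angle (radians) for light passing a
    point mass at the given impact parameter: alpha = 4GM / (c^2 b)."""
    return 4.0 * G * mass_kg / (C**2 * impact_parameter_m)

# Light grazing the solar limb; the result is roughly 1.75 arcseconds.
alpha_arcsec = math.degrees(gr_deflection_rad(M_SUN, R_SUN)) * 3600.0
print(f"Deflection at the solar limb: {alpha_arcsec:.2f} arcsec")
```

Because the angle scales linearly with mass, a larger observed deflection at a given distance translates directly into a larger inferred mass under general relativity, which is the origin of the discrepancy described above.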

However, when Annila used Maupertuis’ principle of least action to analyze how much a galaxy of a certain mass should deflect passing light, he calculated the total deflection to be about five times larger than the value given by general relativity. In other words, the observed deflections require less mass than previously thought, and they can be entirely accounted for by the known matter in galaxies.

“General relativity in terms of Einstein’s field equations is a mathematical model of the universe, whereas we need the physical account of the evolving universe provided by Maupertuis’ principle of least action,” he said. “Progress by patching may appear appealing, but it will easily become inconsistent by resorting to ad hoc accretions. Bertrand Russell is completely to the point about the contemporary tenet when saying that ‘all exact science is dominated by the idea of approximation,’ but fundamentally, any sophisticated modeling is secondary to comprehending the simple principle of how nature works.”

Annila added that these concepts can be tested to see whether they are the correct way to analyze supernovae and interpret the universe's expansion.

“The principle of least-time free energy consumption claims by its nature to be the universal and inviolable law,” he said. “Therefore, not only the supernovae explosions but basically any data will serve to test its validity. Consistency and universality of the principle can be tested, for example, by perihelion precession and galactic rotation data. Also the final results of Gravity Probe B for the geodetic effect appear to me certainly good enough to test the natural principle, whereas recordings of the tiny frame-dragging effect are compromised by large uncertainties as well as by unforeseeable but illuminating experimental tribulations.”

More information: Arto Annila. “Least-time paths of light.” Mon. Not. R. Astron. Soc. 416, 2944-2948 (2011) DOI:10.1111/j.1365-2966.2011.19242.x
