To probe an unexplored space of hard problems, researchers play the devil's advocate

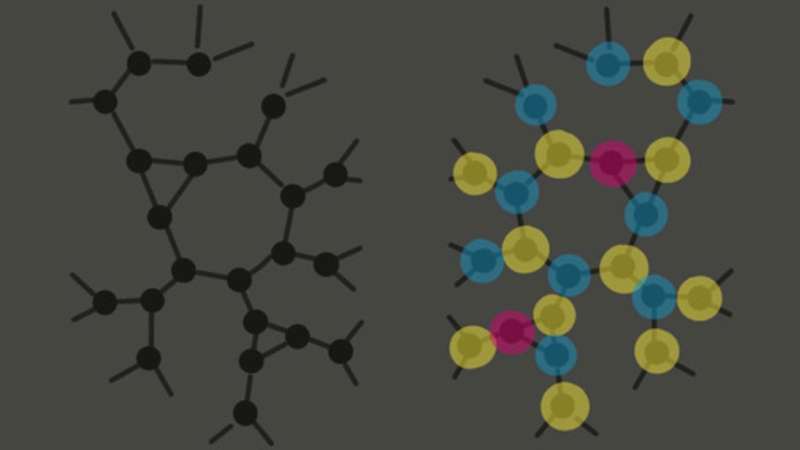

In computer science, the graph coloring problem is a classic. Inspired by the map-coloring problem, it asks: Given a network of nodes connected by links, what's the minimum number of colors you need to color each node so that no links connect two of the same color? For small numbers of colors and links, looking for a solution is straightforward: Just try all possible combinations. But as links increase, the problem becomes more constrained—until, if there are too many links and not enough colors, no solution may exist at all.

"Then there are these fascinating middle zones where there probably is a solution, but it's very difficult to find—and another where there probably isn't, but it's hard to prove that there isn't," says polymath Cris Moore, a resident professor at SFI. That means conventional problem-solving algorithms can't find them, he says. If one starts with a random coloring, for example, and just starts flipping the colors of nodes, it won't find one of these hidden solutions.

In a new paper presented at the 2021 Conference on Learning Theory, Moore and his collaborators describe a new way to construct problems with such hidden solutions. The group includes mathematician Jess Banks, a former SFI undergraduate and post-baccalaureate intern who is now pursuing a Ph.D. at the University of California-Berkeley.

They approach the problem by playing a sort of mathematical devil's advocate. Instead of solving problems, they make up new, complicated ones—with solutions hidden within. "We take off the white hat of the solver, the solution-finder, and we put on the black hat of the person who designs tricky problems—almost like cryptography," says Moore. "The solution is hidden."

The researchers devised a subtle way to hide solutions, says Moore. And when they hand these problems off to problem-solving algorithms, the algorithms come up empty. "If we can create problems that lots of algorithms cannot solve, or even tell whether there's a solution," says Moore, "then we have strong evidence that we've located the zone where these problems are hard."

The paper includes a theorem proving that multiple families of algorithms fail to find solutions to these generated problems. That means researchers are closer than ever to identifying the phase-transition boundary of this "hard" zone in which it's difficult to find solutions—or prove that there aren't any.

Moore says the ongoing effort to precisely identify problems grew out of conjectures in physics that ask how systems change as they become more constrained.

"Our adventure," he says, "has been to turn these conjectures and calculations in physics into mathematical proofs." And the only way to keep pushing the field forward, he says, is to call on the overlapping strengths of tools from math, physics, and computer science.

More information: Afonso S. Bandeira et al, Spectral Planting and the Hardness of Refuting Cuts, Colorability, and Communities in Random Graphs. arXiv:2008.12237v1 [cs.CC], arxiv.org/abs/2008.12237

Provided by Santa Fe Institute