Controlling robots across oceans and space

This autumn is seeing a number of experiments in controlling robots from afar, with ESA astronaut Luca Parmitano directing a robot in The Netherlands and engineers in Germany controlling a rover in Canada.

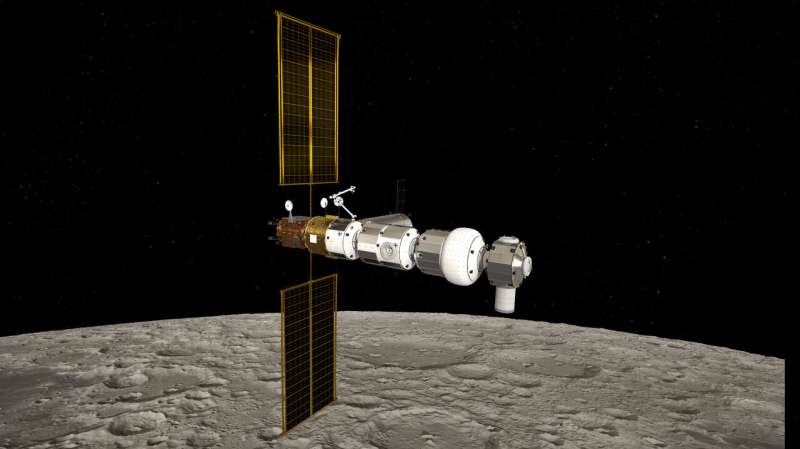

Imagine looking down at the Moon from the Gateway as you prepare to land near a lunar base to run experiments, knowing that the base's life-support system needs maintenance work that will take days. It would be better to carry out that maintenance from orbit, so the astronauts can get straight to work once they are on the Moon.

Human-robotic partnerships are at the heart of ESA's exploration strategy, which includes preparing for scenarios like this by sending robotic scouts to the Moon and planets, working hand in hand with astronauts who control them from orbit.

The Meteron project was formed to develop the technology and know-how needed to operate rovers in these harsh conditions. It covers all aspects of operations, from communications and the user interface to surface operations, and even connects the robots to the astronauts through the sense of touch.

Historic robotic control

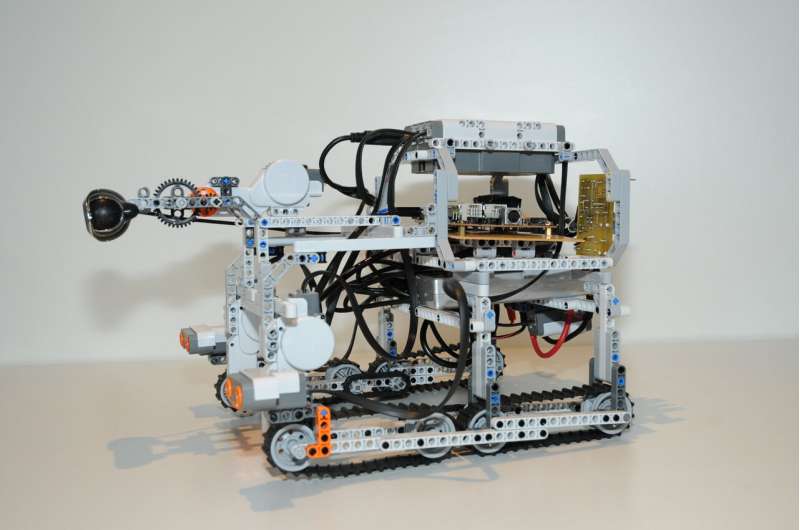

The first experiment took place in 2012, when NASA astronaut Sunita Williams controlled a LEGO rover in Germany to test a newly developed 'space internet', proving that it is possible to control a rover from orbit. This is no easy feat: signals from the International Space Station make a round trip of 144 400 km. As the outpost circles Earth at 29 000 km/h, signals travel up to satellites almost 36 000 km high, then down to a US ground station in New Mexico, on via NASA Houston and through a transatlantic cable to Europe, and then all the way back.
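To put the 144 400 km round trip in perspective, a rough lower bound on the signal delay is simply that distance divided by the speed of light. The minimal Python sketch below does that arithmetic. It assumes the entire path is covered at the vacuum speed of light and ignores the slower propagation in fibre-optic cable and the processing time at each relay and ground station, so real-world latency will be longer. The function name `propagation_delay_s` is purely illustrative.

```python
# Speed of light in vacuum in km/s (exact by the SI definition of the metre).
C_KM_PER_S = 299_792.458


def propagation_delay_s(path_km: float) -> float:
    """Return the time in seconds for a signal to cover path_km at light speed.

    Assumption: the whole path is travelled at the vacuum speed of light,
    so this is a lower bound; fibre links and relay processing add more.
    """
    return path_km / C_KM_PER_S


# Round-trip distance quoted for the 2012 space-station experiment.
round_trip_km = 144_400
delay = propagation_delay_s(round_trip_km)
print(f"{delay:.2f} s")  # prints 0.48 s
```

With the quoted distance this gives roughly half a second of pure travel time for each command-and-response cycle, before any network or processing overhead is added.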

In parallel, engineers worked to design the user interface the astronauts would use to control the robots. Because this is a new field, everything from camera views and joysticks to the choice between a traditional computer and a touchscreen had to be considered and designed.

After these initial tests, larger rovers such as Eurobot were controlled from space, while teams at ESA's technical centre in The Netherlands began experimenting with haptic feedback, which allows astronauts to feel what the robot touches. In 2015, a historic orbital 'handshake' took place between NASA astronaut Terry Virts and a person on Earth more than 5000 km away.

Just a few months after that milestone, ESA astronaut Andreas Mogensen controlled a rover to insert a metal peg into a round hole in a 'task board' with millimetre precision, simulating the repair of an electrical connection.

Tests have continued with increasing fidelity. Last week, a ground-based ESA team, together with the Canadian Space Agency, practised night-time operations. Experts at ESA's mission control centre in Darmstadt, Germany, controlled the CSA's Juno rover from across the Atlantic Ocean, advised by a science team at ESA's ESTEC technical centre in The Netherlands. This setup is similar to the one used for daily International Space Station operations.

The four-hour experiment saw Juno travel more than two kilometres, passing six 'waypoints' while scanning its surroundings and inspecting areas of scientific interest. None of the teams in Europe knew exactly what to expect, just as would be the case during an actual lunar mission.

"The MAGIC experiment really was a huge success," says Kim Nergaard, Head of Advanced Mission Concepts at ESA's ESOC operations centre in Darmstadt, Germany.

"We faced some issues of course—at one point the rover was overly cautious of a relatively small rock—but we reached our end point within the allocated time and achieved all the objectives we set out to, learning a great deal along the way."

Putting it all together

In November 2019, the Meteron project will put all the elements together when ESA astronaut Luca Parmitano operates the Interact rover in The Netherlands from the International Space Station.

This experiment, dubbed Analog-1, will combine all the know-how from a decade of the Meteron project into a full-scale test: operating a rover from orbit to collect scientifically interesting rock samples in a simulated lunar setting. Luca will be supported by a team at Europe's astronaut centre in Cologne, Germany, acting as mission control, and he will be able to feel what Interact feels using the haptic-feedback technology developed by ESA's Telerobotics Laboratory.

Provided by European Space Agency