New geometric model improves predictions of fluid flow in rock

Deep beneath the Earth's surface, oil and groundwater percolate through gaps in rock and other geologic material. Because these critical resources are hidden from sight, evaluating the state of two-phase fluid flows underground poses a significant challenge for scientists. Fortunately, the combination of supercomputing and synchrotron-based imaging techniques enables more accurate methods for modeling fluid flow in large subsurface systems like oil reservoirs, sinks for carbon sequestration, and groundwater aquifers.

Researchers led by computational scientist James McClure of Virginia Tech used the 27-petaflop Titan supercomputer at the Oak Ridge Leadership Computing Facility (OLCF) to develop a geometric model that requires only a few key measurements to characterize how fluids are arranged within porous rock—that is, their geometric state.

The OLCF is a US Department of Energy (DOE) Office of Science User Facility located at DOE's Oak Ridge National Laboratory. The team's results were published in Physical Review Fluids in 2018.

The new geometric model offers geologists a way to uniquely predict the fluid state and overcome a well-known shortcoming associated with models that have been used for more than half a century.

Around the turn of the 20th century, the German mathematician Hermann Minkowski demonstrated that 3-D objects are associated with four essential measures: volume, surface area, integral mean curvature, and Euler characteristic. However, in the traditional computational models for subsurface flow, the volume fraction provides the only measure of the fluid state, and the models rely on observational data collected over time for greatest accuracy. Measured against Minkowski's fundamental analysis, these traditional models are incomplete.
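To make the four measures concrete, the sketch below computes them for a shape built from unit cubes (voxels), the form in which microCT images are typically stored. It is an illustration only, not the method used in the published work or in the LBPM code: the function name `minkowski_measures` is hypothetical, the cell-counting formulas are the standard ones for a union of closed unit cubes, and the mean curvature is taken as the average of the two principal curvatures. Voxel-based surface area and curvature describe the staircase surface, so they only approximate the values for a smooth interface.

```python
from itertools import product
from math import pi


def minkowski_measures(voxels):
    """Return the four Minkowski measures of a union of closed unit cubes.

    `voxels` is an iterable of integer (x, y, z) tuples; each tuple marks
    the cube [x, x+1] x [y, y+1] x [z, z+1] as occupied.
    """
    voxels = set(voxels)

    # Collect every distinct cell (vertex, edge, face, cube) of the complex.
    # Cells are keyed by doubled coordinates: an odd coordinate means the
    # cell extends along that axis, an even one means it lies on a lattice
    # plane. Shared faces, edges, and vertices are therefore counted once.
    cells = set()
    for x, y, z in voxels:
        for dx, dy, dz in product((0, 1, 2), repeat=3):
            cells.add((2 * x + dx, 2 * y + dy, 2 * z + dz))

    # counts[d] = number of cells of dimension d (0 = vertex ... 3 = cube).
    counts = [0, 0, 0, 0]
    for cell in cells:
        counts[sum(c % 2 for c in cell)] += 1
    n0, n1, n2, n3 = counts

    return {
        "volume": n3,
        "surface_area": 2 * n2 - 6 * n3,
        "integral_mean_curvature": pi * (n1 - 2 * n2 + 3 * n3),
        "euler_characteristic": n0 - n1 + n2 - n3,
    }


# A 3x3x3 block with its center cube removed: a solid with one cavity.
shell = [v for v in product(range(3), repeat=3) if v != (1, 1, 1)]
print(minkowski_measures(shell))
```

For the hollow block, the volume is 26 and the Euler characteristic is 2, which distinguishes it from a solid block (Euler characteristic 1) even though the two differ in volume by a single voxel. This is the sense in which volume alone leaves the shape underdetermined.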

"The mathematics in our model is different from the traditional model, but it works quite well," McClure said. "The geometric model is characterizing the microstructure of the medium using a very limited number of measures."

To apply Minkowski's result to the complex, multiphase fluid configurations found in porous rock, McClure's team needed to generate a large amount of data, and Titan provided the extreme computational power needed.

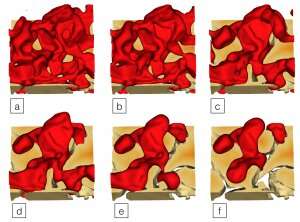

Working with international collaborators, the team selected five micro-computed tomography (microCT) datasets collected by X-ray synchrotrons to represent the microscopic structure of real rocks. The datasets included two sandstones, a sand pack, a carbonate rock, and a synthetic porous system known as Robuglas. The team also included a simulated pack of spheres.

Within each rock, thousands of possible fluid configurations were simulated and analyzed, totaling more than 250,000 fluid configurations. Using the simulation data, the team was able to show that a unique relationship exists among the four geometric variables, paving the way for a new generation of models that predict the fluid state from theory rather than by relying on a historical set of data.

"Relationships once thought to be inherently history-dependent can now be reconsidered based on rigorous geometric theory," McClure said.

The team used the open-source Lattice Boltzmann for Porous Media (LBPM) code, developed by McClure. The code is named for the statistics-driven lattice Boltzmann method, which calculates fluid flow across a range of scales more rapidly than calculations using finite methods, which are most accurate at small scales. LBPM uses Titan's GPUs to speed fluid flow simulations and is released through the Open Porous Media Initiative, which maintains open-source codes for the research community.

"Lattice Boltzmann methods perform very well on GPUs," McClure said. "In our implementation, the simulation runs on the GPUs while the CPU cores analyze information or modify the state of the fluids."

Running at these computing speeds, the team was able to analyze the simulation state about every 1,000 time steps, or roughly once per minute of computing time.
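The division of labor McClure describes, with a solver that keeps advancing while a separate worker analyzes periodic snapshots, can be sketched in a few lines. This is a toy illustration under stated assumptions, not LBPM's implementation: `step` and `analyze` are hypothetical stand-ins (a simple neighbor-averaging update and a mean-value calculation), and a Python worker thread plays the role that CPU cores play alongside the GPUs. Only the 1,000-step interval comes from the article.

```python
from concurrent.futures import ThreadPoolExecutor

ANALYSIS_INTERVAL = 1000  # from the article: about every 1,000 time steps


def step(state):
    """Hypothetical stand-in for one solver time step (periodic averaging)."""
    n = len(state)
    return [0.5 * (state[i - 1] + state[(i + 1) % n]) for i in range(n)]


def analyze(step_index, snapshot):
    """Hypothetical stand-in for analysis: report the mean of the field."""
    return step_index, sum(snapshot) / len(snapshot)


def run(state, n_steps, interval=ANALYSIS_INTERVAL):
    """Advance the solver, handing a snapshot to a worker every `interval` steps."""
    pending = []
    with ThreadPoolExecutor(max_workers=1) as pool:
        for t in range(1, n_steps + 1):
            state = step(state)
            if t % interval == 0:
                # Submit a copy so the solver can keep advancing without
                # waiting for the analysis of this snapshot to finish.
                pending.append(pool.submit(analyze, t, list(state)))
    # Leaving the `with` block waits for all outstanding analyses.
    return state, [job.result() for job in pending]


final_state, reports = run([0.0, 0.0, 4.0, 0.0], n_steps=3000)
for step_index, mean_value in reports:
    print(step_index, mean_value)
```

In this sketch the snapshot copy is the only point where the solver and the analysis touch the same data, which is what lets the two proceed independently. A production code would move data between GPU and CPU memory at that point instead of copying a Python list.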

"This allowed us to generate a very large number of data points that can be used to study not only the geometric state but also other aspects of flow physics as we move forward," McClure said.

Larger simulations will be needed to study how the diverse properties and microstructure of real rocks influence the behavior of the geometric relationship across length scales. A new generation of supercomputers like the OLCF's latest system—the 200-petaflop IBM AC922 Summit—will be needed to connect flow physics across length scales that range from nanometer- to millimeter-sized pores to reservoirs that can span several kilometers.

"The release of the Summit supercomputer enables larger simulations that will further push the boundaries of our understanding for these complex multiscale systems," McClure said.

More information: James E. McClure et al. Geometric state function for two-fluid flow in porous media, Physical Review Fluids (2018). DOI: 10.1103/PhysRevFluids.3.084306

Provided by Oak Ridge National Laboratory