Causal disentanglement is the next frontier in AI

Recreating the human mind's ability to infer patterns and relationships from complex events could lead to a universal model of artificial intelligence.

A major challenge for artificial intelligence (AI) is seeing past superficial phenomena to infer the underlying causal processes. New research by KAUST and an international team of leading specialists has yielded a novel approach that moves beyond superficial pattern detection.

Humans have an extraordinarily refined sense of intuition or inference that gives us the insight, for example, to understand that a purple apple could be a red apple illuminated with blue light. This sense is so highly developed in humans that we are also inclined to see patterns and relationships where none exist, giving rise to our propensity for superstition.

This type of insight is such a challenge to codify in AI that researchers are still working out where to start, yet it represents one of the most fundamental differences between natural and machine thought.

Five years ago, a collaboration between KAUST-affiliated researchers Hector Zenil and Jesper Tegnér, along with Narsis Kiani and Allan Zea from Sweden's Karolinska Institutet, began adapting algorithmic information theory to network and systems biology in order to address fundamental problems in genomics and molecular circuits. That collaboration led to the development of an algorithmic approach to inferring causal processes that could form the basis of a universal model of AI.

"Machine learning and AI are becoming ubiquitous in industry, science and society," says KAUST professor Tegnér. "Despite recent progress, we are still far from achieving general purpose machine intelligence with the capacity for reasoning and learning across different tasks. Part of the challenge is to move beyond superficial pattern detection toward techniques enabling the discovery of the underlying causal mechanisms producing the patterns."

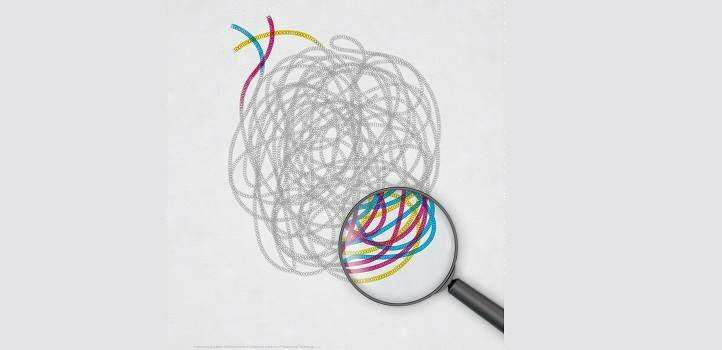

This causal disentanglement, however, becomes very challenging when several different processes are intertwined, as is often the case in molecular and genomic data. "Our work identifies the parts of the data that are causally related, taking out the spurious correlations and then identifies the different causal mechanisms involved in producing the observed data," says Tegnér.

The method uses the well-defined mathematical concept of algorithmic information probability as the basis for an optimal inference machine. The main difference from previous approaches, however, is the switch from an observer-centric view of the problem to an objective analysis of the phenomena based on deviations from randomness.
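
For readers who want the underlying definitions, the standard textbook forms from algorithmic information theory are sketched below. The symbols are assumptions introduced here for illustration (they do not appear in the article): U is a prefix-free universal Turing machine, p a program of length |p| bits, and s the observed string. How the team estimates these quantities in practice is not described in this article.

```latex
% Algorithmic probability: the chance that a randomly chosen program outputs s
m(s) = \sum_{p \,:\, U(p) = s} 2^{-|p|}

% Algorithmic (Kolmogorov) complexity: the length of the shortest such program
K(s) = \min \{\, |p| \;:\; U(p) = s \,\}

% Coding theorem: the two quantities agree up to an additive constant
K(s) = -\log_2 m(s) + O(1)
```

Read this way, a string that deviates strongly from randomness is one with high algorithmic probability, which is the same as saying that a short program can produce it.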

"We use algorithmic complexity to isolate several interacting programs, and then search for the set of programs that could generate the observations," says Tegnér.

The team demonstrated their method by applying it to the interacting outputs of multiple computer programs. The algorithm finds the shortest combination of programs that could construct the convoluted output string of 1s and 0s.
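
To make the idea of a "shortest combination of programs" concrete, here is a minimal toy sketch in Python. It is not the team's algorithm. It assumes, purely for illustration, that every "program" is just a short pattern repeated to a given length, that the sources are concatenated rather than truly interacting, and that each extra program costs a fixed overhead. The names `shortest_period`, `deconvolve` and `PROGRAM_OVERHEAD` are hypothetical.

```python
from typing import List, Tuple

# Assumed fixed cost (in symbols) of introducing one more program.
PROGRAM_OVERHEAD = 4


def shortest_period(segment: str) -> int:
    """Length of the shortest pattern whose repetition reproduces `segment`."""
    n = len(segment)
    for p in range(1, n + 1):
        if all(segment[i] == segment[i % p] for i in range(n)):
            return p
    return n  # only reached for the empty string


def deconvolve(bits: str) -> List[Tuple[str, int]]:
    """Split `bits` into the cheapest sequence of repeating-pattern programs.

    Each program is returned as (pattern, output_length). The cost of a
    program is its pattern length plus PROGRAM_OVERHEAD, a toy stand-in
    for program length.
    """
    n = len(bits)
    best = [0.0] + [float("inf")] * n  # best[i]: cheapest cost of bits[:i]
    back = [0] * (n + 1)               # back[i]: start of the last segment
    for end in range(1, n + 1):
        for start in range(end):
            cost = best[start] + shortest_period(bits[start:end]) + PROGRAM_OVERHEAD
            if cost < best[end]:
                best[end], back[end] = cost, start

    # Walk the back-pointers to recover the segments, last one first.
    programs = []
    end = n
    while end > 0:
        start = back[end]
        segment = bits[start:end]
        programs.append((segment[:shortest_period(segment)], len(segment)))
        end = start
    return programs[::-1]


if __name__ == "__main__":
    observed = "0" * 8 + "101" * 4
    print(deconvolve(observed))  # → [('0', 8), ('101', 12)]
```

In this toy setting, the search recovers two generators, a run of zeros and a repeating "101" pattern, because describing the string as two short programs is cheaper than describing it as one long one. The published method works with estimates of algorithmic complexity over far more general programs than the repeating patterns assumed here.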

"This technique can equip current machine learning methods with advanced complementary abilities to better deal with abstraction, inference and concepts, such as cause and effect, that other methods, including deep learning, cannot currently handle," says Zenil.

More information: Hector Zenil et al. Causal deconvolution by algorithmic generative models, Nature Machine Intelligence (2018). DOI: 10.1038/s42256-018-0005-0