Platform for mobile networks would bring services up to speeds of 100 Gbps

Even though mobile internet link speeds might soon achieve 100 Gbps, this doesn't necessarily mean network carriers will be free of data-handling challenges that effectively slow down mobile data services, for everything from individual device users to billions of Internet-of-Things connections.

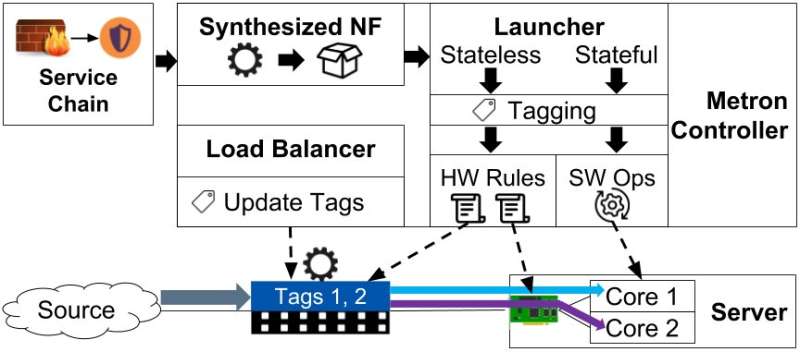

Making connections faster means getting networks to process data packets in a different way, which is what a team from KTH Royal Institute of Technology, RISE SICS, and University of Liege reportedly has done. In a recent conference paper, the researchers introduced Metron, a new platform for network functions virtualization (NFV) that enables network services to run at the true speed of the underlying hardware.

"We are the first to enable modern network services to operate at the speed of the underlying commodity hardware, at wireline speed, thus achieving ultra-high throughput with low predictable latency, and high resource efficiency," says Dejan Kostic. The researchers believe Metron could help networks meet performance expectations even as demand for services such as high definition video, social media, and cloud-based applications continues to grow.

While available specialized hardware can accommodate these speeds, modern networks have adopted a new networking model that replaces expensive specialized hardware with open-source software running on commodity hardware, Kostic explains. "But achieving high performance using commodity hardware is hard to do, and current solutions fail to satisfy the performance requirements of high speed networks."

For one thing, handling uplink and downlink data requires a network to take a couple of steps that make a big difference. Each packet of data must be inspected and then directed to servers, where it must locate its destination among a multitude of cores, each designed to fulfill a specific service.

The Metron platform is designed to perform early traffic classification and apply tags to the packets. Then hardware can accurately dispatch traffic to the correct CPU core of a commodity server based upon the tag. "We also exploit our earlier work (called Synthesized Network Functions), to realize a highly optimized traffic classifier by synthesizing its internal operations, while eliminating processing redundancy," Kostic says.
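As a rough illustration of the classify-once, dispatch-by-tag idea described above, here is a minimal Python sketch. It is not Metron's implementation, which according to the researchers relies on hardware to dispatch traffic. The rule table, tag values, tag-to-core mapping, and all names (`Packet`, `RULES`, `TAG_TO_CORE`, `classify`, `dispatch`) are assumptions made purely for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class Packet:
    src_ip: str
    dst_port: int
    protocol: str
    tag: Optional[int] = None  # set once by the early classifier


# Hypothetical classification rules: (protocol, destination port) -> tag.
RULES = {("tcp", 80): 1, ("tcp", 443): 2, ("udp", 53): 3}
DEFAULT_TAG = 0

# Hypothetical mapping from tag to the CPU core that runs the matching service.
TAG_TO_CORE = {0: 0, 1: 1, 2: 2, 3: 3}


def classify(pkt: Packet) -> Packet:
    """Inspect the header fields once and return a tagged copy of the packet."""
    tag = RULES.get((pkt.protocol, pkt.dst_port), DEFAULT_TAG)
    return replace(pkt, tag=tag)


def dispatch(packets):
    """Place each tagged packet on its core's queue by looking only at the tag."""
    queues = defaultdict(list)
    for pkt in packets:
        queues[TAG_TO_CORE[pkt.tag]].append(pkt)
    return queues


# Example: three packets are classified early, then dispatched by tag alone.
traffic = [
    Packet("10.0.0.1", 443, "tcp"),
    Packet("10.0.0.2", 53, "udp"),
    Packet("10.0.0.3", 22, "tcp"),  # no matching rule, falls back to DEFAULT_TAG
]
queues = dispatch(classify(p) for p in traffic)
```

The point of the split is that the header inspection happens once, up front; the dispatch step never re-examines the packet and only needs a single lookup on the tag.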

The researchers' experiments on a 100-gigabit-per-second Ethernet (100 GbE) network realized services with dramatically lower latency (up to 4.7x), higher throughput (up to 7.8x), and better efficiency (up to 6.5x) than is currently possible. Kostic says the work enables network operators to offer high throughput and low predictable latency – key requirements for future networks. "This is also relevant for popular services such as Google, Microsoft, Facebook, and Twitter."

"It shows that popular network services can be provided to billions of users at a high quality, by efficiently exploiting the increasing networking speeds," he says.

More information: Metron: NFV Service Chains at the True Speed of the Underlying Hardware, Georgios P. Katsikas, RISE SICS and KTH Royal Institute of Technology; Tom Barbette, University of Liege; Dejan Kostic, KTH Royal Institute of Technology; Rebecca Steinert, RISE SICS; Gerald Q. Maguire Jr., KTH Royal Institute of Technology, NSDI 18 www.usenix.org/conference/nsdi … resentation/katsikas

Provided by KTH Royal Institute of Technology