New system learns how to grasp objects

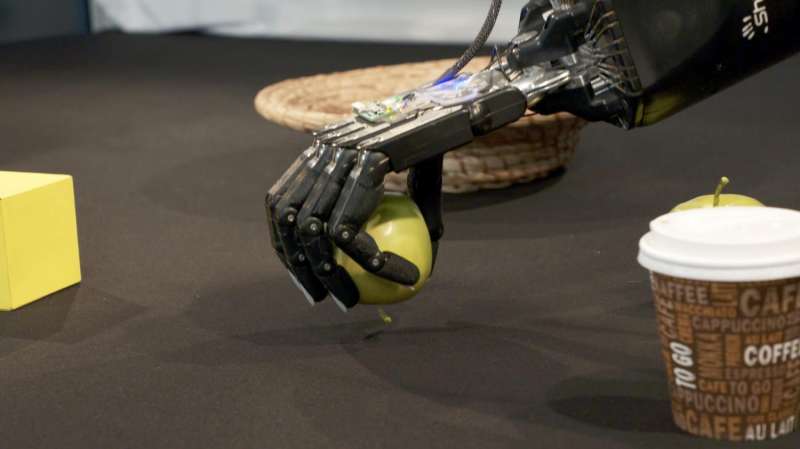

Researchers at Bielefeld University have developed a grasping system with robot hands that autonomously familiarizes itself with novel objects. The new system works without prior knowledge of the characteristics of objects such as pieces of fruit or tools. The study could contribute to future service robots that can independently adapt to working in new households.

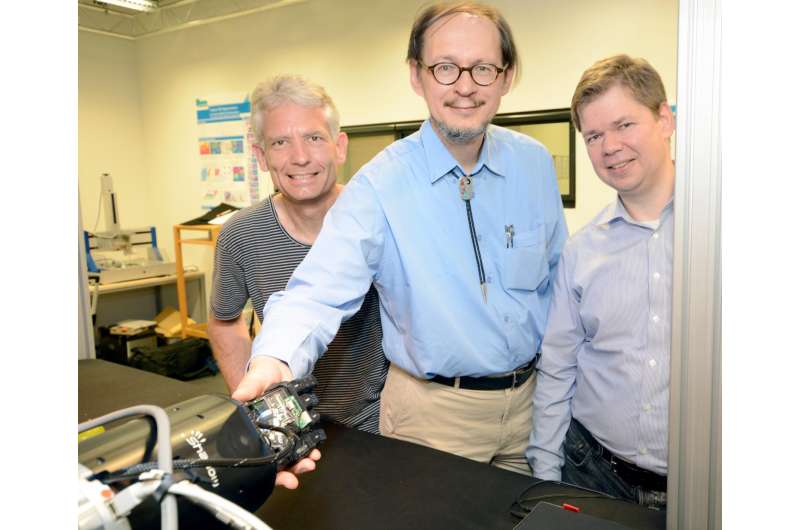

"Our system learns by trying out and exploring on its own—just as babies approach new objects," says neuroinformatics professor Dr. Helge Ritter, who heads the Famula project together with sports scientist and cognitive psychologist Dr. Thomas Schack and robotics expert Dr. Sven Wachsmuth.

The researchers at CITEC, Bielefeld University's Cluster of Excellence Cognitive Interaction Technology, are working on a robot with two hands modeled on human hands in shape and mobility. The robot's "brain" for these hands learns how unfamiliar objects such as pieces of fruit, dishes, or stuffed animals can be distinguished by their color or shape. Different objects afford different actions: a banana can be held, and a button can be pressed. "The system learns to recognize such possibilities as characteristics, and constructs a model for interacting and re-identifying the object," explains Ritter.

To accomplish this, the interdisciplinary project combines work in artificial intelligence with research from other disciplines. Thomas Schack's research group investigated which characteristics study participants perceived as significant in grasping actions. In one study, test subjects had to compare the similarity of more than 100 objects. "It was surprising that weight hardly plays a role. We humans rely mostly on shape and size when we differentiate objects," says Thomas Schack.

In another study, blindfolded test subjects had to handle cubes that differed in weight, shape and size, while infrared cameras recorded their hand movements. "We found out how people touch an object, and which strategies they prefer to use to identify its characteristics," explains Dirk Koester, a member of Schack's research group. "Of course, we also found out which mistakes people make when blindly handling objects."

System Puts Itself in the Position of "Mentor"

Dr. Robert Haschke, a colleague of Helge Ritter, stands in front of a large metal cage containing both robot arms and a table with various test objects. In his role as the system's human learning mentor, Haschke helps it become familiar with novel objects by telling the robot which object on the table to inspect next. To do this, he points to individual objects or gives spoken hints, for example about the direction in which an interesting object can be found. Two monitors display how the system, using color cameras and depth sensors, perceives its surroundings and reacts to instructions from humans.

"In order to understand which objects they should work with, the robot hands have to be able to interpret not only spoken language, but also gestures," explains Sven Wachsmuth, of CITEC's Central Labs. "And they also have to be able to put themselves in the position of a human to also ask themselves if they have correctly understood." Wachsmuth and his team have also given the system a face: Flobi, a stylized robot head that complements the robot's language and actions with facial expressions. From one of the monitors, Flobi follows the movements of the hands and reacts to the researchers' instructions. The Famula system currently uses a virtual version of Flobi.

Understanding Human Interaction

With the Famula project, CITEC researchers are conducting basic research that can benefit self-learning robots of the future in both the home and industry. "We want to literally understand how we learn to understand the environment with our hands. The robot makes it possible for us to test our findings in reality and to rigorously expose the gaps in our understanding. In doing so, we are contributing to the future use of complex, multi-fingered robot hands, which today are still too costly or complex to be used, for instance, in industry," explains Ritter.

Provided by Bielefeld University