Scientists show prediction polls can outdo prediction markets

Ask economists whether prediction markets or prediction polls fare better, and they'll likely favor the former.

In prediction markets, people bet against each other to predict an outcome, such as the chance of someone winning an election. The market represents the crowd's best guess. In a prediction poll, the guesser isn't concerned with what anyone else thinks, essentially betting against himself.

"According to the theory, prediction markets should 'always win' because markets are the most efficient mechanisms for aggregating the wisdom of a crowd. That process should converge on a true prediction," said the University of Pennsylvania's Philip Tetlock, a Penn Integrates Knowledge professor. "But our findings published in Management Science suggest that you can actually get just as much out of a forecasting tournament using prediction surveys."

Tetlock, the Annenberg University Professor, co-authored the paper with Penn's Barbara Mellers, the I. George Heyman University Professor and a PIK professor, and Pavel Atanasov, who completed his Ph.D. at Penn in 2012 and a postdoc there in 2015. The study found that team polls, in which groups of up to 15 people collaborated to make forecasts, produced the most accurate predictions when combined with a statistical algorithm.

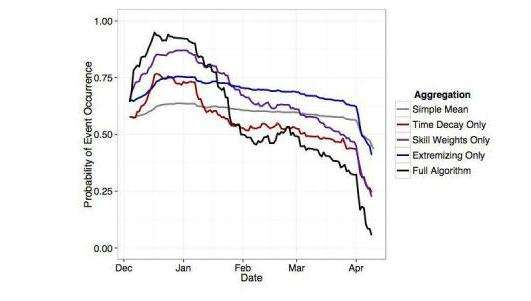

"We used each person's prior track record and behavioral patterns to come up with a weighting scheme that amplified those who were more skilled and lowered the voices of the less skilled," said Atanasov, the study's lead author, who currently works on decision science and prediction at a startup called Pytho. "Accounting for skill improved the overall results."
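The article does not spell out the weighting algorithm, so the following is only a minimal sketch of the general idea Atanasov describes: giving more influence to forecasters with better track records. It assumes that each person's track record is a list of past Brier scores (squared error between a probability forecast and the 0/1 outcome, where lower is better) and that weights are the inverse of the mean past score. The function names `brier`, `skill_weight` and `weighted_forecast` are hypothetical. This is not the study's actual method, which also drew on behavioral patterns.

```python
from statistics import fmean


def brier(prob: float, outcome: int) -> float:
    """Brier score for a binary event: (forecast - outcome)^2, lower is better."""
    return (prob - outcome) ** 2


def skill_weight(past_scores: list[float], eps: float = 1e-6) -> float:
    """Hypothetical skill weight: inverse of the mean past Brier score.

    eps guards against division by zero for a perfect track record.
    """
    return 1.0 / (fmean(past_scores) + eps)


def weighted_forecast(forecasts: dict[str, float],
                      track_records: dict[str, list[float]]) -> float:
    """Combine individual probability forecasts, up-weighting skilled forecasters."""
    weights = {name: skill_weight(track_records[name]) for name in forecasts}
    total = sum(weights.values())
    return sum(weights[name] * p for name, p in forecasts.items()) / total


if __name__ == "__main__":
    # Illustrative, made-up numbers: "alice" has a much better record than "bob".
    records = {"alice": [0.05, 0.10], "bob": [0.40, 0.30]}
    forecasts = {"alice": 0.8, "bob": 0.3}
    print(round(weighted_forecast(forecasts, records), 3))
```

With these made-up inputs the simple average would be 0.55, while the skill-weighted forecast lands near 0.71, pulled toward the forecaster with the stronger record. That is the "amplify the skilled, lower the less skilled" effect in miniature.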

The work stems from The Good Judgment Project, a group of faculty and students from Penn and elsewhere selected to participate in the Intelligence Advanced Research Projects Activity's four-year forecasting tournament that began in 2011. During that competition, five university groups produced their best predictions for 500 questions about topics ranging from whether world leaders would remain in power to which countries the World Health Organization would declare Ebola-free by a certain date.

Penn's team eventually won by producing what it called "superforecasters," who performed in the top two percent of thousands of forecasters. Superforecasters did so well that their combined forecasts outperformed those of the intelligence community by about 30 percent. Mellers and Tetlock describe The Good Judgment Project in a commentary for the Feb. 3, 2017 issue of Science.

The analysis comparing prediction polls to prediction markets documented in Management Science, however, excludes the superforecasters, said Atanasov, who was a postdoctoral scholar with Mellers and Tetlock at The Good Judgment Project.

"At the end of each season of the tournament, we removed superforecasters from the main experiment and let them work in a separate condition," he said. "Then we recruited new participants. Each season started with a mix of the forecasters who continued from a previous season and new additions. But none were superforecasters."

Participants were randomly assigned to one of three conditions: prediction market, independent polls or team polls. The researchers then tracked performance during two 10-month seasons. Each new season brought different designs and different algorithms, all with the aim of generating the most precise crowd-based prediction possible.

"The bottom line is that the team polls produced the most accurate predictions once we combined them with statistical algorithms," Atanasov said. "The market has its own way of identifying skill and up-weighting skilled forecasters based on earnings. In the polling condition, we generated an algorithm that could perform that function."

In the real world, people—particularly those in power—often neglect to make predictions as pointed as those in the tournament, instead using vague wording to prevent the possibility of later being proven wrong. But in a forecasting tournament, the name of the game is accuracy.

Atanasov said, "You get a lot more accurate information because people are willing to let down their defenses and talk in straighter terms."

Mellers has now taken this work a step further in a new three-year undertaking called The Foresight Project, which grew out of The Good Judgment Project. At the beginning and the end of the original tournament, participants were tested on the extent to which they would go out of their way to seek out and pay attention to information that ran counter to their beliefs, a habit that counteracts what's called "confirmation bias."

The research team saw a correlation between years in the tournament and an actively open mind: Longer-standing participants were more open. So Mellers decided to recruit undergraduate, graduate and professional students for a new tournament to test for causality. Mellers' team will complete year one this June and will be recruiting for year two this summer.

"We're looking for ways to pry open closed minds," she said. "We're hoping that tournaments can change the way people think about political events. They learn to take differing perspectives, consider alternative outcomes, put aside their wishful thoughts and preferences. The focus on accuracy is a constant challenge."

It's an exercise in mental control, she added, but one that, if successful, could change political discourse for the better.

More information: Pavel Atanasov et al. Distilling the Wisdom of Crowds: Prediction Markets vs. Prediction Polls, Management Science (2017). DOI: 10.1287/mnsc.2015.2374

Philip E. Tetlock et al. Bringing probability judgments into policy debates via forecasting tournaments, Science (2017). DOI: 10.1126/science.aal3147

Journal information: Science, Management Science

Provided by University of Pennsylvania