A scientist and a supercomputer re-create a tornado

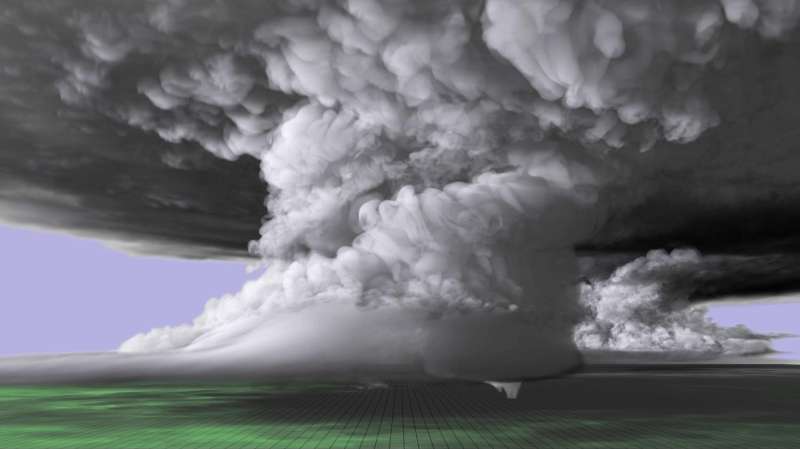

With tornado season fast approaching or already underway in vulnerable states throughout the U.S., new supercomputer simulations are giving meteorologists unprecedented insight into the structure of monstrous thunderstorms and tornadoes. One such recent simulation recreates a tornado-producing supercell thunderstorm that left a path of destruction over the Central Great Plains in 2011.

The person behind that simulation is Leigh Orf, a scientist with the Cooperative Institute for Meteorological Satellite Studies (CIMSS) at the University of Wisconsin-Madison. He leads a group of researchers who use computer models to unveil the moving parts inside tornadoes and the supercells that produce them. The team has developed expertise in creating in-depth visualizations of supercells and in discerning how they form and ultimately spawn tornadoes.

The work is particularly relevant because the U.S. leads the global tornado count with more than 1,200 touchdowns annually, according to the National Oceanic and Atmospheric Administration.

In May 2011, several tornadoes touched down across Oklahoma during a four-day outbreak of storms. One after another, supercells spawned tornadoes that caused significant property damage and loss of life. On May 24, one tornado in particular, the El Reno tornado, was rated EF-5, the strongest category on the Enhanced Fujita scale. It remained on the ground for nearly two hours and left a path of destruction 63 miles long.

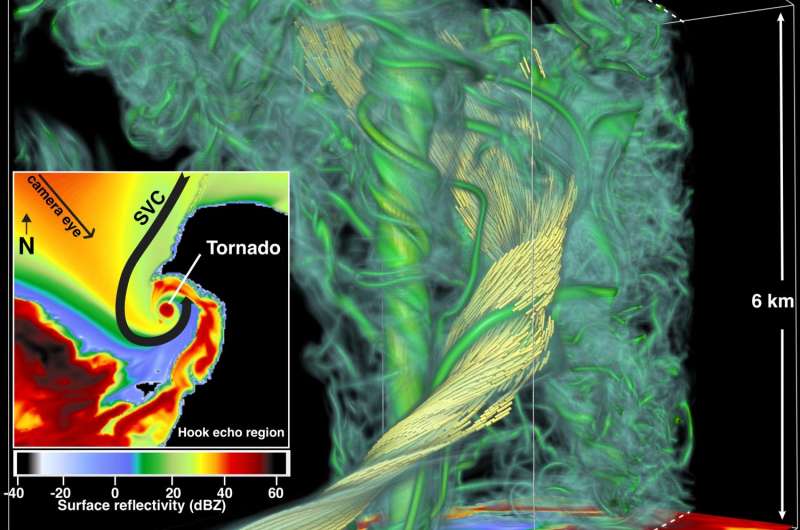

Orf's most recent simulation recreates the El Reno tornado, revealing in high resolution the numerous "mini-tornadoes" that form at the onset of the main tornado. As the main tornado develops, these mini-tornadoes begin to merge, adding to its strength and intensifying its wind speeds. Eventually, new structures form, including what Orf refers to as the streamwise vorticity current (SVC).

"The SVC is made up of rain-cooled air that is sucked into the updraft that drives the whole system," says Orf. "It's believed that this is a crucial part in maintaining the unusually strong storm, but interestingly, the SVC never makes contact with the tornado. Rather, it flows up and around it."

Using archived real-world data, the research team recreated the weather conditions present at the time of the storm and watched the steps leading up to the tornado's formation. The data, taken from a short-term operational model forecast, took the form of an atmospheric sounding: a vertical profile of temperature, air pressure, wind speed and moisture. In the right combination, these conditions can support tornado formation, a process known as tornadogenesis.
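To make the idea of a sounding concrete, here is a minimal Python sketch, assuming a simple list-of-levels layout. The `Level` class, the helper names and every number in the example profile are hypothetical and purely illustrative; they are not the El Reno data or the research group's actual inputs. Real soundings usually also record wind direction, which this sketch uses to compute 0 to 6 km bulk wind shear: the magnitude of the vector difference between the wind at the ground and the wind 6 km up, one common way forecasters summarize how strongly the wind changes with height.

```python
import math
from dataclasses import dataclass


@dataclass
class Level:
    """One level of a (hypothetical) sounding."""
    height_m: float       # height above ground (m)
    pressure_hpa: float   # air pressure (hPa)
    temp_c: float         # temperature (deg C)
    dewpoint_c: float     # dewpoint, a measure of moisture (deg C)
    wind_speed_kt: float  # wind speed (knots)
    wind_dir_deg: float   # direction the wind blows FROM (degrees)


def wind_components(speed, direction_deg):
    """Convert speed and 'from' direction to u (eastward) and v (northward)."""
    rad = math.radians(direction_deg)
    return -speed * math.sin(rad), -speed * math.cos(rad)


def interp_wind(sounding, height_m):
    """Linearly interpolate u and v between the two levels bracketing height_m."""
    for lower, upper in zip(sounding, sounding[1:]):
        if lower.height_m <= height_m <= upper.height_m:
            frac = (height_m - lower.height_m) / (upper.height_m - lower.height_m)
            u0, v0 = wind_components(lower.wind_speed_kt, lower.wind_dir_deg)
            u1, v1 = wind_components(upper.wind_speed_kt, upper.wind_dir_deg)
            return u0 + frac * (u1 - u0), v0 + frac * (v1 - v0)
    raise ValueError("height is outside the sounding")


def bulk_shear(sounding, top_m=6000.0):
    """Magnitude of the vector wind difference between the lowest level and top_m."""
    u_sfc, v_sfc = wind_components(sounding[0].wind_speed_kt, sounding[0].wind_dir_deg)
    u_top, v_top = interp_wind(sounding, top_m)
    return math.hypot(u_top - u_sfc, v_top - v_sfc)


# Made-up profile: warm, moist low levels and winds that strengthen and veer with height.
example_sounding = [
    Level(0, 970, 30, 21, 15, 170),
    Level(1000, 880, 22, 17, 35, 200),
    Level(3000, 700, 9, 3, 40, 230),
    Level(6000, 470, -14, -25, 55, 250),
    Level(9000, 310, -38, -50, 70, 255),
]

# Prints about 54.4 kt for this made-up profile.
print(f"0-6 km bulk shear: {bulk_shear(example_sounding):.1f} kt")
```

Linear interpolation of wind components is a simplification chosen for brevity; real analysis tools typically work with far more levels and more careful interpolation.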

According to Orf, producing a tornado requires a few "non-negotiable" ingredients: abundant moisture, instability and wind shear in the atmosphere, and a trigger that lifts the air, such as a temperature or moisture difference. However, the presence of all these ingredients together does not make a tornado inevitable.

"In nature, it's not uncommon for storms to have what we understand to be all the right ingredients for tornadogenesis and then nothing happens," says Orf. "Storm chasers who track tornadoes are familiar with nature's unpredictability, and our models have been shown to behave similarly."

Orf explains that, unlike a typical computer program written to deliver consistent results, modeling at this level of complexity has inherent variability. In some ways he finds that encouraging, since the real atmosphere exhibits this variability, too.
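The kind of variability Orf describes can be illustrated with a classic textbook stand-in rather than a storm model: the Lorenz (1963) convection equations, a three-variable toy system. The minimal Python sketch that follows is not Orf's model or code. It integrates two copies of the system with a fourth-order Runge-Kutta scheme. Each run is fully deterministic, so identical inputs give identical outputs, yet nudging one starting value by one part in 100 million eventually drives the two trajectories far apart. The parameter values (sigma = 10, rho = 28, beta = 8/3) are the standard chaotic choices; the step size, run length and function names are illustrative assumptions.

```python
import math


def lorenz_deriv(s, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Time derivatives of the Lorenz (1963) system at state s = (x, y, z)."""
    x, y, z = s
    return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)


def rk4_step(s, dt):
    """Advance state s by one fourth-order Runge-Kutta step of size dt."""
    k1 = lorenz_deriv(s)
    k2 = lorenz_deriv(tuple(a + 0.5 * dt * k for a, k in zip(s, k1)))
    k3 = lorenz_deriv(tuple(a + 0.5 * dt * k for a, k in zip(s, k2)))
    k4 = lorenz_deriv(tuple(a + dt * k for a, k in zip(s, k3)))
    return tuple(
        a + dt / 6.0 * (b1 + 2 * b2 + 2 * b3 + b4)
        for a, b1, b2, b3, b4 in zip(s, k1, k2, k3, k4)
    )


def separation_over_time(perturbation=1e-8, dt=0.01, steps=4000):
    """Run two copies, one with x nudged by `perturbation`; return their distance at each step."""
    a = (1.0, 1.0, 1.0)
    b = (1.0 + perturbation, 1.0, 1.0)
    separations = []
    for _ in range(steps):
        a = rk4_step(a, dt)
        b = rk4_step(b, dt)
        separations.append(math.dist(a, b))
    return separations


seps = separation_over_time()
print(f"separation after first step: {seps[0]:.2e}")
print(f"separation after last step:  {seps[-1]:.2e}")
```

Setting `perturbation=0.0` makes the two runs identical at every step, which is the "consistent results" behavior of ordinary code; the divergence comes entirely from the tiny change in starting conditions.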

Modeling success can be limited by the quality of the input data and the processing power of computers. Greater accuracy would come from data on atmospheric conditions immediately before a tornado forms, but collecting that data remains difficult and potentially dangerous. Given the complexity of these storms, subtle (and currently unknown) factors in the atmosphere may influence whether or not a supercell produces a tornado.

Digitally resolving a tornado simulation finely enough to yield valuable information requires immense processing power. Fortunately, Orf had earned access to a high-performance supercomputer designed to handle such complex computing needs: the Blue Waters Supercomputer at the National Center for Supercomputing Applications at the University of Illinois at Urbana-Champaign.

In total, the EF-5 simulation took more than three days to run. A conventional desktop computer, by contrast, would need decades to complete the same processing.

Looking ahead, Orf is working on the next phase of this research and continues to share the group's findings with scientists and meteorologists across the country. In January 2017, the group's research was featured on the cover of the Bulletin of the American Meteorological Society.

"We've completed the EF-5 simulation, but we don't plan to stop there," says Orf. "We are going to keep refining the model and continue to analyze the results to better understand these dangerous and powerful systems."

Provided by University of Wisconsin-Madison