Does 'publication bias' affect the 'canonization' of facts in science?

Researchers model how 'publication bias' does—and doesn't—affect the 'canonization' of facts in science

Arguing in a Boston courtroom in 1770, John Adams famously pronounced, "Facts are stubborn things," which cannot be altered by "our wishes, our inclinations or the dictates of our passion."

But facts, however stubborn, must pass through the trials of human perception before being acknowledged—or "canonized"—as facts. Given this, some may be forgiven for looking at passionate debates over the color of a dress and wondering if facts are up to the challenge.

Carl Bergstrom believes facts stand a fighting chance, especially if science has their back. A professor of biology at the University of Washington, he has used mathematical modeling to investigate the practice of science, and how science could be shaped by the biases and incentives inherent to human institutions.

"Science is a process of revealing facts through experimentation," said Bergstrom. "But science is also a human endeavor, built on human institutions. Scientists seek status and respond to incentives just like anyone else does. So it is worth asking—with precise, answerable questions—if, when and how these incentives affect the practice of science."

In an article published Dec. 20, 2016, in the journal eLife, Bergstrom and co-authors present a mathematical model that explores whether "publication bias" (the tendency of journals to publish mostly positive experimental results) influences how scientists canonize facts. Their results offer a warning that sharing mostly positive results comes with the risk that a false claim could be canonized as fact. But their findings also offer hope by suggesting that simple changes to publication practices can minimize the risk of false canonization.

These issues have become particularly relevant over the past decade, as prominent articles have questioned the reproducibility of scientific experiments—a hallmark of validity for discoveries made using the scientific method. But neither Bergstrom nor most of the scientists engaged in these debates are questioning the validity of heavily studied and thoroughly demonstrated scientific truths, such as evolution, anthropogenic climate change or the general safety of vaccination.

"We're modeling the chances of 'false canonization' of facts on lower levels of the scientific method," said Bergstrom. "Evolution happens, and explains the diversity of life. Climate change is real. But we wanted to model if publication bias increases the risk of false canonization at the lowest levels of fact acquisition."

Bergstrom cites a historical example of false canonization in science that lies close to our hearts—or specifically, below them. Biologists once postulated that bacteria caused stomach ulcers. But in the 1950s, gastroenterologist E.D. Palmer reported evidence that bacteria could not survive in the human gut.

"These findings, supported by the efficacy of antacids, supported the alternative 'chemical theory of ulcer development,' which was subsequently canonized," said Bergstrom. "The problem was that Palmer was using experimental protocols that would not have detected Helicobacter pylori, the bacteria that we know today causes ulcers. It took about a half century to correct this falsehood."

While the idea of false canonization itself may cause dyspepsia, Bergstrom and his team (lead author Silas Nissen of the Niels Bohr Institute in Denmark and co-authors Kevin Gross of North Carolina State University and UW undergraduate student Tali Magidson) set out to model the risks of false canonization given that scientists have incentives to publish only their best, positive results. The so-called "negative results," which reach no clear, definitive conclusion or simply do not affirm a hypothesis, are much less likely to be published in peer-reviewed journals.

"The net effect of publication bias is that negative results are less likely to be seen, read and processed by scientific peers," said Bergstrom. "Is this misleading the canonization process?"

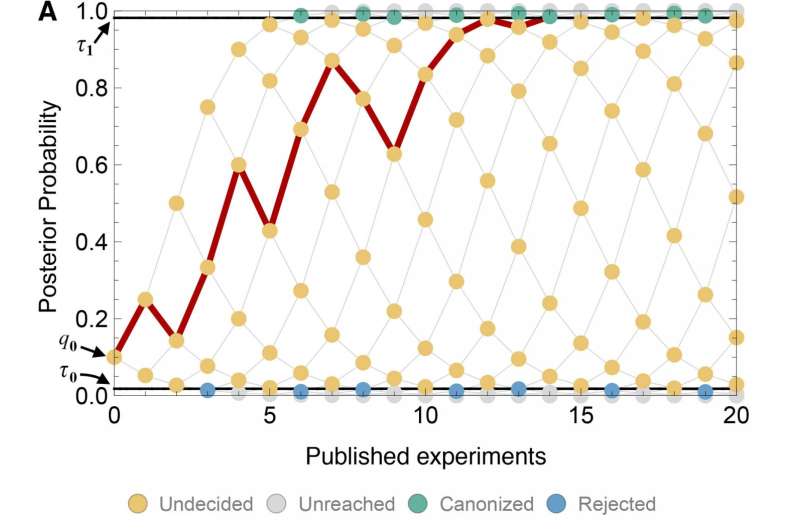

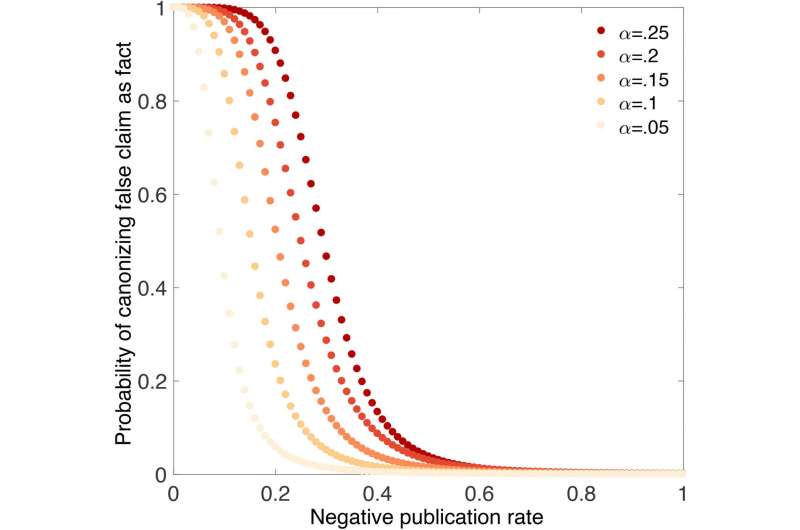

For their model, Bergstrom's team incorporated variables such as the rates of error in experiments, how much evidence is needed to canonize a claim as fact and the frequency with which negative results are published. Their mathematical model showed that the lower the publication rate for negative results, the higher the risk of false canonization. And according to their model, one possible solution, raising the bar for canonization, didn't help alleviate this risk.

"It turns out that requiring more evidence before canonizing a claim as fact did not help," said Bergstrom. "Instead, our model showed that you need to publish more negative results—at least more than we probably are now."
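To make the moving parts of such a model concrete, here is a minimal Python sketch. It is not the authors' code, and every name and default value in it is an assumption chosen for illustration: `alpha` and `beta` stand in for the false-positive and false-negative error rates, `rho` for the chance that a negative result gets published, and `tau_canon` and `tau_reject` for the belief levels at which a claim is canonized or rejected. The sketch also assumes that readers update their belief with Bayes' rule as if every result had been published, so they do not correct for publication bias.

```python
import random


def simulate_claim(is_true, alpha=0.05, beta=0.2, rho=0.1, prior=0.5,
                   tau_reject=0.001, tau_canon=0.999,
                   max_experiments=10_000, rng=None):
    """Follow one claim until it is canonized, rejected, or left undecided.

    All parameter names and defaults are illustrative assumptions.
    """
    if rng is None:
        rng = random.Random()
    # Chance that a single experiment comes out positive.
    p_positive = (1 - beta) if is_true else alpha
    belief = prior
    for _ in range(max_experiments):
        positive = rng.random() < p_positive
        # Positive results are always published; negative ones only
        # with probability rho.
        if not positive and rng.random() >= rho:
            continue
        # Naive Bayesian update that ignores publication bias.
        if positive:
            like_true, like_false = 1 - beta, alpha
        else:
            like_true, like_false = beta, 1 - alpha
        numerator = belief * like_true
        belief = numerator / (numerator + (1 - belief) * like_false)
        if belief >= tau_canon:
            return "canonized"
        if belief <= tau_reject:
            return "rejected"
    return "undecided"


def false_canonization_rate(rho, trials=2000, seed=0, **kwargs):
    """Fraction of false claims that end up canonized at a given rho."""
    rng = random.Random(seed)
    outcomes = [simulate_claim(False, rho=rho, rng=rng, **kwargs)
                for _ in range(trials)]
    return outcomes.count("canonized") / trials


if __name__ == "__main__":
    for rho in (0.05, 0.1, 0.3, 1.0):
        print(f"rho={rho}: {false_canonization_rate(rho):.3f}")
```

Running the script prints the share of false claims that get canonized at several publication rates for negative results. Passing a different `tau_canon` to `false_canonization_rate` is one way to explore, within this toy setup, what happens when the bar for canonization is raised.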

Since most negative results live out their obscurity in the pages of laboratory notebooks, it is difficult to quantify the proportion that is published. But clinical trials, which must be registered with the U.S. Food and Drug Administration before they begin, offer a window into how often negative results make it into the peer-reviewed literature. A 2008 analysis of 74 clinical trials for antidepressant drugs showed that scarcely more than 10 percent of negative results were published, compared with over 90 percent of positive results.

"Negative results are probably published at different rates in other fields of science," said Bergstrom. "And new options today, such as self-publishing papers online and the rise of journals that accept some negative results, may affect this. But in general, we need to share negative results more than we are doing today."

Their model also indicated that negative results had the biggest impact as a claim approached the point of canonization. That finding may offer scientists an easy way to prevent false canonization.

"By more closely scrutinizing claims as they achieve broader acceptance, we could identify false claims and keep them from being canonized," said Bergstrom.

To Bergstrom, the model raises valid questions about how scientists choose to publish and share their findings—both positive and negative. He hopes that their findings pave the way for more detailed exploration of bias in scientific institutions, including the effects of funding sources and the different effects of incentives on different fields of science. But he believes a cultural shift is needed to avoid the risks of publication bias.

"As a community, we tend to say, 'Damn it, this didn't work, and I'm not going to write it up,'" said Bergstrom. "But I'd like scientists to reconsider that tendency, because science is only efficient if we publish a reasonable fraction of our negative findings."

More information: Silas Boye Nissen et al, Publication bias and the canonization of false facts, eLife (2016). DOI: 10.7554/eLife.21451

Journal information: eLife

Provided by University of Washington