'Expansion entropy': A new litmus test for chaos?

Can the flap of a butterfly's wings in Brazil set off a tornado in Texas? This intriguing hypothetical scenario, commonly called "the butterfly effect," has come to embody the popular conception of a chaotic system, in which a small difference in initial conditions will cascade toward a vastly different outcome in the future.

Understanding and modeling chaos can help address a variety of scientific and engineering questions, and so researchers have worked to develop better mathematical definitions of chaos. These definitions, in turn, will aid the construction of models that more accurately represent real-world chaotic systems.

Now, researchers from the University of Maryland have described a new definition of chaos that applies more broadly than previous definitions. This new definition is compact, can be easily approximated by numerical methods and works for many kinds of chaotic systems. The definition could one day help advance computer modeling across a wide variety of disciplines, from medicine to meteorology and beyond. The researchers present their new definition in the July 28, 2015 issue of the journal Chaos.

"Our definition of chaos identifies chaotic behavior even when it lurks in the dark corners of a model," said Brian Hunt, a professor of mathematics with a joint appointment in the Institute for Physical Science and Technology (IPST) at UMD. Hunt co-authored the paper with Edward Ott, a Distinguished University Professor of Physics and Electrical and Computer Engineering with a joint appointment in the Institute for Research in Electronics and Applied Physics (IREAP) at UMD.

The study of chaos is relatively young. MIT meteorologist Edward Lorenz, whose work gave rise to the term "the butterfly effect," first noticed chaotic characteristics in weather models in the mid-20th century. In 1963, he published a set of differential equations to describe atmospheric airflow and noted that tiny variations in initial conditions could drastically alter the solution to the equations over time, making it difficult to predict the weather in the long term.
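Lorenz's observation is easy to reproduce numerically. The sketch below is a minimal illustration that assumes his classic parameter values (σ = 10, ρ = 28, β = 8/3), a fixed-step fourth-order Runge-Kutta integrator and a starting point of (1, 1, 1). The function names are our own. It runs two copies whose starting x-coordinates differ by one part in a billion and records how far apart they drift.

```python
import math

def lorenz(state, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Right-hand side of the Lorenz (1963) equations with the classic parameters."""
    x, y, z = state
    return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)

def rk4_step(state, dt):
    """Advance the Lorenz system by one classical fourth-order Runge-Kutta step."""
    def nudge(s, k, h):
        # Return s + h * k, component by component.
        return tuple(si + h * ki for si, ki in zip(s, k))
    k1 = lorenz(state)
    k2 = lorenz(nudge(state, k1, dt / 2))
    k3 = lorenz(nudge(state, k2, dt / 2))
    k4 = lorenz(nudge(state, k3, dt))
    return tuple(s + dt / 6 * (a + 2 * b + 2 * c + d)
                 for s, a, b, c, d in zip(state, k1, k2, k3, k4))

def separation_history(delta=1e-9, dt=0.01, t_end=40.0):
    """Integrate two runs that start `delta` apart in x; return (t, distance) pairs."""
    a = (1.0, 1.0, 1.0)
    b = (1.0 + delta, 1.0, 1.0)
    history = []
    for n in range(1, round(t_end / dt) + 1):
        a, b = rk4_step(a, dt), rk4_step(b, dt)
        history.append((n * dt, math.dist(a, b)))
    return history
```

Printing a few entries of `separation_history()` should show the gap growing roughly exponentially until it levels off at about the size of the attractor itself. At that point the two "forecasts" have nothing in common.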

Mathematically, extreme sensitivity to initial conditions can be represented by a quantity called a Lyapunov exponent, which measures the average exponential rate at which two infinitesimally close starting points separate over time. The exponent is positive if the points diverge exponentially. Yet Lyapunov exponents have limitations as a definition of chaos: they test for chaos only in particular solutions of a model, not in the model itself, and they can be positive even when the underlying model is too simple to be deemed chaotic.
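For a one-dimensional map `x -> f(x)`, the Lyapunov exponent is the long-run average of `ln|f'(x_n)|` along a solution. The sketch below estimates it for the logistic map `f(x) = r x (1 - x)`. This is a standard textbook example chosen here for illustration, not one taken from the paper. The function name and default parameters are our own. Note that it follows one particular orbit starting from `x0`, which is exactly the limitation described above: it tests a solution, not the model.

```python
import math

def logistic_lyapunov(r, x0=0.2, n_transient=1_000, n_steps=100_000):
    """Estimate the Lyapunov exponent of the logistic map x -> r*x*(1-x).

    Averages ln|f'(x_n)|, with f'(x) = r*(1 - 2x), along the single orbit
    that starts at x0, after discarding n_transient steps.
    """
    x = x0
    for _ in range(n_transient):      # let the orbit settle onto its attractor
        x = r * x * (1.0 - x)
    total = 0.0
    for _ in range(n_steps):
        total += math.log(abs(r * (1.0 - 2.0 * x)))  # local stretching rate
        x = r * x * (1.0 - x)
    return total / n_steps
```

For `r = 4.0` the estimate should come out close to ln 2 ≈ 0.69, a chaotic orbit. For `r = 3.2` the orbit settles onto a stable period-2 cycle and the exponent is negative, ln(0.16)/2 ≈ −0.92.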

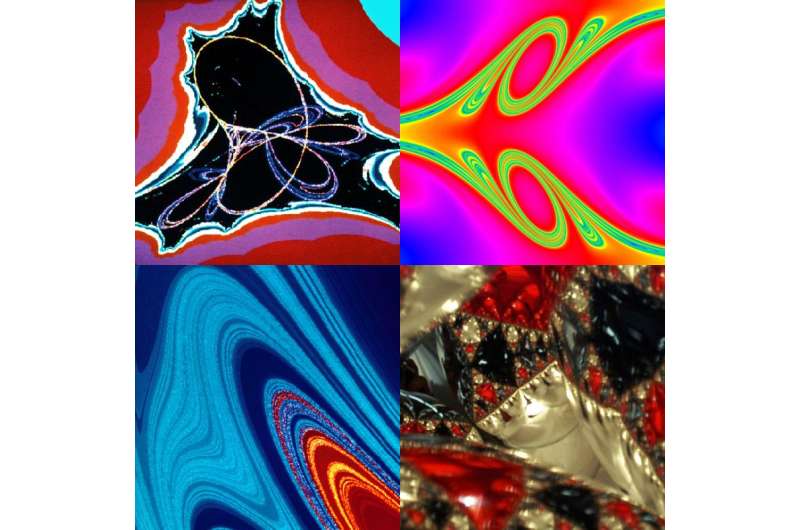

Fittingly, the chaotic solution to Lorenz's equations looks like the two wings of a butterfly. The shape is mathematically categorized as an attractor: solutions that start nearby are drawn onto it, so its chaos is easy to identify by computing Lyapunov exponents along almost any solution. Yet not all chaotic behavior is quite so clear, Hunt explained.

To provide an example, Hunt described four glass-ball Christmas ornaments stacked in a pyramid. Light hitting the shiny spheres reflects off in all directions. Most of the light travels along simple paths, but some photons can become trapped in the interior of the pyramid, bouncing chaotically back and forth between the ornaments. These chaotic light paths are mathematically categorized as repellers. Nearby paths are pushed away from them rather than drawn in, so they can be difficult to find using model equations unless you know exactly where to look.
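A one-dimensional toy version of this escape behavior is the tent map with slope 3 on the interval [0, 1]. This is our own illustrative choice, not an example from the paper. Any point in the open middle third (1/3, 2/3) is thrown out of the interval on the next step, so a randomly chosen start almost surely escapes. The points that never escape form a Cantor set, which is a chaotic repeller. The sketch counts how many random starts survive `T` steps. The function names and sample sizes are our own.

```python
import random

def open_tent(x):
    """Tent map with slope 3: points starting in the middle third leave [0, 1]."""
    return 3.0 * x if x < 0.5 else 3.0 * (1.0 - x)

def survival_fraction(T, n_samples=100_000, seed=1):
    """Fraction of uniformly random starts in [0, 1] that stay in [0, 1] for T steps."""
    rng = random.Random(seed)
    survivors = 0
    for _ in range(n_samples):
        x = rng.random()
        for _ in range(T):
            x = open_tent(x)
            if not 0.0 <= x <= 1.0:   # escaped the interval
                break
        else:                         # loop finished without escaping
            survivors += 1
    return survivors / n_samples
```

Each step keeps two thirds of the remaining interval, so the surviving fraction should be close to (2/3)^T. Random sampling therefore almost never lands on the repeller itself.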

Researchers commonly encounter chaotic repellers in physical and natural systems as varied as plumbing networks, asteroid orbits, chemical reactions, geophysical systems, bird flocks and human organ systems.

To fit the generally recognized forms of chaos under one umbrella definition, Hunt and Ott turned to a concept called entropy. In a system that changes over time, entropy represents the rate at which disorder and uncertainty build up.

The idea that entropy could be a proxy for chaos is not new, but the standard definitions of entropy, such as metric entropy and topological entropy, are trapped in the mathematical equivalent of a straitjacket. They are hard to compute, and they come with stringent prerequisites that make them difficult or impossible to apply to many physical and biological systems of interest to scientists.

Hunt and Ott's work defines a new, flexible type of entropy, called expansion entropy, which can be applied to more realistic models of the world. The researchers also broadened their definition of chaos by including systems where external factors continue to push or pull on the model as it evolves. The definition can be approximated accurately by a computer and can accommodate systems, such as regional weather models, that are forced by potentially chaotic inputs. The researchers define chaotic models as those that exhibit positive expansion entropy.
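This article does not spell out the formula, so the following is a minimal sketch based on our reading of the paper's definition. Treat that reading as an assumption. For a map `f` and a bounded restraining region `S`, take the factor `G` by which `f^T` expands volumes near `x`: the product of the Jacobian's singular values that exceed 1, or 1 if none do. Average `G` over starting points `x` in `S` whose orbits stay in `S` for all `T` steps. Expansion entropy is the limit of `ln(average) / T` as `T` grows. In one dimension, `G` reduces to `max(1, |(f^T)'(x)|)`. The function names, the uniform Monte Carlo sampling, the finite `T` and the two example maps are our own choices.

```python
import math
import random

def expansion_entropy_1d(f, df, lo, hi, T, n_samples=200_000, seed=0):
    """Monte Carlo estimate of ln(E_T) / T for a 1D map f on S = [lo, hi].

    Assumed definition: E_T averages G = max(1, |(f^T)'(x)|) over starts x
    drawn uniformly from S; starts whose orbit leaves S within T steps add 0.
    """
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_samples):
        x = rng.uniform(lo, hi)
        deriv = 1.0
        for _ in range(T):
            deriv *= df(x)          # chain rule: (f^T)' is the product of f'(x_t)
            x = f(x)
            if not lo <= x <= hi:   # orbit left the restraining region
                break
        else:
            total += max(1.0, abs(deriv))
    if total == 0.0:
        return float("-inf")        # no orbit survived T steps
    return math.log(total / n_samples) / T

# Illustrative maps (hypothetical examples, not taken from the paper).
def open_tent(x):
    """Slope-3 tent map: points in the middle third leave [0, 1]."""
    return 3.0 * x if x < 0.5 else 3.0 * (1.0 - x)

def open_tent_deriv(x):
    return 3.0 if x < 0.5 else -3.0

def linear_stretch(x):
    """x -> 2x: stretches every orbit, but every start except 0 leaves [0, 1]."""
    return 2.0 * x

def linear_stretch_deriv(x):
    return 2.0
```

For the slope-3 tent map, two thirds of the remaining points survive each step while survivors are stretched by a factor of 3. The estimate should therefore be close to ln 2 > 0, even though almost every trajectory leaves the interval: the chaos lives on a repeller. The map `x -> 2x` also stretches every orbit, with a Lyapunov exponent of ln 2. On [0, 1], however, the shrinking set of survivors exactly offsets the stretching, and the estimate comes out near 0.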

The researchers hope expansion entropy will become a simple, commonly used tool to identify chaos in a wide range of model systems. Pinpointing chaos in a system can be a first step to determining whether the system can ultimately be controlled.

For example, Hunt explained, two identical chaotic systems with different initial conditions may evolve completely differently, but if the systems are forced by the same external inputs they may start to synchronize. By applying the expansion entropy definition of chaos and characterizing whether the original systems respond chaotically to those inputs, researchers can tell whether they can wrest some control over the chaos through inputs to the system.
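The synchronization idea can be illustrated with a simple drive-response setup. This is a standard construction used here only as an example, not the method of the paper. Two identical copies of a response map start from different states and receive the same chaotic input `x_n`. Here the input is a logistic-map orbit, which is our assumption. A hypothetical coupling strength `k` sets how strongly the input pushes on the copies.

```python
def logistic(x):
    """Fully chaotic logistic map, used both as the drive and inside the response."""
    return 4.0 * x * (1.0 - x)

def driven_pair(k, ya=0.1, yb=0.7, x0=0.3, n_steps=200):
    """Return |ya - yb| after n_steps for two identical driven response maps.

    Response (hypothetical): y_{n+1} = (1 - k) * logistic(y_n) + k * x_{n+1},
    where the shared drive x_n is itself a chaotic logistic orbit.
    """
    x = x0
    for _ in range(n_steps):
        x = logistic(x)                      # common external input
        ya = (1 - k) * logistic(ya) + k * x
        yb = (1 - k) * logistic(yb) + k * x
    return abs(ya - yb)
```

With strong forcing (`k = 0.9`), the separation between the copies shrinks by at least a factor of 0.4 per step, because the logistic map stretches differences on [0, 1] by at most a factor of 4. The two copies therefore lock together even though each follows a chaotic drive. With weak forcing, such as `k = 0.1`, that bound no longer guarantees contraction, and the copies are expected to keep wandering apart. That would indicate that the response to the input is itself chaotic.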

This type of control could be used, for example, to design highly secure communications systems and more effective pacemakers for the heart, Hunt said.

More information: Brian Hunt and Edward Ott, "Defining Chaos," Chaos: An Interdisciplinary Journal of Nonlinear Science, published July 28, 2015. DOI: 10.1063/1.4922973


Provided by University of Maryland