Mastering magnetic reconnection

On March 12, National Aeronautics and Space Administration (NASA) scientists launched four observational satellites into space, officially beginning the Magnetospheric Multiscale (MMS) Mission. The diminutive spacecraft, each measuring 11 feet by 4 feet, will let scientists observe a giant mystery, one of the cosmos's most fundamental and least understood processes: magnetic reconnection.

Magnetic reconnection occurs within electrically charged gases called plasmas. The charged particles in a plasma interact strongly with magnetic fields, and at the same time their motions modify those fields.

To understand this delicate interplay, imagine the magnetic field lines as rubber bands embedded within the plasma. As the plasma moves, the lines can become highly stressed in certain regions, like rubber bands stretched almost to snapping. When these stresses are too large, the field lines can abruptly change their connectivity, converting the magnetic energy into heat and motion much like when a rubber band snaps.

The most common observable form of magnetic reconnection, a solar flare, sends a cloud of particles and radiation streaming out from the sun's surface. In some cases these clouds, known as coronal mass ejections, can knock out Earth's telecommunications satellites and pose serious risks for astronauts.

The magnetosphere is a giant plasma bubble around our planet. Studying magnetic reconnection gives scientists insight into how it functions. They know that reconnection in Earth's magnetosphere can open up gaps, allowing very energetic particles to enter.

Scientists can observe this phenomenon on Earth to an extent, and laboratory experiments can employ plasmas to study magnetic reconnection. However, to gain a full understanding of magnetic reconnection in space, scientists need the collaboration of MMS researchers and high-performance computing experts.

For 5 years a team led by William Daughton of Los Alamos National Laboratory (LANL) has been simulating magnetic reconnection in space using the Cray XK7 Titan supercomputer and its predecessor, the Cray XT5 Jaguar supercomputer, at the Oak Ridge Leadership Computing Facility (OLCF), a US Department of Energy (DOE) Office of Science User Facility located at DOE's Oak Ridge National Laboratory.

"Magnetic reconnection is a fundamental process," Daughton said. "It occurs in laboratory fusion machines, it occurs around our planet in the magnetosphere, and it plays a key role in space weather. In addition, there is a whole range of astrophysical problems related to reconnection, and there is growing evidence that it may be playing a key role in accelerating highly energetic particles. It's a basic science issue that people are trying to understand, and our team is working to put together pieces of this complex puzzle."

From plasma sheets to particles

Scientists have simulated magnetic reconnection in two dimensions for some time, but simulating the phenomenon in three dimensions yields a more accurate picture. The third dimension, however, increases the complexity and computational intensity of the simulations.

Whether the simulations are done in two or three dimensions, scientists have to compute the complex physics associated with the plasma dynamics.

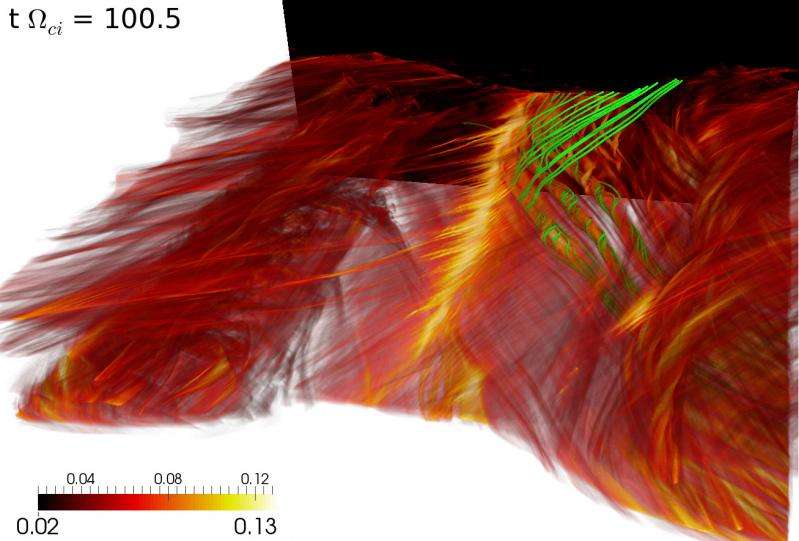

Reconnection often occurs in current sheets, thin sheets of plasma that carry electrical currents and rotating magnetic fields. Researchers have to simulate the macroscopic interactions between the magnetic fields within the plasma, but for precise results they also have to accurately simulate, at microscopic scales, the motions of individual particles, each of which interacts collectively with many other particles.

"Each particle in our simulations requires about 32 bytes of memory, so to track a trillion particles in a simulation, you need 32 terabytes of memory," Daughton said. "Currently our largest runs have consisted of 2 to 3 trillion particles."

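The memory figure Daughton quotes can be checked with a few lines of arithmetic. The sketch below is only an illustration: the function name is hypothetical, a terabyte is assumed to mean 10^12 bytes, and only particle storage is counted (fields, diagnostics, and other buffers in a real run are ignored).

```python
# Rough particle-memory estimate based on the figures quoted in the article.
BYTES_PER_PARTICLE = 32   # "about 32 bytes" per particle, as quoted
TERABYTE = 10**12         # assumption: decimal terabyte


def particle_memory_terabytes(num_particles: int,
                              bytes_per_particle: int = BYTES_PER_PARTICLE) -> float:
    """Return the memory needed to hold the particles alone, in terabytes."""
    return num_particles * bytes_per_particle / TERABYTE


if __name__ == "__main__":
    for trillions in (1, 2, 3):
        tb = particle_memory_terabytes(trillions * 10**12)
        print(f"{trillions} trillion particles -> {tb:.0f} TB")
```

Under these assumptions, the team's largest runs of 2 to 3 trillion particles would need roughly 64 to 96 terabytes for particle data alone.
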
The large number of particles required to model the physics is a result of the vast separation of spatial and temporal scales. The smallest regions of interest for reconnection involve the motions of the electrons in the plasma, motions that are much smaller than the corresponding motions of the ions. The macroscopic scale of the magnetosphere is, of course, much larger than any of these microscopic scales.

The team uses two primary codes. The central processing unit–centric vector particle-in-cell (VPIC) code is capable of very large production runs on Titan, whereas the new particle simulation code, PSC, developed by Kai Germaschewski at the University of New Hampshire, shows a sixfold speedup over VPIC on two-dimensional models. Germaschewski is, however, continuing PSC development to make full use of Titan's hybrid compute architecture.

In addition to Titan's computing power, Daughton noted, the OLCF's data analysis and management resources have played a big role in the project's success. The team uses the ParaView application on the OLCF's Rhea Linux cluster to quickly view runs and analyze data from Titan. The team has also had a good experience moving large amounts of data on and off the High-Performance Storage System at the center.

In contrast to approximate fluid models sometimes used to model reconnection, the team's simulations on Titan offer an ab initio description of the physics. This puts the Daughton team in a unique position to compare large simulation data sets with the unprecedented level of detail MMS is expected to capture in space. "The MMS instruments will gather electron particle data 100 times faster than previous missions," Daughton said. "These measurements go to the very heart of how this process works at the most basic level."

A higher-fidelity future

Whereas two-dimensional magnetic reconnection simulations have been improving for nearly 20 years, three-dimensional simulations are offering unprecedented detail and insight. However, current-generation supercomputers cannot simulate large enough systems at long enough timescales to solve some of the most difficult physics problems.

"We don't really know if we are running large enough simulations to allow the full complexity of what really happens during reconnection to be captured," Daughton said.

Though the team can consistently simulate 2 trillion to 3 trillion particles at a time, adding more particles and simulating them over longer periods than the current 200,000 to 300,000 time steps will help researchers gain a more complete understanding of the complex plasma dynamics.

Daughton noted that a tenfold to fiftyfold increase in computing power would likely resolve questions researchers have not had the resources to answer. This increase falls in line with the expected jump in computing power from Titan to the next OLCF supercomputer, Summit, set to begin production runs in 2018.

More powerful computers handle more complex problems, which in turn create larger data sets. Simulations like those performed by the Daughton team already demand substantial data importing and exporting, and that trend is likely to continue. In fact, the extreme size of some data sets is requiring a different approach to analysis.

"Each time you do bigger simulations, it becomes a bigger headache to analyze and make sense of your data," Daughton said. "For these really huge runs, people are going to have to move to some in situ analysis and visualization because they just won't be able to save everything." In preparation the team is currently collaborating with another LANL group that is developing methods for analyzing data during simulation.

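The idea behind in situ analysis can be shown with a toy example: instead of writing every particle to disk at every time step, the simulation loop reduces the data to a small summary while it runs. The sketch below is not the team's method or the LANL group's software. The function name, the random "kick" used in place of real plasma physics, and the histogram parameters are all assumptions chosen for illustration.

```python
import random


def in_situ_energy_histogram(num_particles, num_steps,
                             num_bins=8, max_energy=4.0, seed=0):
    """Toy loop that keeps one small energy histogram per step
    instead of saving the full particle state."""
    rng = random.Random(seed)
    # Hypothetical initial state: one velocity component per particle.
    velocities = [rng.gauss(0.0, 1.0) for _ in range(num_particles)]
    histograms = []
    for _ in range(num_steps):
        # Placeholder update: random kicks, NOT a plasma model.
        velocities = [v + rng.gauss(0.0, 0.1) for v in velocities]
        # In situ reduction: bin kinetic energies while data is in memory.
        counts = [0] * num_bins
        for v in velocities:
            energy = 0.5 * v * v
            # Energies above max_energy fall into the last bin.
            index = min(int(energy / max_energy * num_bins), num_bins - 1)
            counts[index] += 1
        histograms.append(counts)
    return histograms


if __name__ == "__main__":
    for step, counts in enumerate(in_situ_energy_histogram(1000, 3)):
        print(step, counts)
```

Each step here stores 8 integers rather than 1,000 particle values. The same trade, a compact summary in exchange for discarding raw data, is what makes the approach attractive when runs involve trillions of particles.
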
Daughton added that this research does not apply only to space plasmas. For instance, researchers studying fusion reactors also benefit from higher-fidelity plasma simulations because they offer a greater understanding of how plasma behaves under the influence of magnetic forces. "That's why I think this whole project is so neat," Daughton said. "Within the discipline of plasma physics, magnetic reconnection arises in a great number of problems, so it is important to understand at a basic level."

Provided by Oak Ridge National Laboratory