Citizen scientists rival experts in analyzing land-cover data

Over the past 5 years, IIASA researchers on the Geo-Wiki project have been leading a team of citizen scientists who examine satellite data to categorize land cover or identify places where people live and farm. These data have led to several publications in peer-reviewed journals.

"One question we always get is whether the analysis from laypeople is as good as that from experts. Can we rely on non-experts to provide accurate data analysis?" says IIASA researcher Linda See, who led the study published in the journal PLOS ONE.

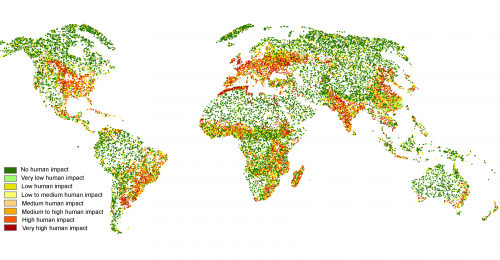

The researchers compared 53,000 data points analyzed by more than 60 individuals, including experts and non-experts in remote sensing and geospatial sciences. The new study shows that, when working with satellite land-cover data, non-experts were as good as experts at identifying human impact, a concept that has emerged from the ecological sciences.

However, the study also showed that experts were better at identifying specific land-cover types such as forest, farmland, grassland, or desert. When presented with control data for which researchers knew the land-cover type, experts identified land cover correctly 69% of the time, while non-experts made correct classifications only 62% of the time. The researchers suggest that interactive training and feedback could help non-experts learn to make better classifications and improve these numbers in the future.
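As a rough illustration of how such percentages can be computed, the sketch below scores each group's submitted labels against control points with known land cover. The function name, record layout, and toy records are assumptions made for this example; they are not taken from the study or from Geo-Wiki.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Return the share of correct classifications per group.

    records: iterable of (group, submitted_label, control_label) tuples.
    This layout is a hypothetical one chosen for illustration.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, submitted, control in records:
        total[group] += 1
        if submitted == control:
            correct[group] += 1
    return {group: correct[group] / total[group] for group in total}


# Made-up toy records, not data from the study.
toy_records = [
    ("expert", "forest", "forest"),
    ("expert", "farmland", "farmland"),
    ("expert", "desert", "desert"),
    ("expert", "grassland", "farmland"),
    ("non-expert", "forest", "forest"),
    ("non-expert", "desert", "grassland"),
    ("non-expert", "farmland", "farmland"),
    ("non-expert", "forest", "farmland"),
]

toy_accuracy = accuracy_by_group(toy_records)
```

With real control data, the same ratio of correct to total classifications per group would yield figures comparable to the 69% and 62% reported above.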

"Citizen science has actually been around for a very long time in many different guises," says See. "But as the internet continues to proliferate in the developing world, and mobile devices and wearable sensors become the norm, we will see an explosion of activity in this area. Studies like this are critical for establishing the quality of data coming from citizens. What we need is more tools and techniques for helping us to continually improve this quality, and more partnerships with NGOs, industry, government and academia that actively involve and trust citizens."

More information: See et al., 2013. Comparing the quality of crowdsourced data contributed by experts and non-experts. PLOS ONE. dx.plos.org/10.1371/journal.pone.0069958

Journal information: PLOS ONE

Provided by International Institute for Applied Systems Analysis