In first head-to-head speed test with conventional computing, quantum computer wins

(Phys.org) —A computer science professor at Amherst College who recently devised and conducted experiments to test the speed of a quantum computing system against conventional computing methods will soon be presenting a paper with her verdict: quantum computing is, "in some cases, really, really fast."

"Ours is the first paper to my knowledge that compares the quantum approach to conventional methods using the same set of problems," says Catherine McGeoch, the Beitzel Professor in Technology and Society (Computer Science) at Amherst. "I'm not claiming that this is the last word, but it's a first word, a start in trying to sort out what it can do and can't do."

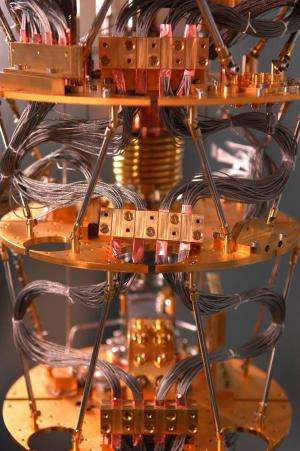

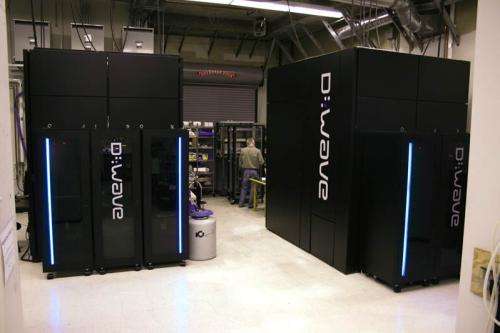

The quantum computer system she was testing, produced by D-Wave just outside Vancouver, BC, has a thumbnail-sized chip that is stored in a dilution refrigerator within a shielded cabinet at near absolute zero (0.02 kelvin) in order to perform its calculations. Whereas conventional computing is binary, 1s and 0s get mashed up in quantum computing, and within that super-cooled (and non-observable) state of flux, a lightning-quick logic takes place, capable of solving problems thousands of times faster than conventional computing methods can, according to her findings.

"You think you're in Dr. Seuss land," McGeoch says. "It's such a whole different approach to computation that you have to wrap your head around this new way of doing things in order to decide how to evaluate it. It's like comparing apples and oranges, or apples and fish, and the difficulty was coming up with experiments and analyses that allowed you to say you'd compared things properly. It definitely was the oddest set of problems I've ever coped with."

McGeoch, author of A Guide to Experimental Algorithmics (Cambridge University Press, 2012), has 25 years of experience setting up experiments to test various facets of computing speed, and is one of the founders of "experimental algorithmics," which she jokingly calls an "oddball niche" of computer science. Her specialty is, however, proving increasingly helpful in trying to evaluate different types of computing performance.

That's why she spent a month last fall at D-Wave, which has produced what it claims is the world's first commercially available quantum computing system. Geordie Rose, D-Wave's founder and Chief Technical Officer, retained McGeoch as an outside consultant to help devise experiments that would test its machines against conventional computers and algorithms.

McGeoch will present her analysis at the peer-reviewed 2013 Association for Computing Machinery (ACM) International Conference on Computing Frontiers in Ischia, Italy, on May 15. Her 10-page paper, titled "Experimental Evaluation of an Adiabiatic Quantum System for Combinatorial Optimization," was co-authored with Cong Wang, a graduate student at Simon Fraser University.

McGeoch says the calculations the D-Wave excels at involve a specific combinatorial optimization problem, comparable in difficulty to the more famous "travelling salesperson" problem that's been a foundation of theoretical computing for decades.

Briefly stated, the travelling salesperson problem asks this question: Given a list of cities and the distances between each pair of cities, what is the shortest possible route that visits each city exactly once and returns to the original city? Questions like this apply to challenges such as shipping logistics, flight scheduling, search optimization, DNA analysis and encryption, and are extremely difficult to answer quickly. The D-Wave computer has the greatest potential in this area, McGeoch says.
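To make the question concrete, here is a minimal Python sketch that answers it by brute force for a tiny, made-up set of four cities. The function name `shortest_tour` and the distance table `DIST` are illustrative assumptions, not anything taken from McGeoch's experiments or from D-Wave's system, which work on a different but comparably hard problem. The sketch simply tries every possible route, which is why the approach stops being practical very quickly: the number of routes grows factorially with the number of cities.

```python
from itertools import permutations


def shortest_tour(dist):
    """Return (length, tour) of the shortest round trip starting and ending at city 0.

    `dist` is a square table where dist[a][b] is the distance from city a to city b.
    Every ordering of the remaining cities is tried, so this is only usable for
    a handful of cities.
    """
    n = len(dist)
    best_len, best_tour = float("inf"), None
    for perm in permutations(range(1, n)):
        tour = (0,) + perm + (0,)  # leave city 0, visit the rest once, come back
        length = sum(dist[a][b] for a, b in zip(tour, tour[1:]))
        if length < best_len:  # keep the first shortest route found
            best_len, best_tour = length, tour
    return best_len, best_tour


# Hypothetical distances between four cities (symmetric, made up for illustration).
DIST = [
    [0, 2, 9, 10],
    [2, 0, 6, 4],
    [9, 6, 0, 3],
    [10, 4, 3, 0],
]

if __name__ == "__main__":
    length, tour = shortest_tour(DIST)
    print(f"Shortest route {tour} has length {length}")
```

With four cities there are only six routes to check; with 20 cities there would already be more than 10^17, which gives a sense of why faster ways of attacking problems of this kind attract so much interest.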

"This type of computer is not intended for surfing the internet, but it does solve this narrow but important type of problem really, really fast," McGeoch says. "There are degrees of what it can do. If you want it to solve the exact problem it's built to solve, at the problem sizes I tested, it's thousands of times faster than anything I'm aware of. If you want it to solve more general problems of that size, I would say it competes – it does as well as some of the best things I've looked at. At this point it's merely above average but shows a promising scaling trajectory."

McGeoch, who has spent her academic career in computer science, doesn't take a stance on whether the D-Wave is a true quantum computer or not, a notion some physicists take issue with.

"Whether or not it's a quantum computer, it's an interesting approach to solving these problems that is worth studying," she says.

Whether the D-Wave computer will ever have mass market appeal is also difficult for McGeoch to assess. While the 439-qubit model she tested does have incredible computing power, there is that near-zero Kelvin chip operating temperature requirement that would make home or office use a chilly proposition. At present, she thinks the power of the D-Wave approach is too narrowly focused to be of much use to the average personal computer user.

"The founder of IBM famously predicted that only about five of his company's first computers would be sold because he just didn't see the need for that much computing power," McGeoch says. "Who needs to solve those big problems now? I'd say it's probably going to be big companies like Google and government agencies."

And, while conventional approaches to solving these problems will likely continue to improve incrementally, this fast quantum approach has the potential to expand to a larger variety of problems than it does now, McGeoch says.

"Within a year or two I think these quantum computing methods will solve more and bigger problems significantly faster than the best conventional computing options out there," she says.

At the same time, she cautions that her first set of experiments represents a snapshot moment of the state of quantum computing versus conventional computing.

"This by no means settles the question of how fast the quantum computer is," she says. "That's going to take a lot more testing and a variety of experiments. It may not be a question that ever gets answered because there's always going to be progress in both quantum and conventional computing."

Provided by Amherst College