Researchers advance scientific computing with record-setting simulations

(Phys.org)—Breaking new ground for scientific computing, two teams of Department of Energy (DOE) scientists have for the first time exceeded a sustained performance level of 10 petaflops (quadrillion floating point operations per second) on the Sequoia supercomputer at the National Nuclear Security Administration's (NNSA) Lawrence Livermore National Laboratory (LLNL).

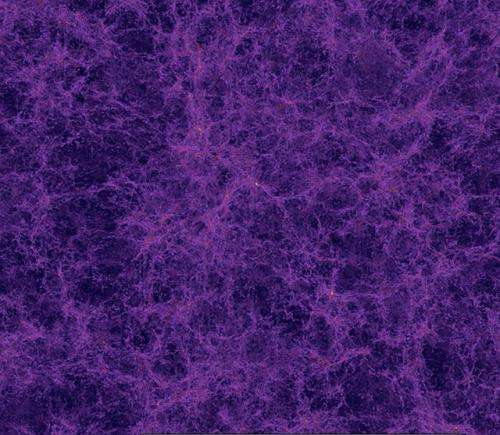

A team led by Argonne National Laboratory used the recently developed Hardware/Hybrid Accelerated Cosmology Codes (HACC) framework to achieve nearly 14 petaflops on the 20-petaflop Sequoia, an IBM Blue Gene/Q supercomputer, in a record-setting benchmark run with 3.6 trillion simulation particles. HACC gives cosmologists the ability to simulate entire survey-sized volumes of the universe at high resolution and to track billions of individual galaxies.

Simulations of this kind are required by the next generation of cosmological surveys to help elucidate the nature of dark energy and dark matter. The HACC framework is designed for extreme performance in the weak-scaling limit (high levels of memory utilization) by integrating innovative algorithms and programming paradigms in a way that adapts easily to different computer architectures. The HACC team is now conducting a fully instrumented science run with more than a trillion particles on Argonne's 10-petaflop Mira system, also an IBM Blue Gene/Q system.

"The performance of these applications on Mira and Sequoia provides an early glimpse of the transformational science these machines make possible—science important to DOE missions," said Barbara Helland, of DOE's Office of Science. "By pushing the state-of-the-art, these two teams of scientists are advancing science and also the know-how to use these new resources to produce insight and discovery."

LLNL, in collaboration with scientists at IBM Research, created a new simulation capability called Cardioid to realistically and rapidly model a beating human heart at near-cellular resolution. The highly scalable code models in exquisite detail the electrophysiology of the human heart, including activation of heart muscle cells and cell-to-cell electrical coupling. Because the code was developed to run with high efficiency in the extreme strong-scaling limit, the scientists achieved a performance of nearly 12 petaflops on Sequoia and demonstrated the ability to model a highly resolved whole heart beating in very nearly real time (67.2 seconds of wall-clock time to model 60 seconds of real time). Using Cardioid, the team performed groundbreaking simulations that demonstrated, for the first time in a whole-heart simulation, the generation of a reentrant activation pattern that often leads to a kind of arrhythmia known as Torsades de Pointes, which can result in sudden cardiac death. The potential to elucidate detailed mechanisms of arrhythmia will have an impact on a multitude of applications in medicine, pharmaceuticals and implantable devices.
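To put the "very nearly real time" figure in concrete terms, the ratio of wall-clock time to simulated time can be worked out directly from the two numbers quoted for Cardioid. The short Python sketch below is only an illustration of that arithmetic; the function name `real_time_factor` is a hypothetical helper and is not part of Cardioid.

```python
def real_time_factor(wall_clock_seconds, simulated_seconds):
    """Ratio of compute time to simulated time; 1.0 means exact real time."""
    return wall_clock_seconds / simulated_seconds


# Figures quoted in the article: 67.2 s of wall-clock time for 60 s of heartbeat.
factor = real_time_factor(67.2, 60.0)
percent_slower = (factor - 1.0) * 100.0

print(f"real-time factor: {factor:.2f}")
print(f"slower than real time by about {percent_slower:.0f} percent")
```

A factor of 1.0 would mean the simulated heart beats exactly as fast as a real one; the quoted run comes out roughly 12 percent slower than that.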

"A vital DOE/NNSA mission is to push the state-of-the-art in high performance computing to not only ensure the nation's security but its technological and economic competitiveness," said NNSA Advanced Simulation and Computing Director Bob Meisner. "Sequoia and Mira are powerful computational engines that allow our skilled teams to run applications such as Cardioid and HACC at very high levels of performance. What we learn from these early science applications will inform a broad range of scientific computing including our national security applications."

Sequoia at Livermore and Mira at Argonne represent the third generation of IBM Blue Gene supercomputers. Sequoia, second on the TOP500 list with 98,304 nodes (1.57 million processor cores), and Mira, fourth on the list with 49,152 nodes (786,432 processor cores), allow execution of massive calculations in parallel. Both teams took full advantage of the five levels of parallelism available in the hardware to sustain 58.8 percent of the machine's theoretical peak performance for Cardioid and an astounding 69.2 percent for HACC, as well as near-perfect scaling. Strong scaling measures the ability to speed up a fixed-size problem by using more processors, so that, ideally, a given simulation finishes in one-hundredth of the time by using 100 times as many processors. Weak scaling measures the ability to increase the size of a problem by using more processors, so that, ideally, in a given time, a simulation 100 times larger is executed by using 100 times as many processors.
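The scaling definitions and percent-of-peak figures above come down to simple ratios. The Python sketch below is a minimal illustration, not code from either team. The function names are hypothetical, the timings in the example are invented to show the ideal case, and the peak is taken as the rounded 20 petaflops quoted in this article.

```python
def strong_scaling_efficiency(t_base, t_scaled, processor_ratio):
    """Fixed problem size: ideally, run time shrinks by processor_ratio."""
    speedup = t_base / t_scaled
    return speedup / processor_ratio


def weak_scaling_efficiency(t_base, t_scaled):
    """Problem size grows with processor count: ideally, run time is unchanged."""
    return t_base / t_scaled


def sustained_petaflops(peak_petaflops, fraction_of_peak):
    """Sustained rate implied by a quoted fraction of theoretical peak."""
    return peak_petaflops * fraction_of_peak


if __name__ == "__main__":
    # Invented timings: 100x the processors, 1/100 of the time (perfect strong scaling).
    print(strong_scaling_efficiency(t_base=100.0, t_scaled=1.0, processor_ratio=100))

    # Invented timings: 100x the processors, 100x the problem, same time (perfect weak scaling).
    print(weak_scaling_efficiency(t_base=50.0, t_scaled=50.0))

    # Fractions of peak quoted in the article, against a rounded 20-petaflop peak.
    print(f"HACC:     {sustained_petaflops(20.0, 0.692):.2f} petaflops")
    print(f"Cardioid: {sustained_petaflops(20.0, 0.588):.2f} petaflops")
```

An efficiency of 1.0 corresponds to the perfect scaling described in the text. With the rounded peak, the two fractions work out to just under 14 and just under 12 petaflops, which matches the sustained rates reported for HACC and Cardioid.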

Provided by Argonne National Laboratory