Supercomputer simulations to help predict tornadoes

Each year, tornadoes tear across the United States, causing numerous deaths and physical damage to the environment and infrastructure.

The National Weather Service estimates there have been about 1,475 tornadoes this year alone, outpacing the last decade's yearly average of 1,274.

Many of these spinning giants strike in the plains states between the Rocky and Appalachian Mountains. So researchers at the University of Oklahoma (OU), in the heart of Tornado Alley, want to know how tornadoes form and how to better predict them.

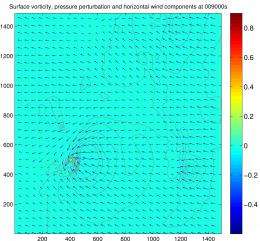

The task involves assembling data on more storm variables (such as updraft, downdraft and vorticity, or regions of spin) than tornado chasers on the ground can observe, or even than actually occur in the atmosphere. For example, researchers need to better understand how changes in wind direction with height cause the updrafts in a storm to rotate, preceding the formation of a tornado.

To solve the quandary, Amy McGovern, an associate professor in OU's School of Computer Science, and her team create tornado models with supercomputers that can process vast amounts of data. McGovern and her colleagues use the models to analyze how storm variables interact in order to distinguish tornadic from non-tornadic storms.

"The goals of our research are to fundamentally transform our understanding of the formation of tornadoes," said McGovern. "Long term, this will dramatically improve the prediction of tornadoes as we will better understand why some storms generate tornadoes and others do not. With a more accurate prediction, and hopefully improved lead time on the warnings, we hope more people will heed the warnings and thus reduce loss of life and property."

McGovern's research is funded by the National Science Foundation's (NSF) Faculty Early Career Development Program, the agency's most prestigious award that supports junior faculty "who exemplify the role of teacher-scholars through outstanding research, excellent education and the integration of education and research within the context of the mission of their organizations."

Initially, McGovern and her team used observational data from a 20-year-old storm to create more than 250 storm simulations, but the simulations did not produce enough data. The researchers then began using supercomputers such as Kraken, a TeraGrid computer that is currently the eighth-fastest computer in the world, to generate simulations.

Kraken is funded by the National Science Foundation and managed by the University of Tennessee's (UT) National Institute for Computational Sciences. It is located at the Oak Ridge National Laboratory in Tennessee.

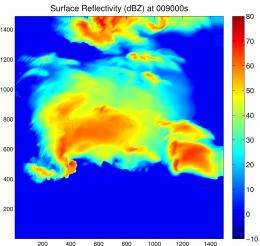

McGovern and her team use Kraken to produce high-resolution simulations of supercell thunderstorms, powerful weather events that have a deep, continuously rotating updraft, a region where the wind is blowing upward. Supercells are the most intense type of thunderstorm and generate the strongest tornadoes.

So far, the team has produced about 50 simulations; they plan to produce about 100.

Each simulation generates enormous amounts of data; nearly 50 terabytes have been produced from the nearly 50 simulations. Because of that volume, and to better assess the causes of tornadoes, the team uses another supercomputer to mine the data.

"Data mining enables us to efficiently identify patterns in very large data sets," said McGovern, describing the process by which the team analyzes storm variables. "Since each simulation generates 1 terabyte of data and we have 50 such simulations, we have too much data for a human to examine by hand. As such, data mining plays a crucial role."

Nautilus, a powerful computer for visualizing and analyzing large datasets at the Remote Data Analysis and Visualization Center at UT, performs the data mining. The supercomputer is especially useful for research that loads and processes large data files on the order of 4 terabytes.

Using Nautilus, McGovern and her team can isolate and analyze variables as well as identify critical spatiotemporal interactions, that is, the interactions of variables in space and time that indicate severe weather or tornadic storms.

"In the short term, we intend to continue to mine these simulations with the goal of fundamentally transforming our understanding of 'tornadogenesis,'" said McGovern. "In the longer term, we would like to bring our findings and methods to the weather forecasters who actually issue the tornado warnings. We would like to develop an interface that provides them with immediate and useful information, which they can use to improve their tornado warnings."

Provided by National Science Foundation