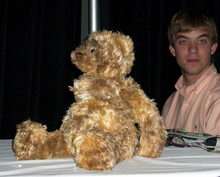

Up Close with MIT's Huggable Robot

The high-tech stuffed animal robot dubbed Huggable uses Microsoft software to connect with kids and medical patients.

Once named one of Wired's 50 best robots ever, Huggable, the Massachusetts Institute of Technology Media Lab's therapeutic teddy bear robot, made a rare public appearance at the Robo Business 2007 conference and expo this week.

Under development since January 2005, the plush, adorable teddy bear robot was on display to help Microsoft's Tandy Trower demonstrate how companies and researchers are using Microsoft's new Robotics Studio development kit to add functionality to new and existing robots.

Joined by a team of young MIT student researchers, Dan Stiehl, a Media Lab research assistant and the Huggable project lead, explained how Huggable can act as an avatar, letting a user see what the robot sees and interact with the children or patients sitting in front of it. (Stiehl works under sociable robotics legend Cynthia Breazeal, director of the MIT Media Lab's Robotic Life Group.) In the Robo Business demo, two of Stiehl's students played the part of children, sitting in front of the bear, while Stiehl and another researcher controlled the robot through a Web-based interface.

Built as an MSR (Microsoft Robotics Studio) service, the Web interface shows what Huggable sees through its camera eyes, its relative body position, and even whether it is being moved, and it lets the user control the robot's still-limited interactions. Huggable cannot get up and walk around, but according to Stiehl it is a very complex robot, featuring a full-body sensor skin, sensors and tensiometers in its legs and feet, speakers and a microphone in its head, a camera in each eye (one color and one black-and-white), and motors in the neck so it can turn its head. Future versions will add motors to the eyebrows, ears, shoulders, and neck. The additional neck motors should give Huggable a full eight degrees of head movement, so it can tilt its head to the side.

The current robot showed off some impressive functionality during the demonstration. Using the Web-based controls, Stiehl and his team had the robot look at each researcher sitting in front of it, turning its head and then labeling that position with the researcher's name. From then on, they could make the robot quickly look from one researcher to another simply by selecting a position label.

While Stiehl and company used MSR for the control interface, much of the robot's underlying code is written in Java and was developed on the Mac. This code base is actually 10 years old; Huggable's lineage includes the sociable robots Cog and Leonardo (the latter a joint project between Breazeal and movie special-effects whiz Stan Winston).

Unlike most research robots, Huggable may have a life outside the lab. Stiehl told me that while the goal of the current, high-powered Huggable is to explore larger questions, such as how a robot can operate in a hospital environment, interact with children, and gather data, numerous Huggable technologies could be turned into standalone products and applications. One such product might be a "Squeeze Doll," which could help patients show where they are experiencing pain by squeezing the corresponding area on a robot equipped with air bladder sensors. Stiehl also foresees a lower-cost version of Huggable eventually making it to market.

Still, if you're in the U.S., don't get too excited. The Media Lab and the Huggable project are funded in part by the Highlands and Islands Enterprise in Scotland, and the first prototypes will likely be built and tested there. One of the most likely early applications is language education, with educators using the robot to help teach Gaelic to young students.

Some have compared Huggable with Paro, the therapeutic robot seal pup from Japan's National Institute of Advanced Industrial Science and Technology. Stiehl, however, told me there are important differences. Paro is focused on responding to touch, but everything it does "stays within the robot." Huggable, on the other hand, collects data and allows people to communicate through it. It can be a "hospital team member."

Copyright 2007 by Ziff Davis Media, Distributed by United Press International