Virtual robotization for human limbs

Recent advances in computer gaming technology are making games increasingly immersive. Gesture input devices, for example, synchronize a player's movements with those of the on-screen character. Some entertainment systems now also use haptic displays: devices attached to the player's body that provide so-called 'vibrotactile feedback', such as synthesizing the sensation of being hit during a combat game.

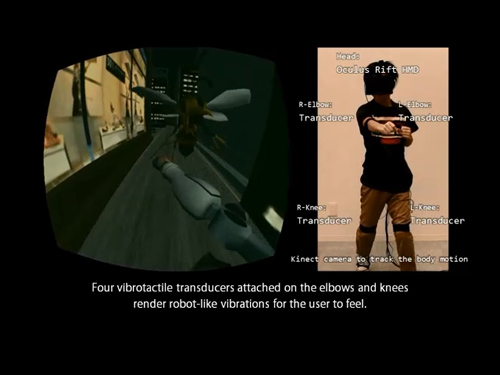

Jointonation, a new virtual reality 'robotization' gaming system developed by Hiroyuki Kajimoto of the University of Electro-Communications in Tokyo and co-workers, lets players discover what it feels like to become a robot. The simulation combines visual, auditory and tactile sensations to 'transform' the player's arms and legs into metallic limbs.

Vibrotactile feedback makes use of sensory receptors in the skin that respond to mechanical stimuli such as pressure and distortion. By stimulating these receptors with suitable vibrations, it is possible to 'fool' the brain into perceiving sensations that are not physically there. Kajimoto and his team synchronized the limb movements of an on-screen robot with the player's three-dimensional limb movements. They then took vibration data recorded from the arm joint of an industrial robot, modelled it, and combined it with recordings of robotic sounds to simulate the feeling of robotic limbs through vibrators attached to the player's elbows and knees.
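The movement-to-vibration pipeline described above can be sketched roughly as follows. This is a hypothetical illustration, not the model used by Kajimoto's team: it assumes tracked 3D positions for the shoulder, elbow and wrist, estimates elbow angular velocity by finite difference, and scales a stored vibration snippet by joint speed with a simple saturating linear gain. The function names, the `max_omega` cap and the template samples are all assumptions made for illustration.

```python
import math
from typing import Sequence

# A 3D point from a (hypothetical) motion tracker, in metres.
Vec3 = tuple[float, float, float]


def joint_angle(a: Vec3, b: Vec3, c: Vec3) -> float:
    """Angle in radians at joint b between segments b->a and b->c."""
    u = [ai - bi for ai, bi in zip(a, b)]
    v = [ci - bi for ci, bi in zip(c, b)]
    norm = math.hypot(*u) * math.hypot(*v)
    if norm == 0.0:
        raise ValueError("limb segments must have non-zero length")
    cos_theta = sum(x * y for x, y in zip(u, v)) / norm
    # Clamp to [-1, 1] so rounding error cannot make acos fail.
    return math.acos(max(-1.0, min(1.0, cos_theta)))


def angular_velocity(prev_angle: float, angle: float, dt: float) -> float:
    """Finite-difference joint angular velocity in rad/s."""
    return (angle - prev_angle) / dt


def vibration_frame(
    template: Sequence[float], omega: float, max_omega: float = 3.0
) -> list[float]:
    """Scale a stored vibration snippet by joint speed.

    Assumption: a linear gain that saturates at max_omega, so a
    stationary joint produces no vibration. The real system may differ.
    """
    gain = min(abs(omega) / max_omega, 1.0)
    return [gain * s for s in template]


if __name__ == "__main__":
    # Elbow angle from shoulder, elbow and wrist positions at two frames.
    shoulder, elbow = (0.0, 0.0, 0.0), (1.0, 0.0, 0.0)
    a0 = joint_angle(shoulder, elbow, (2.0, 0.0, 0.0))  # straight arm
    a1 = joint_angle(shoulder, elbow, (1.0, 1.0, 0.0))  # bent 90 degrees
    omega = angular_velocity(a0, a1, dt=0.5)
    # Hypothetical samples standing in for a recorded robot-joint vibration.
    print(vibration_frame([0.0, 0.8, -0.8, 0.4], omega, max_omega=2 * math.pi))
```

In the usage example the elbow bends from straight to 90 degrees in half a second, giving an angular velocity of magnitude pi rad/s; with `max_omega` set to 2*pi, the template is played back at half amplitude. Tying amplitude to joint speed is just one simple way to keep the vibration synchronized with movement.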

In trials of Jointonation, the combination of visual, auditory and haptic sensations proved very effective at producing 'robot-like' feelings in players' limbs.

More information: Kurihara, Y., Takei, S., Nakai, Y., Hachisu, T., Kuchenbecker, K.J. & Kajimoto, H. Haptic robotization of the human body by data-driven vibrotactile feedback. Entertainment Computing 10 (2014)

Provided by UEC Research Portal