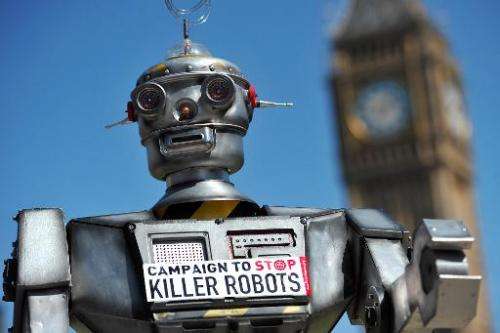

UN talks take aim at 'killer robots' (Update)

Armies of Terminator-like warriors fan out across the battlefield, destroying everything in their path, as swarms of fellow robots rain fire from the skies.

That dark vision could all too easily shift from science fiction to fact unless such weapons are banned before they leap from the drawing board to the arsenal, campaigners warn.

On Tuesday, governments began the first-ever talks exclusively on so-called "lethal autonomous weapons systems", which opponents prefer to call "killer robots".

"All too often international law only responds to atrocities and suffering once it has happened," said Michael Moeller, head of the UN Conference on Disarmament.

"You have the opportunity to take pre-emptive action and ensure that the ultimate decision to end life remains firmly under human control," he told the meeting in Geneva.

That was echoed by the International Committee of the Red Cross, guardian of the Geneva Conventions on warfare.

"There is a sense of deep discomfort with the idea of allowing machines to make life-and-death decisions on the battlefield with little or no human involvement," said Kathleen Lawand, head of its arms unit.

The four-day meeting aims to pave the way for more in-depth talks in November.

"The only answer is a pre-emptive ban," said Human Rights Watch arms expert Steve Goose.

UN-brokered talks have done that before: a ban on blinding laser weapons, agreed in 1995, took effect in 1998, before the weapons ever reached the battlefield.

Automated weapons are already deployed worldwide.

The best-known are drones, unmanned aircraft whose human controllers pull the trigger from a distant base. Controversy rages, especially over the civilian casualties caused when the United States strikes alleged Islamist militants.

Perhaps closest to the Terminator of Arnold Schwarzenegger's action films is a Samsung sentry robot used in South Korea, able to spot unusual activity, quiz intruders and, when authorised by a controller, shoot them.

Other countries in the research vanguard include Britain, Israel, China, Russia and Taiwan.

As revolutionary as gunpowder

But it is the next step, the power to kill without a human handler, that rattles opponents the most.

Experts predict that military research could produce such machines within 20 years.

"Lethal autonomous weapons systems are rightly described as the next revolution in military technology, on par with the introduction of gunpowder and nuclear weapons," said Pakistan's UN ambassador Zamir Akram, warning that they would threaten world peace and security.

German ambassador Michael Biontino said human control was the bedrock of international law.

"Even in times of war, human beings cannot be made simple objects of machine action," he said.

The goal, diplomats said, is not to ban the technology outright.

"We need to keep in mind that these are dual technologies and could have numerous civilian, peaceful and legitimate uses. This must not be about restricting research in this field," said French ambassador Jean-Hugues Simon-Michel, chairman of the talks.

Robots can potentially be used in firefighting and bomb disposal, while robot vacuum cleaners and lawnmowers are already common.

"We believe that such technology is not only useful, but also contributes to a safe and sound life for us all," said Japan's ambassador Toshio Sano.

Campaigner Noel Sharkey, emeritus professor of robotics and artificial intelligence at Britain's University of Sheffield, said autonomy itself is not the problem.

"There is just one thing that we don't want, and that's what we call the kill function," he said.

One aim is to start sketching out the definition of a robot weapon.

US delegate Stephen Townley said the Terminator image was misleading.

"That is a far cry from what we should be focusing on, which is the likely trajectory of technological development, not images from popular culture," he said.

"The United States believes it is premature to determine where these discussions might or should lead," he added.

In 2012, Washington imposed a 10-year human control requirement on automated weapons, a move campaigners welcomed even though they said it should go further.

Supporters of automated weapons say they have life-saving potential in warfare, for example by getting closer than troops can to assess a threat properly, without letting emotion cloud decision-making.

But that is precisely what worries critics.

"If we don't inject a moral and ethical discussion into this, we won't control warfare," said Jody Williams, who won the 1997 Nobel Peace Prize for her campaign for a treaty banning landmines.

© 2014 AFP