Personal touch is key to gesture control software: Design-it-yourself gestures may be key to future computer interfaces

(Phys.org) —A team of experts in human-computer interaction at the University of St Andrews have found that gesture-based Natural User Interfaces (used to control televisions, computers and games consoles like the Wii and Xbox 360) just need the personal touch to make them more effective.

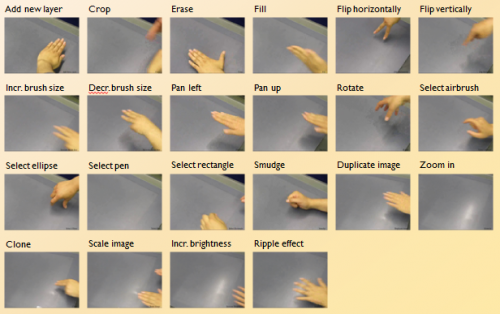

A key question for Natural User Interfaces is whether people will be able to remember the many gestures recognised by the devices.

The St Andrews team found that users could remember 44 per cent more gestures when they designed the gestures themselves, in comparison with gestures pre-programmed by professionals.

The researchers carried out three controlled experiments testing people's ability to remember gestures, and analysed the types of errors they made. Controlling for learning time, users were significantly better at remembering gestures they had created themselves.

Until now, designers and researchers of gestural interfaces have focused on designing one set of gestures that would work for everyone. The new experimental results call this approach into question.

Designing personalised gestures was also perceived to be more creative and more fun. Personalised gestures can also reflect the user's cultural background. In contrast, a pre-programmed gesture can mean one thing in one culture but make no sense in another.

Dr Miguel Nacenta, Lecturer in the School of Computer Science at the University, said: "Our work could make the difference between whether this technology becomes commonplace or fails to captivate the market.

"If the gesture-based interface is clumsy and frustrating, consumers will not be willing to ditch their remote controls for it."

Gesture-based Natural User Interfaces are becoming increasingly popular, and products pushing these technologies include Samsung SmartTVs, Microsoft's Kinect and LEAP Motion.

However, one drawback is the difficulty users have in learning and remembering pre-programmed gesture patterns.

Current gesture-based interfaces, like those in Samsung SmartTVs, are based on how designers see interaction. The University of St Andrews research suggests that involving users in the design of gestures is a promising approach.

The researchers will present their work at the CHI 2013 conference (the ACM Conference on Human Factors in Computing Systems) in Paris on April 30. CHI is the principal forum for outstanding research in human-computer interaction.

More information: Miguel A. Nacenta, Yemliha Kamber, Yizhou Qiang and Per Ola Kristensson. "Memorability of Pre-designed and User-defined Gesture Sets." Full paper in Proceedings of the Thirty-first Annual ACM SIGCHI Conference on Human Factors in Computing Systems (CHI '13), 2013 (10 pages, to appear).

Provided by University of St Andrews