Why do birds crash into solar panels?

Billions of birds die annually from collisions with windows, communication towers, wind turbines, and other human-made objects. One reason is that birds see a reflection of the sky in the object and think they're flying into an unobstructed path.

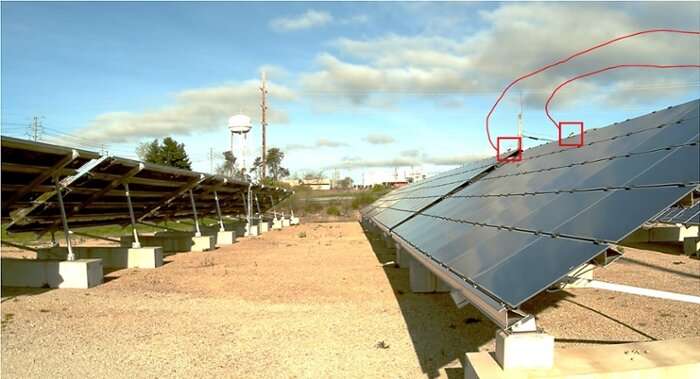

This is even a problem for solar panel facilities, which see up to 138,000 bird deaths per year in the US from collisions with equipment. Though damage to the solar panels is minimal, officials worry about the impact these structures have on local wildlife. To combat the problem, the Department of Energy (DOE) has awarded Argonne National Laboratory $1.3 million to develop a system that can automatically monitor bird activity.

Adam Szymanski, a software engineer at Argonne, spoke with us about the challenges the team faces, as well as how a model designed to track drones is already working to keep an eye on birds.

Too much to do

At the moment, the only way to track bird deaths at solar panel facilities is to have a human walk around and collect the bodies. This limits how much data researchers can gather. That's why Szymanski and his colleagues are working on a means to automate the process.

"We've come up with a camera system that can automatically detect a moving object in the area and then classify if it's a bird or not," says Szymanski. "Then, the system should be able to classify if the bird collided with anything or what other activity it's actually doing. This is all done using machine learning and computer vision."
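As a rough illustration of the first step Szymanski describes, spotting a moving object before any classification happens, here is a minimal sketch using simple frame differencing. This is an assumption made for illustration only: the article does not say which motion-detection method the Argonne system uses. The function names, the threshold values, and the representation of a frame as a nested list of grayscale values are all hypothetical.

```python
def changed_pixels(prev_frame, frame, threshold=30):
    """Return (row, col) positions whose brightness changed by more than threshold.

    Frames are assumed to be equally sized nested lists of grayscale values (0-255).
    """
    return [
        (r, c)
        for r, (prev_row, row) in enumerate(zip(prev_frame, frame))
        for c, (p, v) in enumerate(zip(prev_row, row))
        if abs(v - p) > threshold
    ]


def motion_box(prev_frame, frame, threshold=30, min_pixels=2):
    """Return the bounding box (top, left, bottom, right) of the changed region.

    Returns None when fewer than min_pixels pixels changed, which is treated
    here as "no moving object" (a hypothetical noise cutoff).
    """
    pixels = changed_pixels(prev_frame, frame, threshold)
    if len(pixels) < min_pixels:
        return None
    rows = [r for r, _ in pixels]
    cols = [c for _, c in pixels]
    return (min(rows), min(cols), max(rows), max(cols))
```

In a pipeline like the one described, the region inside the returned box would then be handed to a trained classifier to decide whether it shows a bird. That classifier is not sketched here.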

The researchers are relying on high-performance computing (HPC) to train this bird-spotting system. Argonne Blues is being tapped for two important jobs: processing the data and training classification models.

"That's one great benefit of being at Argonne," says Szymanski. "We have a fairly large HPC center right next door to my building."

Currently, the team is working with ten hours of footage per day from eight cameras across a one-month timeframe. This is then broken down into thousands of five-minute clips, which are processed in parallel. The resulting data is then used to create a machine learning model that can detect if a bird is in a frame or not.
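To make the clip-splitting step concrete, here is a minimal sketch of cutting a recording into five-minute segments and handing them to a pool of workers. It is not the team's actual pipeline: `process_clip` is a hypothetical placeholder for the real per-clip work, and a thread pool stands in for whatever job scheduling an HPC cluster would use.

```python
from concurrent.futures import ThreadPoolExecutor

CLIP_SECONDS = 5 * 60  # five-minute clips, as described in the article


def split_into_clips(total_seconds, clip_seconds=CLIP_SECONDS):
    """Return (start, end) offsets in seconds that cover the whole recording."""
    return [
        (start, min(start + clip_seconds, total_seconds))
        for start in range(0, total_seconds, clip_seconds)
    ]


def process_clip(clip):
    """Hypothetical stand-in for per-clip processing; returns the clip length."""
    start, end = clip
    return end - start


def process_recording(total_seconds, workers=4):
    """Split a recording into clips and process them concurrently."""
    clips = split_into_clips(total_seconds)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map returns results in the same order as the input clips
        return list(pool.map(process_clip, clips))
```

Under these assumptions, one ten-hour recording yields 120 clips. Because each clip is independent of the others, the work spreads naturally across many processors.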

All of this is building toward an edge computing model which should be able to work within the camera itself. Processing this video data later on an offsite machine would defeat the purpose of devising a more efficient means of tracking birds, so it's important that this kind of work eventually takes place in real time where the camera is.

Argonne Blues isn't the only resource the researchers are drawing on. In fact, the technology behind this bird identification system is based on a model that was built to detect drones flying in the sky.

Eye to the sky

To a computer, picking a drone out of the sky is not much different from spotting a bird. In both cases, the model needs to be trained to sort the objects it sees into categories (for example, drone or not a drone) and to do so in real time at the edge.

While many drone detection systems try to locate drones via their radio signals, this approach doesn't always work, since drones don't need to transmit signals at all times. That's why Szymanski helped create a computing model that uses upward-facing cameras to monitor the skies for drones.

Despite the similarities in the two tasks, Szymanski is quick to point out the differences.

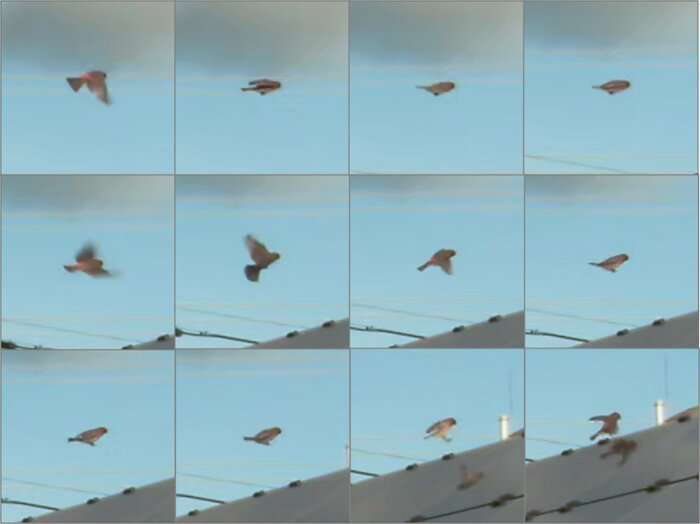

"Drones usually have some kind of propeller that a computer can pick out," says Szymanski. "A bird flaps its wings as it's flying, so when its wings are out it's fairly recognizable as a bird. But when its wings are in (for example, when gliding) it essentially just looks like a black dot, so it becomes harder to classify."

The new bird detection model also differs in what it looks at. While the drone model monitored an empty sky, the cameras at solar facilities will be directed at the panels themselves. This brings more objects into the camera's line of "sight," which complicates the detection process. But Szymanski believes all this hard work is for a good cause.

The number of birds that perish at solar panel facilities pales in comparison to other environmental calamities. But for a long time, we humans have put our needs above those of the natural world. Projects like this one, which combine conservation with renewable energy, are vital because they are a tangible recognition that nature's wellbeing shouldn't be an afterthought.

More information: This story is republished courtesy of Science Node.

Provided by Science Node