Shake, rattle and code

Southern California defines cool. The perfect climes of San Diego, the glitz of Hollywood, the magic of Disneyland. The geology is pretty spectacular, as well.

"Southern California is a prime natural laboratory to study active earthquake processes," said Tom Jordan, a professor in the Department of Earth Sciences at the University of Southern California (USC). "The desert allows you to observe the fault system very nicely."

The fault system to which he is referring is the San Andreas, among the more famous fault systems in the world. With roots deep in Mexico, it scars California from the Salton Sea in the south to Cape Mendocino in the north, where it then takes a westerly dive into the Pacific.

Situated as it is at the heart of the San Andreas Fault System, Southern California does make an ideal location to study earthquakes. That it is home to nearly 24 million people makes for a more urgent reason to study them.

Jordan and a team from the Southern California Earthquake Center (SCEC) are using the supercomputing resources of the Argonne Leadership Computing Facility (ALCF), a U.S. Department of Energy (DOE) Office of Science User Facility, to advance modeling for the study of earthquake risk and how to reduce it.

Headquartered at USC, the center is one of the largest collaborations in geoscience, engaging over 70 research institutions and 1,000 investigators from around the world.

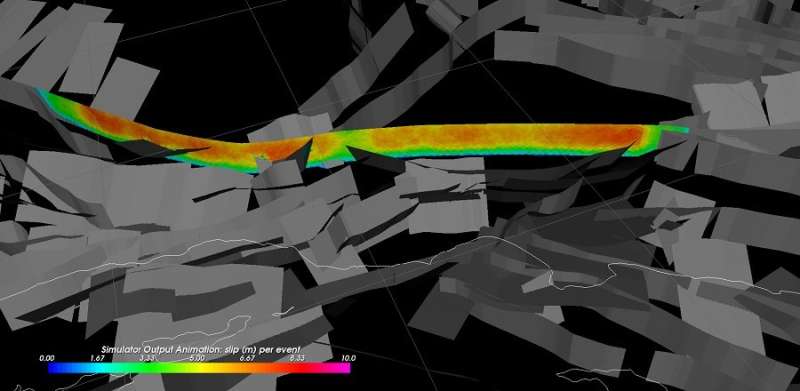

The team relies on a century's worth of data from instrumental records, as well as regional and national seismic hazard models, to develop new tools for understanding earthquake hazards. Working with the ALCF, it has used this information to improve its earthquake rupture simulator, RSQSim.

RSQ is a reference to rate- and state-dependent friction in earthquakes—a friction law that can be used to study the nucleation, or initiation, of earthquakes. RSQSim models both nucleation and rupture processes to understand how earthquakes transfer stress to other faults.
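
For readers who want the underlying relation, a commonly cited textbook form of rate- and state-dependent friction (the Dieterich-Ruina law with the "aging" form of state evolution) is sketched below. The symbols do not appear in this article and are introduced here as assumptions: $\mu$ is the friction coefficient, $V$ the slip rate, $\theta$ a state variable, $\mu_0$ and $V_0$ reference values, $D_c$ a characteristic slip distance, and $a$ and $b$ empirical constants. RSQSim's internal formulation may differ in detail.

$$
\mu = \mu_0 + a \ln\!\left(\frac{V}{V_0}\right) + b \ln\!\left(\frac{V_0\,\theta}{D_c}\right),
\qquad
\frac{d\theta}{dt} = 1 - \frac{V\,\theta}{D_c}
$$

At steady sliding the state variable settles to $\theta = D_c / V$, which gives

$$
\mu_{ss} = \mu_0 + (a - b) \ln\!\left(\frac{V}{V_0}\right)
$$

When $a < b$, friction drops as slip speeds up. That weakening is what allows slip on a fault patch to accelerate into an earthquake.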

ALCF staff were instrumental in adapting the code to Mira, the ALCF's 10-petaflop supercomputer. Mira allows for the larger simulations required to model earthquake behaviors in very complex fault systems, like the San Andreas, and those simulations led to the team's biggest discovery.

The SCEC, in partnership with the U.S. Geological Survey, had already developed an empirically based model that integrates theory, geologic information and geodetic data, like GPS displacements, to determine spatial relationships between faults and slippage rates of the tectonic plates that created those faults.

A newer version of this more traditional model is considered the best representation of California earthquake ruptures, but the picture it portrays is still not as accurate as researchers would hope.

"We know a lot about how big earthquakes can be, how frequently they occur and where they occur, but we cannot predict them precisely in time," notes Jordan.

The team turned to Mira to run RSQSim to determine whether it could achieve more accurate results more quickly. A physics-based code, RSQSim produces long-term synthetic earthquake catalogs that comprise dates, times, locations and magnitudes for predicted events.
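
To make the idea of a synthetic catalog concrete, here is a minimal Python sketch of how such a catalog might be represented and summarized. Everything in it is an assumption made for illustration: the `CatalogEvent` fields, the helper names, and the choice to store time as years since the start of the simulation are hypothetical and do not reflect RSQSim's actual output format.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class CatalogEvent:
    """One simulated earthquake (hypothetical record layout)."""
    time_years: float   # time since the start of the simulation, in years
    latitude: float     # epicenter latitude, degrees
    longitude: float    # epicenter longitude, degrees
    magnitude: float    # event magnitude


def annual_rate(catalog: Sequence[CatalogEvent],
                min_magnitude: float,
                duration_years: float) -> float:
    """Average number of events per year at or above min_magnitude."""
    if duration_years <= 0:
        raise ValueError("duration_years must be positive")
    count = sum(1 for event in catalog if event.magnitude >= min_magnitude)
    return count / duration_years


def mean_recurrence_interval(catalog: Sequence[CatalogEvent],
                             min_magnitude: float,
                             duration_years: float) -> float:
    """Average number of years between events at or above min_magnitude."""
    rate = annual_rate(catalog, min_magnitude, duration_years)
    return float("inf") if rate == 0 else 1.0 / rate


# Made-up events for illustration only; these are not simulation results.
example_catalog = [
    CatalogEvent(time_years=120.5, latitude=34.1, longitude=-117.3, magnitude=7.2),
    CatalogEvent(time_years=480.0, latitude=33.4, longitude=-115.9, magnitude=6.4),
    CatalogEvent(time_years=910.2, latitude=35.0, longitude=-119.0, magnitude=7.8),
]
```

Because a simulated catalog can span far more time than the instrumental record, even rare large events appear often enough for simple counts like these to be meaningful.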

Using simulation, researchers impose stresses upon some representation of a fault system, changing the stress throughout much of the system and thus changing the way future earthquakes occur. Trying to model these powerful stress-mediated interactions is particularly difficult with complex systems and faults like San Andreas.

"We just let the system evolve and create earthquake catalogs for a hundred thousand or a million years. It's like throwing a grain of sand in a set of cogs to see what happens," explained Christine Goulet, a team member and executive science director for special projects with SCEC.

The end result is a more detailed picture of the possible hazard, which forecasts a sequence of earthquakes of various magnitudes expected to occur on the San Andreas Fault over a given time range.

The group initially tried to calibrate RSQSim's numerous parameters to replicate the model designed by the SCEC and the U.S. Geological Survey, but eventually decided to run the code with its default parameters. While the initial intent was to evaluate the magnitude of differences between the models, the researchers discovered instead that both models agreed closely on their forecasts of future seismic activity.

"So it was an aha moment. Eureka," recalled Goulet. "The results were a surprise because the group had thought carefully about optimizing the parameters. The decision not to change them from their default values made for very nice results."

The researchers noted that the mutual validation of the two approaches could prove extremely productive in further assessing seismic hazard estimates and their uncertainties.

Information derived from the simulations will help the team compute the strong ground motions generated by faulting that occurs at the surface, the characteristic shaking that is synonymous with earthquakes. To do this, the team couples the two earthquake rupture forecasts, one from the SCEC-U.S. Geological Survey model and one from RSQSim, with models that represent the way seismic waves propagate through the system. These models involve standard equations, called ground motion prediction equations, used by engineers to calculate the shaking levels from earthquakes of different sizes and locations.
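
The general shape of a ground motion prediction equation can be illustrated with a toy Python function. This is only a sketch: the functional form is a simplified, generic one, and the coefficients are hypothetical placeholders chosen for illustration. They are not taken from any published equation or from the team's work, and the result should not be read as a real shaking estimate.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class ToyCoefficients:
    """Placeholder coefficients (hypothetical, not from any published model)."""
    c0: float = -1.0      # constant offset
    c_mag: float = 0.9    # how quickly shaking grows with magnitude
    c_dist: float = 1.2   # how quickly shaking decays with distance
    h_km: float = 6.0     # depth-like term that keeps the log finite at zero distance


def toy_ln_shaking(magnitude: float,
                   distance_km: float,
                   coeffs: ToyCoefficients = ToyCoefficients()) -> float:
    """Natural log of a shaking measure for a given magnitude and distance.

    Toy form: ln(Y) = c0 + c_mag * M - c_dist * ln(sqrt(R^2 + h^2))
    """
    if distance_km < 0:
        raise ValueError("distance_km must be non-negative")
    effective_distance = math.sqrt(distance_km ** 2 + coeffs.h_km ** 2)
    return (coeffs.c0
            + coeffs.c_mag * magnitude
            - coeffs.c_dist * math.log(effective_distance))
```

The sketch captures only the two qualitative behaviors described above: predicted shaking rises with earthquake size and falls off with distance from the rupture. Equations used in practice typically add further terms, such as local site conditions.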

"These experiments show that the physics-based model RSQSim can replicate the seismic hazard estimates derived from the empirical model, but with far fewer statistical assumptions," noted Jordan. "The agreement gives us more confidence that the seismic hazard models for California are consistent with what we know about earthquake physics. We can now begin to use these physics to improve the hazard models."

Provided by Argonne National Laboratory